Industrial Light and Magic, the world’s biggest effects company, raised the bar even further to bring giant robots and other effects to the screen for Michael Bay’s “Transformers: Revenge of the Fallen”.

It’s bigger than the first film. Much bigger. Huge, to be precise. Enormous, colossal. “We used 95 per cent of our studio’s render farm,” says Scott Farrar, Visual Effects Supervisor at Industrial Light and Magic and responsible for the visual effects on “Transformers: Revenge of the Fallen”, the Transformers sequel once again directed by Michael Bay.

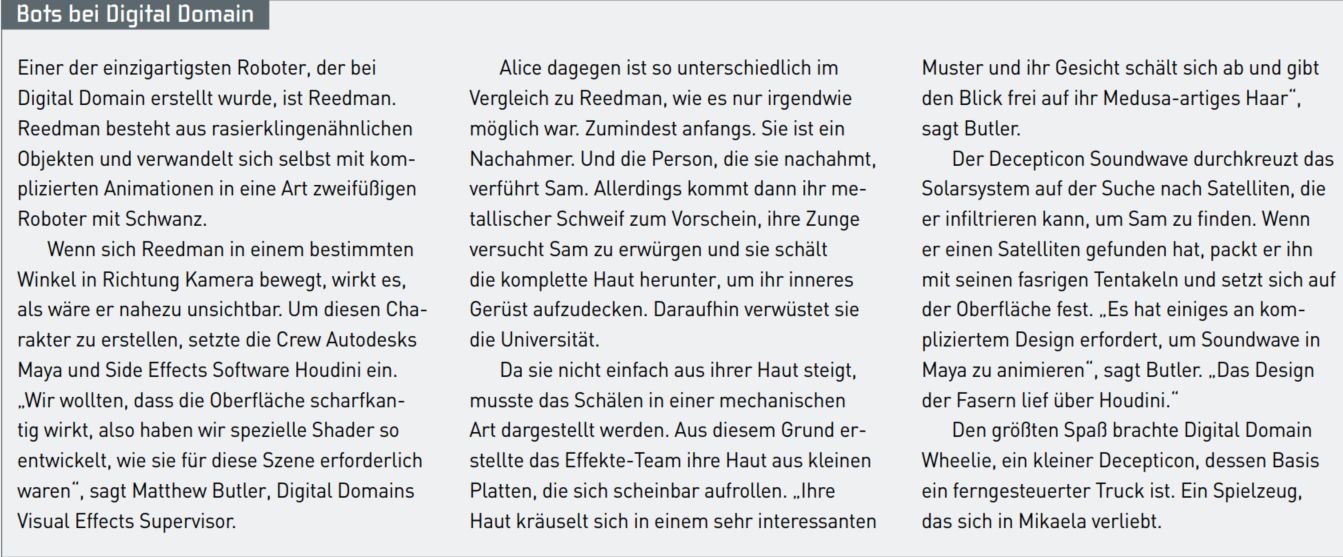

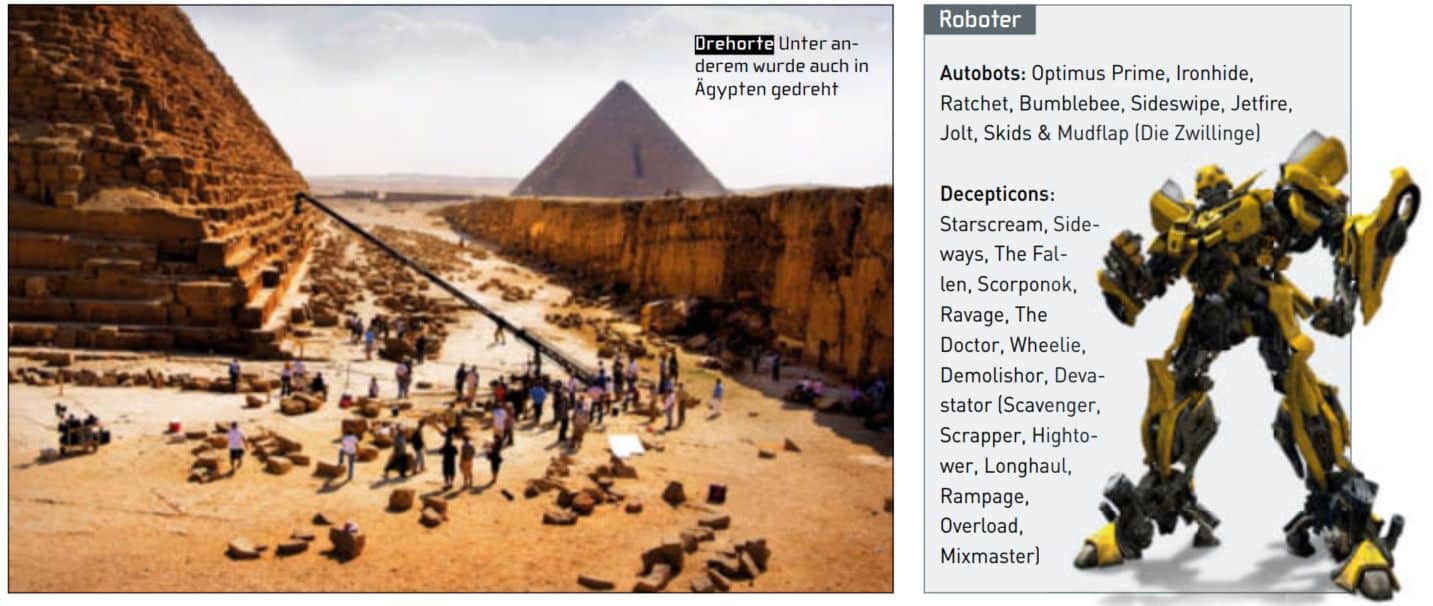

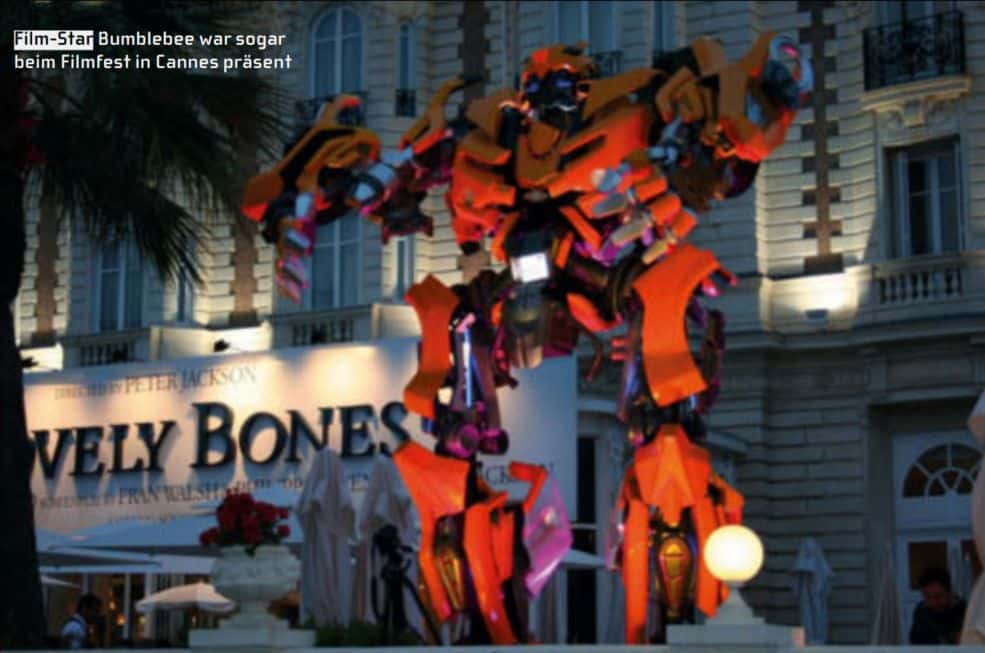

The DreamWorks film once again sends the good Autobot robots into battle against the evil Decepticons. The main protagonists on the human side are once again Shia LaBeouf as Sam Witwicky and Megan Fox as his girlfriend Mikaela Banes. The Autobots Optimus Prime and Bumblebee are also back, as is the Decepticon Megatron.

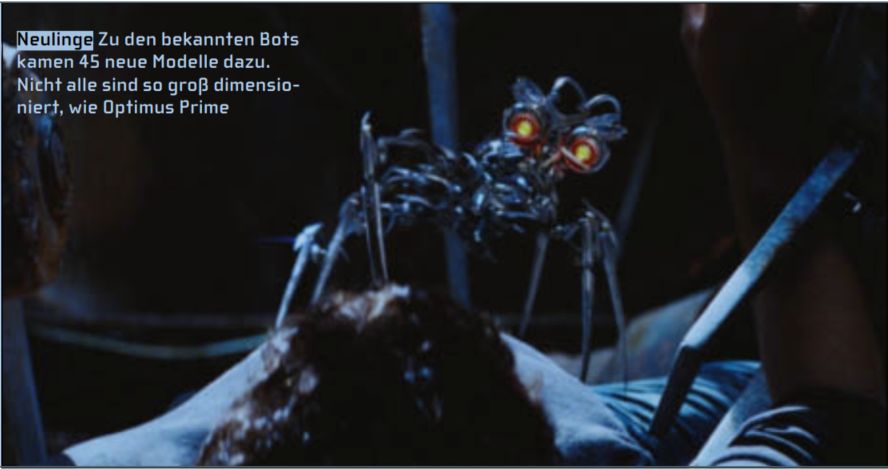

This time, the battle moves beyond Los Angeles and takes place in a total of seven states and three countries, including Egypt and Jordan. Forty-five new robots join the two opposing factions alongside the robots from the first film.

“We needed between 16 and 20 terabytes of hard drive space to render the first film,” says Farrar. “This time it’s 140 terabytes.”

ILM created 560 shots for the film, featuring its 60 robots. Some of these shots were 500 frames long and showed three or four large robots at once, which made them complicated and extremely time-consuming to render. In addition, Digital Domain created several robots: the Decepticon Reedman, the Microcons, Soundwave, Wheelie, the Kitchenbots and Alice, a robot posing as a human.

To help create the 60 robots, ILM set up a digital model shop where modellers could build a library of robot parts. Anyone creating a character could then use any of these parts, each of which already had a detailed texture map. If a part in the library was changed, every robot that used it changed automatically. The modellers built the characters in Maya and ILM’s in-house software Zeno.
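The shared-library idea can be illustrated with a minimal Python sketch. It assumes that robots store references (part names) into one central library rather than private copies, so publishing a new version of a part updates every robot that uses it. The classes `Part`, `PartLibrary` and `Robot` are hypothetical and do not reflect ILM’s actual tools, Maya or Zeno.

```python
from dataclasses import dataclass, field


@dataclass
class Part:
    """A reusable robot component and its texture map (fields are illustrative)."""
    name: str
    texture_map: str


@dataclass
class PartLibrary:
    """Hypothetical central part store: robots keep part names, never copies."""
    parts: dict = field(default_factory=dict)

    def publish(self, part: Part) -> None:
        # Adding a part, or replacing one under the same name, makes the new
        # version visible to every robot that references that name.
        self.parts[part.name] = part

    def get(self, name: str) -> Part:
        return self.parts[name]


@dataclass
class Robot:
    """A character assembled from library parts (hypothetical class)."""
    name: str
    library: PartLibrary
    part_names: list = field(default_factory=list)

    def use(self, part_name: str) -> None:
        # Borrow an existing library part, e.g. to fill a hole in the model.
        if part_name not in self.library.parts:
            raise KeyError(f"{part_name!r} is not in the library")
        self.part_names.append(part_name)

    def resolved_parts(self) -> list:
        # Parts are looked up at access time, so library edits propagate.
        return [self.library.get(n) for n in self.part_names]


if __name__ == "__main__":
    lib = PartLibrary()
    lib.publish(Part("wiper_arm", "wiper_v1.tex"))
    bumblebee = Robot("Bumblebee", lib)
    prime = Robot("Optimus Prime", lib)
    bumblebee.use("wiper_arm")
    prime.use("wiper_arm")
    # Update the shared part once...
    lib.publish(Part("wiper_arm", "wiper_v2.tex"))
    # ...and both robots now see the new texture.
    print([p.texture_map for p in bumblebee.resolved_parts()])
    print([p.texture_map for p in prime.resolved_parts()])
```

Because parts are resolved when they are read rather than copied when they are assigned, an animator borrowing a part to plug a hole and a modeller later updating that part never need to coordinate.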

Animation Director Scott Benza headed a team of 50 animators at ILM, 20 of whom had already worked on the first Transformers film. The large bots, made up of many parts, were almost always animated by hand. Rigs from the first instalment were reused as well, which made it possible to combine components as desired.

“If someone was working on a shot and came across a new hole in one of the robots, they could simply take a part from another robot and fill the gap,” says Jeff White, second visual effects supervisor.

In an emotional scene with Sam (Shia LaBeouf), for example, a flood of tears pours from Bumblebee’s eyes. The robot model had not been designed to shed tears, but because parts could be combined, an animator took a few parts, added windscreen wipers and used the wipers’ washer spray to create the tears.

Of the 60 characters ILM created, 45 were new, and 40 had at least one line of dialogue and had to be animated accordingly. The largest was Devastator, a robot more than 30 metres tall made up of seven other robots.

Also new are Arcee, a female Autobot made up of three motorbikes, and “Doc”, a small Decepticon who transforms into a microscope. Jetfire is an old robot who walks with a cane and was originally a Decepticon. He transforms from an SR-71 Blackbird, a spy plane dating from the 1960s. He is over 12 metres tall and built from old mechanical components. Even though Bumblebee cries in the scene described above, the aged Jetfire probably shows the most emotional and human traits of all the non-human characters.

“They breathe, spit, spray sparks and produce gas and smoke,” says White about the robots in the film. “We wouldn’t have thought of doing that on the first film.”

Besides giving the robots more personality and emotion, Michael Bay also took the gloves off for the fight sequences, making them more violent. This culminates in a scene in which Bumblebee tears another robot to pieces.

Scott Benza was responsible for putting together the team of animators, some of whom were hired specifically for this project based on their skills and interests. Animators with strong skills in animating animals worked on Ravage, a cat-like Decepticon. Tech-savvy animators took care of the transformation shots. Those working on Devastator were given more powerful computers, as the giant Decepticon required 16 GB of RAM. Formed from seven large vehicles, the enormous machine consists of 52,632 parts and around 13 million polygons.

To handle the large robots and their many individual parts, ILM created a production pipeline with multiple resolutions. On-screen buttons let the animators switch the resolution from 25K or lower up to 1,300K per frame. Each individual part of a robot could be selected, viewed and edited at the desired resolution. At the lowest resolution, even Devastator could be moved in real time.
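Per-part resolution switching of this kind can be sketched in a few lines of Python. This is a minimal illustration assuming each part stores a polygon count per resolution level; the class `PartLOD`, the helper functions, the level names and the polygon counts are all hypothetical, and the article does not say how ILM’s pipeline was implemented.

```python
from dataclasses import dataclass


@dataclass
class PartLOD:
    """One robot part stored at several resolutions (hypothetical class)."""
    name: str
    polycounts: dict  # level name -> polygon count at that level
    level: str = "proxy"

    def set_level(self, level: str) -> None:
        # Switch only this part; every other part keeps its own level.
        if level not in self.polycounts:
            raise ValueError(f"unknown level {level!r} for part {self.name}")
        self.level = level

    @property
    def active_polycount(self) -> int:
        return self.polycounts[self.level]


def set_all(parts, level: str) -> None:
    """Switch every part at once, e.g. to 'proxy' for real-time playback."""
    for part in parts:
        part.set_level(level)


def scene_polycount(parts) -> int:
    """Total polygons the viewport has to draw at the current settings."""
    return sum(part.active_polycount for part in parts)


if __name__ == "__main__":
    # Illustrative numbers only, not ILM data.
    arm = PartLOD("crane_arm", {"proxy": 500, "medium": 20_000, "full": 250_000})
    head = PartLOD("mixer_head", {"proxy": 300, "medium": 15_000, "full": 180_000})
    parts = [arm, head]
    set_all(parts, "proxy")
    print(scene_polycount(parts))  # light enough for interactive work
    head.set_level("full")         # inspect just the head in full detail
    print(scene_polycount(parts))
```

Keeping the level on each part, rather than as one global switch, is what lets an animator view a single component in full detail while the rest of a huge character stays light enough to move interactively.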

Devastator resembles a gorilla with an open stomach surrounded by tooth-like components. He assembles himself brutally, one vehicle after another, starting with a mining excavator. One of his arms is made from a crane, the other from a kind of shovel excavator. His legs were originally a rubbish lorry and a bulldozer. A second rubbish lorry forms his torso, and a cement mixer becomes his head, into which he uses a vacuum to suck up everything in his path. “There is one shot in which Devastator moves towards the camera. If you wanted to render that one shot at full resolution on a home PC, it would take three years,” says Jason Smith, Digital Production Supervisor.

Michael Bay worked directly with the animators at ILM to design camera moves for the animated sequences and to contribute his own ideas. “He was always behind the camera, whether on set or at ILM,” says Benza. At ILM, Bay operated a virtual camera on the studio’s motion capture stage to film the animated characters. Just as he improvised with the actors on set, at ILM he improvised with the animated characters and the animators, and he sometimes used the animators’ ideas in the film. After ILM had choreographed a huge fight between Optimus Prime and Megatron in a forest, Bay invited Benza and Farrar to the location, a forest in New Mexico, to supervise the plate photography using their previs.

For this sequence, the effects crew created CG trees in post-production to match the location, which allowed the robots to crash into the trees and break branches. Each branch was a collection of simple objects strung together. The technical directors ran a rigid-body simulation on these objects, and the result drove the high-resolution model.

Size matters

With three times as many robots, each significantly more complex, the demands on ILM’s mass storage were very high. In addition, some scenes were shot for IMAX. The studio normally renders at 2K resolution, but the IMAX scenes, at 4K, have eight times as many pixels. “We had more compositing work in 4K,” says Smith. “The compositing artists spent a lot of time zooming in on individual frames.”

The IMAX shots were composited in Nuke, and Bay cropped them for standard projection. “You definitely want to see the film in IMAX,” adds White.

IMAX also changed how the effects crew worked. In the past, for example, sandstorms were created using instanced particle-based sprites. At 4K, the crew had to rely on volumetric simulations to create enough detail for the sand that Devastator sucks into his cement-mixer mouth.

In one notable scene, Devastator climbs a pyramid in Egypt and knocks its top off as if it were an unloved toy, exposing an important piece of technology inside. As he does so, individual blocks crumble off the sides and fall. To make this shot possible, ILM’s artists used fields and particles to define fracture lines in 3D on a CG pyramid. The simulation team then ran a rigid-body simulation with 150,000 individual parts on GPUs instead of CPUs.

To create the dusty, shifting sand pouring off the pyramid, the TDs built a grey cone and draped a piece of cloth over it with a matching surface simulation. They then used the cloth simulation to drive a particle simulation: once they had approved the shape of the cloth, they ran the particles.

It was ILM’s turn

There are two scenes in the film that were created entirely digitally. One takes place on Nemesis, the planet where the Decepticons come from. The other takes place underwater when the Constructicons and the DocBot rescue Megatron.

“That’s where we were needed,” says White. Bay again used the virtual camera to show the crew the framing he had in mind. Then it was up to ILM to reproduce the look he was used to getting on set. “He uses lots of drops, smears and fog,” adds White. “So we added all that to the shots with The Fallen.” The Fallen is a creature that lies on its mechanical back and looks like a cousin of the Alien.

For the underwater scene, the TDs paid special attention to how light behaves underwater. For example, they added dirt to the water to create realistic reflections of the light beams.

“There are key scenes in every film,” says White. “In this film, every sequence has key scenes.”

Digital environments

In addition to the robots, ILM created digital environments. “We needed big backdrops for our big robots,” says Farrar. Although Bay filmed in Egypt, Jordan and other locations over a six-month period, the Digimatte department still had its hands full. At some locations, it would have been too difficult or too expensive to bring in all the equipment for a particular shot. A good solution was for the digital artists to recreate these locations by applying projection maps to low-resolution 3D geometry. Bay could then “fly” through these virtual sets with his virtual camera, which became the most efficient way to create the shots. “That’s a good offer to make to the director,” says Farrar.
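Projection mapping generally works by projecting a photograph back through the camera that took it onto simple geometry, so each vertex receives the texture coordinate of the pixel it lands on. The Python sketch below assumes an idealised pinhole camera with no rotation, looking down the -Z axis; the functions `project_to_uv` and `project_mesh` and all camera values are hypothetical and say nothing about how ILM’s Digimatte department actually set up its projections.

```python
def project_to_uv(point, cam_pos, focal_length, sensor_width, sensor_height):
    """Map a 3D point to (u, v) texture coordinates in a photo.

    Assumes a pinhole camera at cam_pos looking down the -Z axis with no
    rotation; focal length and sensor size share the same unit (e.g. mm).
    Returns None if the point is behind the camera or outside the frame.
    """
    # Point relative to the camera.
    x = point[0] - cam_pos[0]
    y = point[1] - cam_pos[1]
    z = point[2] - cam_pos[2]
    if z >= 0:
        return None  # behind (or level with) the camera
    depth = -z
    # Perspective divide onto the image plane (same units as the sensor).
    x_img = focal_length * x / depth
    y_img = focal_length * y / depth
    # Normalise to [0, 1] texture space, origin at the lower-left of the frame.
    u = x_img / sensor_width + 0.5
    v = y_img / sensor_height + 0.5
    if not (0.0 <= u <= 1.0 and 0.0 <= v <= 1.0):
        return None  # outside the photographed frame
    return (u, v)


def project_mesh(vertices, **camera):
    """Assign a projected UV (or None) to every vertex of low-res geometry."""
    return [project_to_uv(v, **camera) for v in vertices]


if __name__ == "__main__":
    camera = {"cam_pos": (0.0, 1.7, 0.0), "focal_length": 35.0,
              "sensor_width": 36.0, "sensor_height": 24.0}
    # Four corners of a hypothetical low-res wall 20 units in front of the camera.
    wall = [(-5.0, 0.0, -20.0), (5.0, 0.0, -20.0),
            (5.0, 6.0, -20.0), (-5.0, 6.0, -20.0)]
    for vertex, uv in zip(wall, project_mesh(wall, **camera)):
        print(vertex, uv)
```

Because the photo supplies the detail and the geometry only supplies parallax, a virtual camera can move some way from the original camera position before the simple geometry gives itself away.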

Even at locations where Bay could film, the crew took enough digital photos to recreate them digitally, in case Bay wanted to change anything in post-production, which he did from time to time in collaboration with the effects crew.

“Working with Michael is always a lot of fun,” says Benza. “His films are the hardest but also the most enjoyable.”

And they are also the biggest and most brutal. Where else can you watch heavy robots made up of thousands of parts smash each other to pieces? And if ILM has done its job well, the viewer will believe every minute of it.