As a chance find at IBC, we came across something that could be really useful: “intelligent asset management” that doesn’t just rely on you having tagged everything correctly, but also recognises what is happening in the video – and, thanks to developments in recent years, can even search for moods, concepts or spectrums without knowing the exact term. It’s similar with audio: search for “The person said they’re in Bielefeld!” and CaraOne looks not for the exact text but for the statement, even if it is only implied.

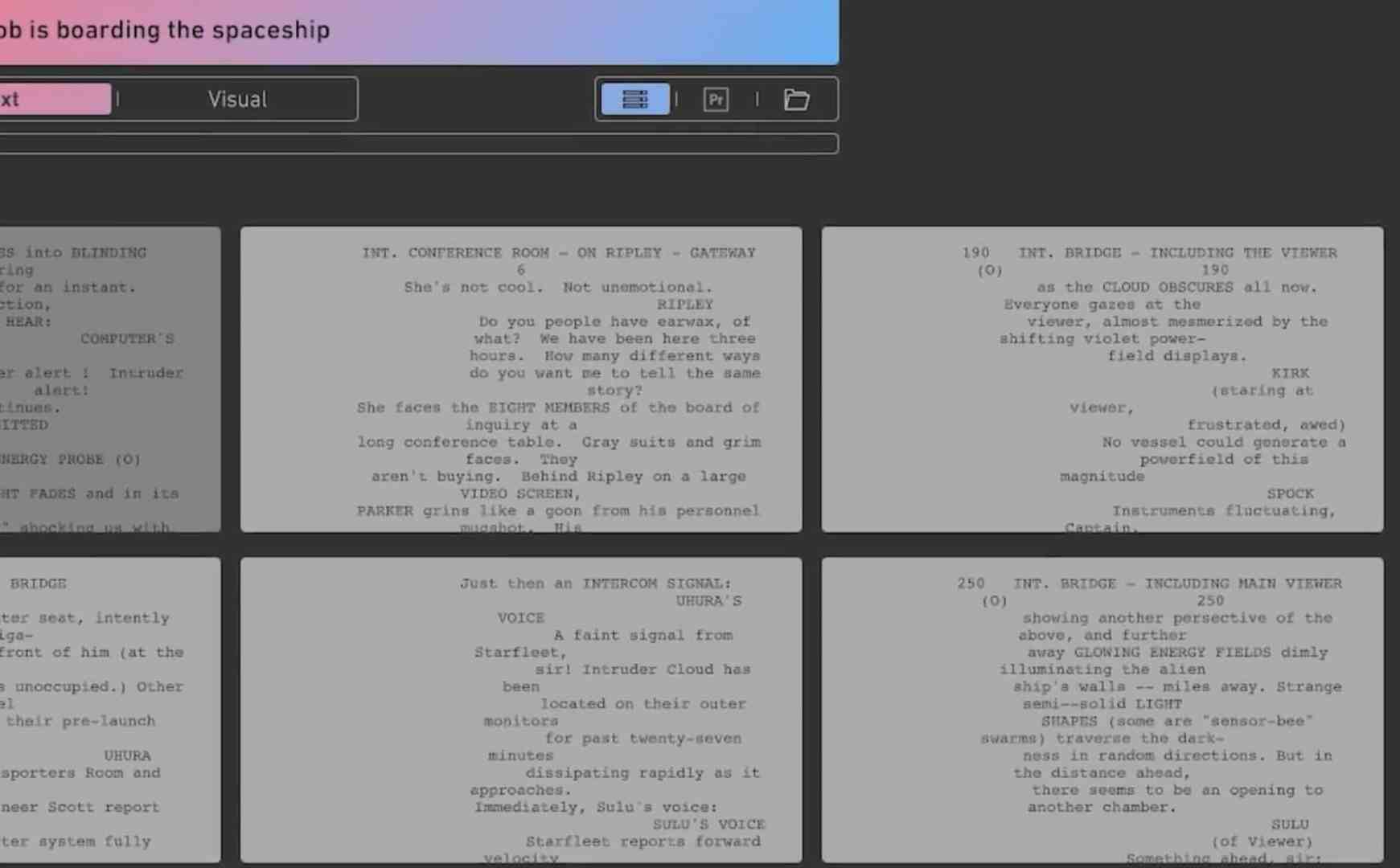

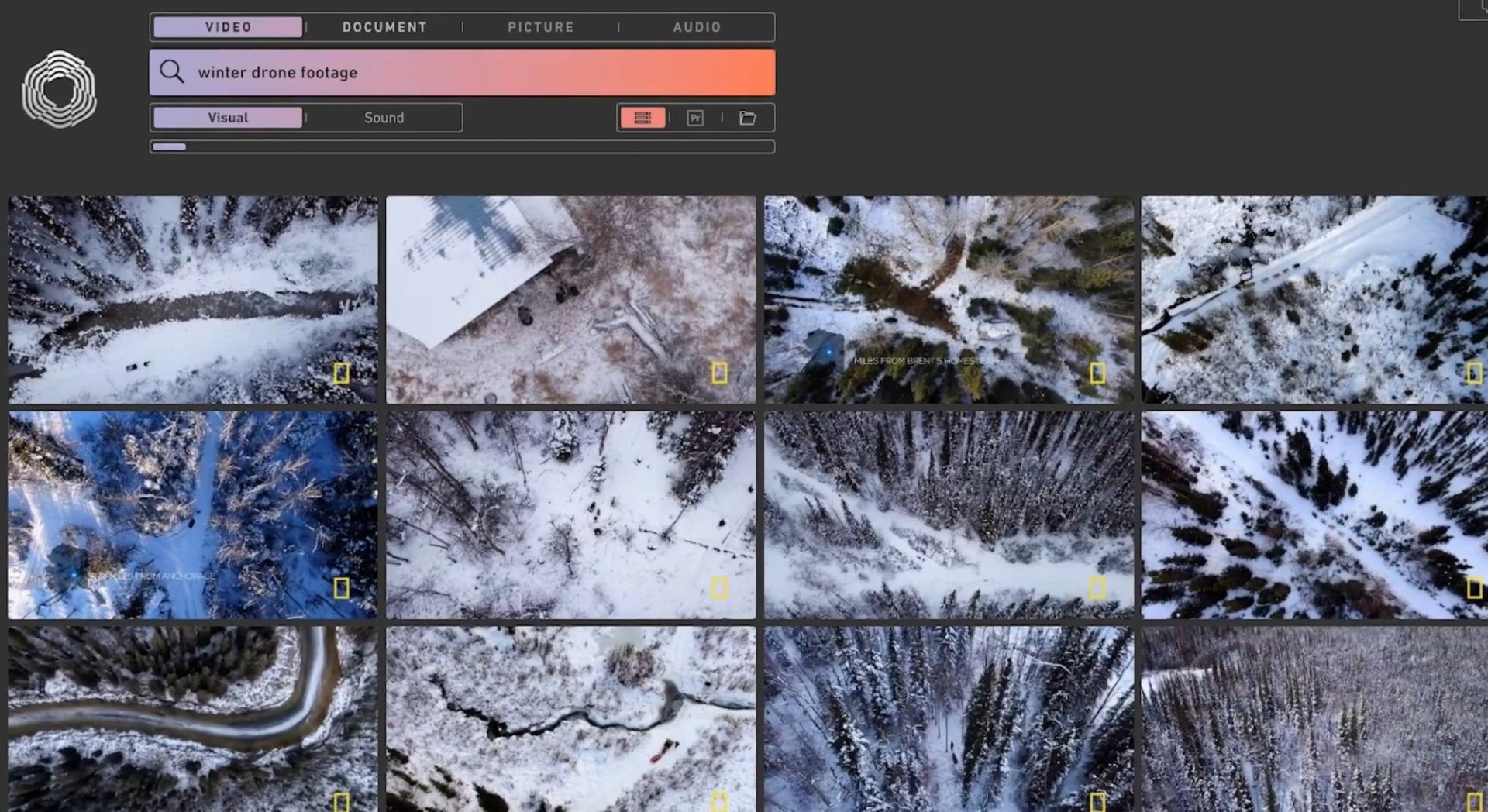

That’s the first step – a working search function for video in 2024. But there’s more: CaraOne searches not only the picture, but also the soundtrack, audio files (ambience, sound bites and so on), documents, PDFs, images – and whatever else accumulates in a project’s footage folder. All of this is made fully searchable – and once you’ve got that far, what else can you do with the data?

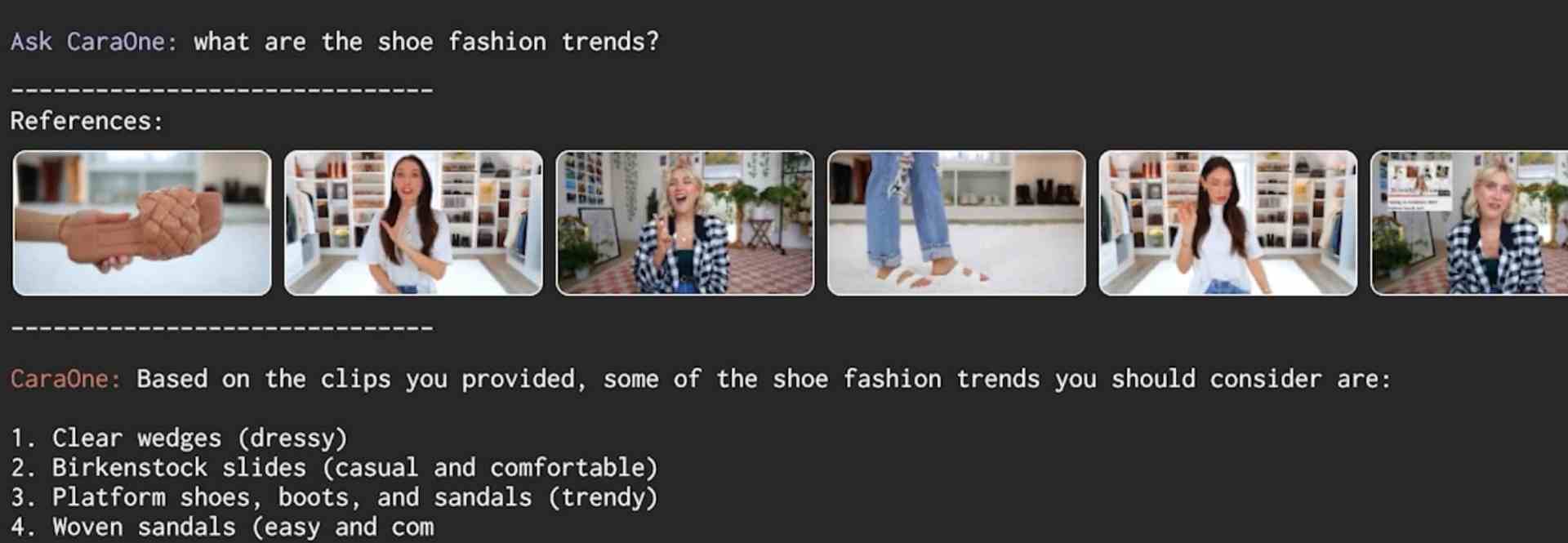

Among other things, you can run a kind of ChatGPT over your own footage – and chat with the CaraOne assistant about what is actually happening in it and what the conclusion of the interviews is. This doesn’t replace the editor or researcher, but anyone producing “content” on an assembly line will appreciate it.

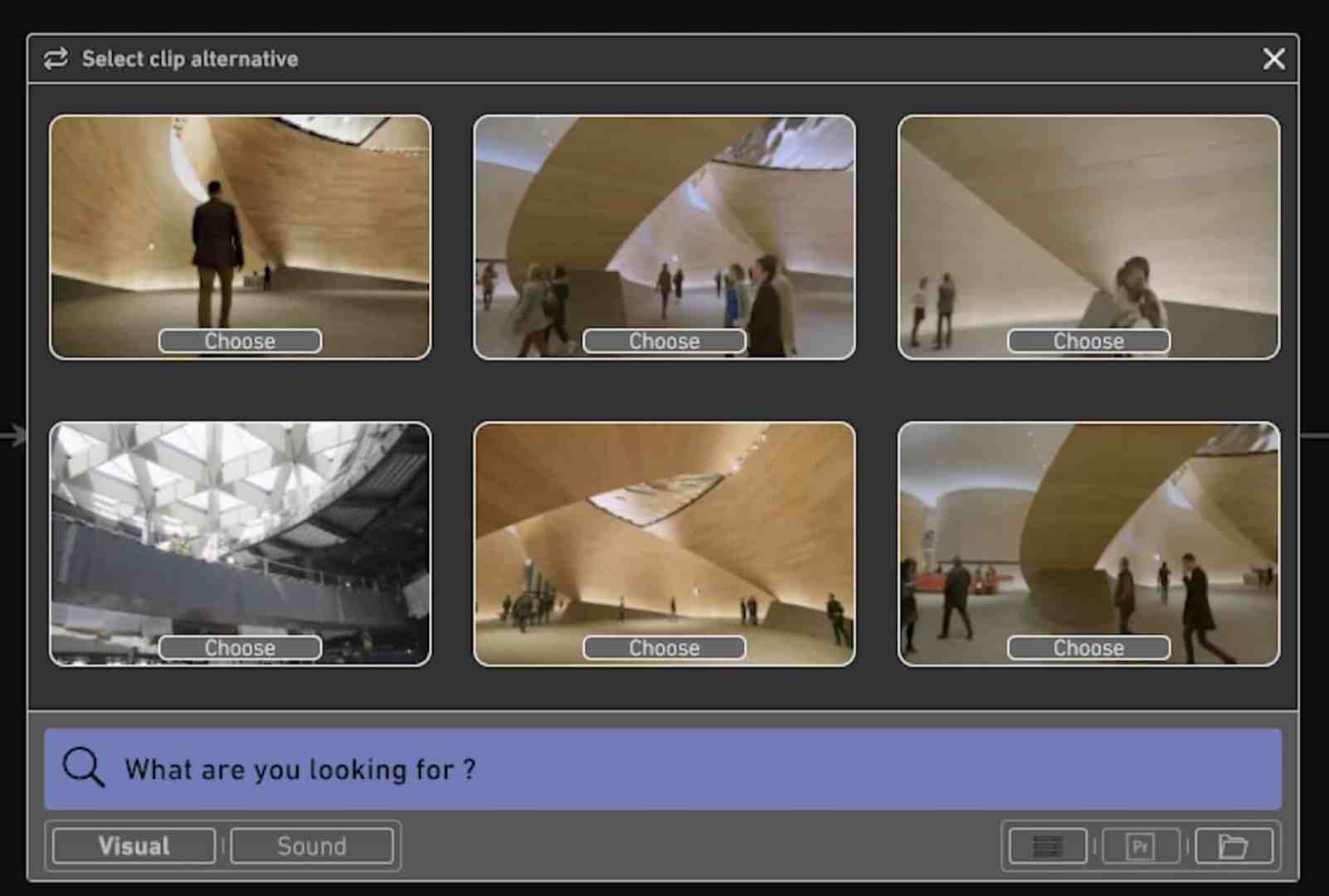

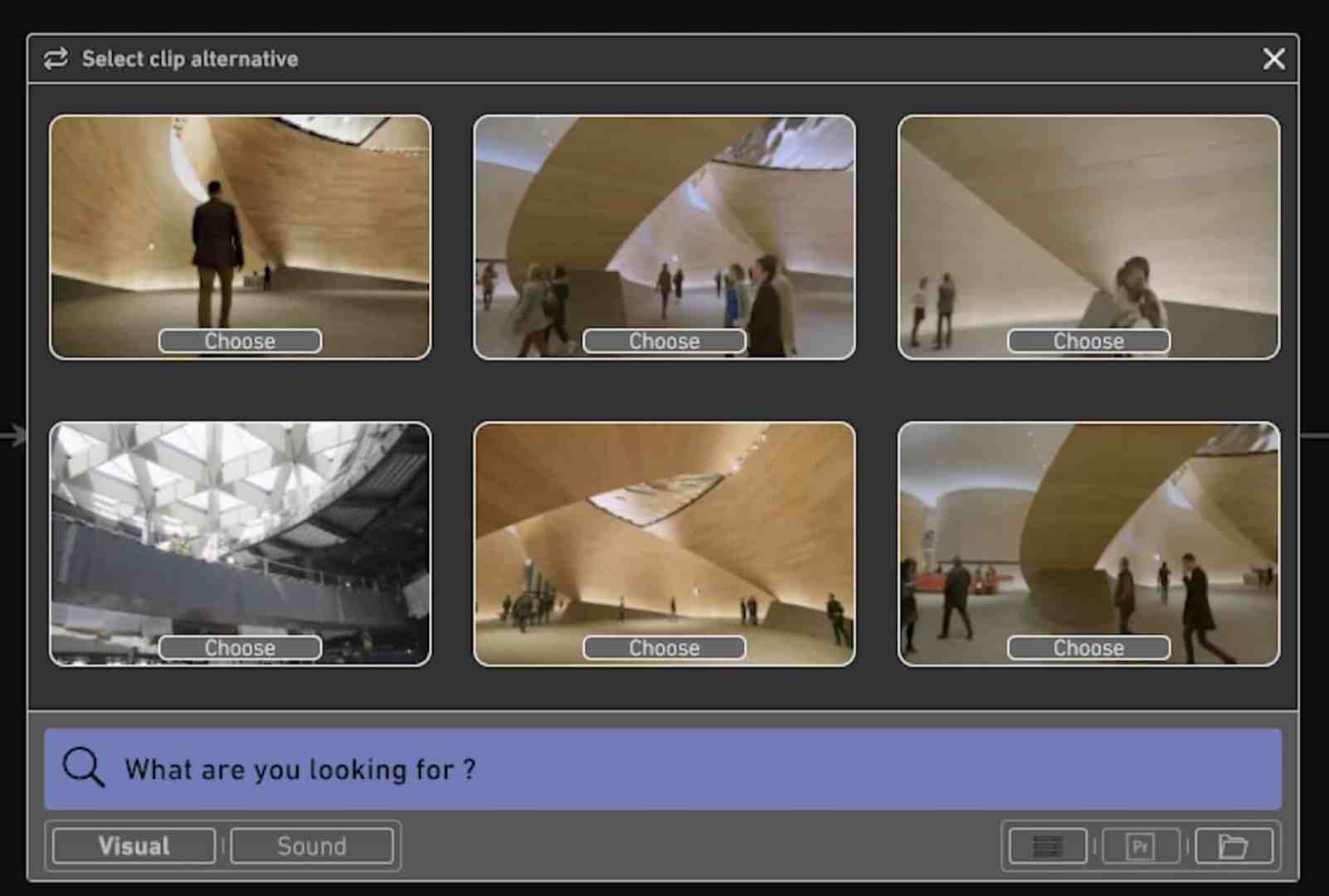

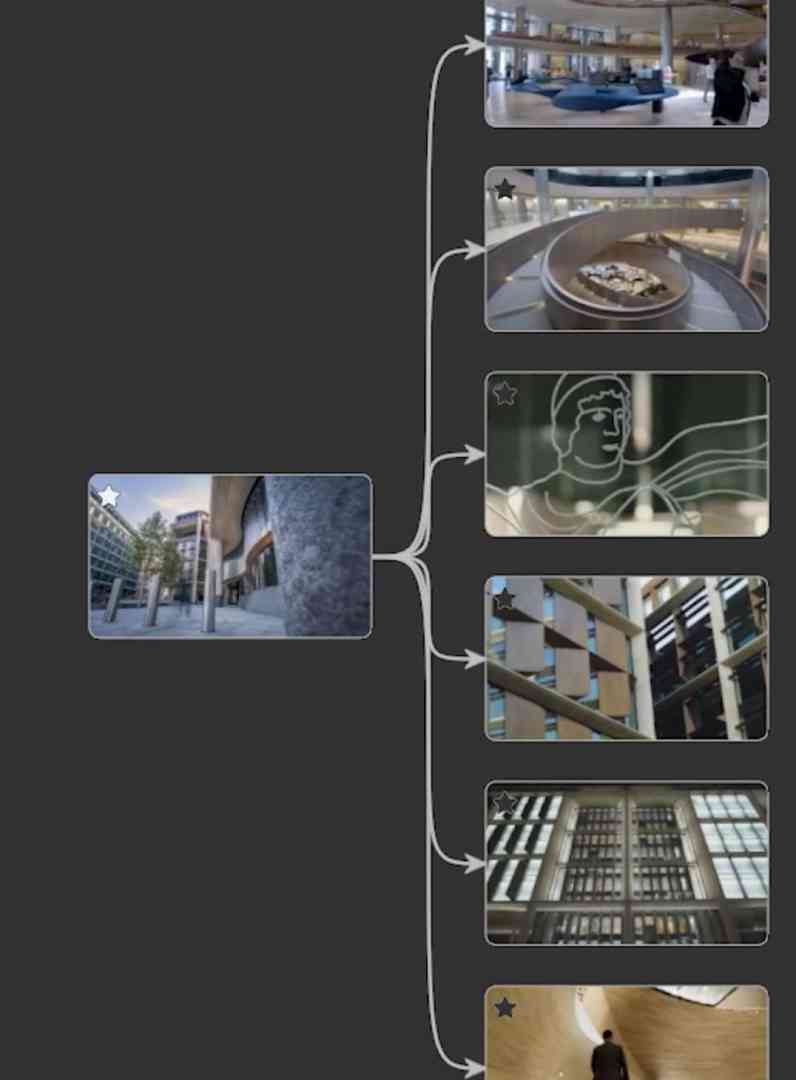

Speaking of assembly lines: “Predictive Editing” goes in the same direction and suggests clips that could follow one another – you select them, put everything together and export the result to your editing software. The clips are assembled in a simple, solid interface rather than in a timeline with laggy, thumbnail-sized previews.

As I said, it doesn’t replace the brain, but it does make collating the individual parts quicker, easier and no longer dependent on whether everyone has set their keywords correctly, everything is in the right folder and so on.

We asked Eddi Weinwurm – the CEO of “Obvious Future”, formerly of FlavourSys – what happens next. You can find his blog on Medium at @weinwurm, and if you want to see what Cara One looks like right away, go here: caraoneai.com

DP: Hello Eddi, ObviousFuture presented CaraOne, a revolutionary AI tool for media search, at the NAB ..

Eddie Weinwurm: AI. I can’t hear that word anymore!

DP: Why? You’re the CEO of a company that develops AI for the media industry?

Eddie Weinwurm: The endless BS bingo … In 2022 it was still “cloud” – or even “blockchain” – now it’s “AI”. Bingo! No, honestly, it’s a bit annoying. It feels like every second person retrained as an “AI expert” last year. And every second product now has an AI appendix attached to it, whether it makes sense or not. The main thing is to have a buzzword on the packaging!

DP: If not “artificial intelligence”, what else should we call it?

Eddie Weinwurm: For me, “artificial intelligence” is just a stupid term. I want something that is so intelligent that it doesn’t feel artificial. My washing machine, for example, is pretty “artificially intelligent”. One wrong press of a button and the washing shrinks to half size. Its intelligence is very artificial, I speak from experience!

In principle, I believe that technology and product should be considered separately. And in a year or two, AI will be built into every single thing and then the buzzword will make about as much sense as saying: CPU smartphone. Sure, there’s a CPU inside, but that’s to be assumed.

And the technology is ML, i.e. machine learning, or AI for all I care. But what is the product then? Of course, it’s easy to say: “Now new, with AI!” and that’s it. But I actually expect a product that is so intelligent that it doesn’t feel artificial or contrived, but acts as naturally and “humanly intelligent” as possible.

And that is precisely our goal with CaraOne: to create an intelligent assistant for media production. Of course, this is based on state-of-the-art ML technology. But ultimately it’s about the user experience and added value.

DP: So what can CaraOne do that my neatly maintained File & Folder structure can’t?

Eddie Weinwurm: A picture is worth a thousand words, and a film “speaks” between 24 and 60 pictures per second. There is so much more information in a clip than a folder or file name could ever express. And even if I maintain a database and give each clip 10 tags, I still miss the thousands of others. A dog sleeping in a green meadow, for example, is more than just “meadow”, “dog”, “sleeping”. It is also an image of a very relaxed, peaceful situation – if even the dog is asleep, then there is nothing to worry about. With CaraOne, you can access all this additional information contained in each clip. A customer at the supermarket checkout, for instance, is something I would get as a result if I searched for “inflation”, because of course that concept is in the image too.

Our users love CaraOne because they can search for both concrete concepts like “an overtaking manoeuvre with an oncoming car” and abstract concepts like “a dangerous situation”, which would then show exactly the same thing. This is most popular in non-scripted formats, especially wherever there is a lot, sometimes far too much material. For example, documentaries, reality, shows, but also in trailer production. When I have two hours of film and want to quickly find the best, most beautiful or most emotional scenes. Because CaraOne really understands the content of the clips, the software can also find such scenes very quickly.

In addition to the visual understanding, there is also the understanding of the content of the spoken word. For example, I’m editing something where I’m accompanying a protagonist on a journey through Europe – and now I want to locate her in England. Ideally with a sound bite in which she says herself that she is in England. A search with CaraOne for “I’m in England” would find, for example, a subclip in which our protagonist lolls in bed and says, “I’ve just woken up in London!” – no word is identical, but the meaning is the same. That is very practical: we are good at remembering the content of conversations, but rarely the wording.

This sense-based search is useful for all formats in which I have many or very long interviews. There’s no need to decipher handwritten notes or search through 30 minutes of interviews (or press conferences), you can find what you’re looking for immediately.
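The interview does not say how CaraOne implements this. As a rough illustration only, meaning-based search is commonly built by mapping each sentence to a vector (an embedding) and ranking by cosine similarity instead of matching words. In the sketch below the three-dimensional vectors are hand-made toy values standing in for what a real embedding model would produce, and all names are hypothetical.

```python
import math

def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

# ASSUMPTION: toy embeddings with made-up axes (place in England, food, weather).
# A real system would get these vectors from a trained model.
SUBCLIPS = {
    "I've just woken up in London!": [0.9, 0.0, 0.1],
    "I love pizza and pasta.": [0.0, 0.95, 0.05],
    "It has been raining all week.": [0.2, 0.0, 0.9],
}

# ASSUMPTION: toy embedding of the query "I'm in England".
QUERY_IM_IN_ENGLAND = [1.0, 0.0, 0.0]

def search(query_vec, index, top_k=1):
    """Return the top_k transcript lines whose vectors lie closest to the query."""
    ranked = sorted(index.items(),
                    key=lambda item: cosine(query_vec, item[1]),
                    reverse=True)
    return [text for text, _ in ranked[:top_k]]

best_match = search(QUERY_IM_IN_ENGLAND, SUBCLIPS)
```

The query shares no word with the London line, yet that line ranks first because its vector points in nearly the same direction.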

DP: How long does the scan take? I have a 4 TB disc here full of videos in all possible formats, about 500 hours – how long would that take?

Eddie Weinwurm: Our technology analyses the files at between 5 and 15 times real time on one node. The 500 hours would be processed in around 50 hours. But these nodes can also be scaled: with 10 nodes it would be done in 5 hours. If required, we also offer to move this work to the cloud for processing large archives, where we can scale it almost infinitely. We offer CaraOne as an on-prem and cloud solution.
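The figures in this answer can be checked with a few lines of arithmetic. The sketch below assumes that throughput scales linearly with the number of nodes, which is what the “10 nodes, 5 hours” example implies. The function name is our own.

```python
def processing_hours(footage_hours, speed_factor, nodes=1):
    """Estimated wall-clock hours to analyse the footage.

    speed_factor: multiple of real time per node (the interview quotes 5 to 15).
    nodes: number of nodes, ASSUMED to scale linearly.
    """
    if speed_factor <= 0 or nodes < 1:
        raise ValueError("speed_factor must be positive and nodes at least 1")
    return footage_hours / (speed_factor * nodes)

one_node = processing_hours(500, 10)            # 50.0 hours
ten_nodes = processing_hours(500, 10, nodes=10)  # 5.0 hours
slowest = processing_hours(500, 5)              # 100.0 hours
fastest = processing_hours(500, 15)             # about 33.3 hours
```

The quoted 50 hours corresponds to roughly 10 times real time. Across the full 5x to 15x range, a single node would need between about 33 and 100 hours for the 500 hours of material.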

DP: Can I try it out for my team?

Eddie Weinwurm: We offer test systems via our sales partners (Netorium and DVE as in Germany). We always suggest trying out CaraOne as practically as possible. Because once our “end users” have tried it out, they don’t want to let it out of their hands – and it sells itself ;)

DP: Let’s talk about searching: My files are all nicely indexed – did I go to all that trouble for nothing, or can Cara learn existing data structures “along the way”?

Eddie Weinwurm: Yes, we can integrate connections to existing databases; this is a customised configuration and integration that we offer. In practice, however, it turns out that these keywords are hardly ever used because CaraOne would usually recognise the keywords anyway. So I doubt their value.

DP: And I can link all this to my existing database or MAM/PAM and access it with web interfaces?

Eddie Weinwurm: Yes, a connection is possible from both sides. CaraOne comes with a REST API that is very easy to integrate, so it can be built into existing MAMs or PAMs very quickly. On the other hand, it is of course also possible to integrate these systems into the CaraOne search if they offer a corresponding API that we can address from our side.

Further implementations are of course also possible via our API, so you could quickly write a script that automatically analyses how often a logo can be seen or whether non-FSK16 content can be found somewhere, etc. There are no limits to the imagination here.
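To give an idea of what such a logo script might look like: the CaraOne API itself is not described in this interview, so the sketch below makes no API call. It assumes, purely for illustration, that a search returns a list of hits with a clip name and in/out points in seconds. The record layout, field names and file names are all invented.

```python
from collections import defaultdict

def summarise_logo_hits(hits):
    """Count appearances and total screen time of a logo, per clip."""
    summary = defaultdict(lambda: {"appearances": 0, "seconds": 0.0})
    for hit in hits:
        entry = summary[hit["clip"]]
        entry["appearances"] += 1
        entry["seconds"] += hit["out_s"] - hit["in_s"]
    return dict(summary)

# HYPOTHETICAL search results; a real script would fetch these from the API.
HYPOTHETICAL_HITS = [
    {"clip": "interview_01.mxf", "in_s": 12.0, "out_s": 15.5},
    {"clip": "interview_01.mxf", "in_s": 40.0, "out_s": 42.0},
    {"clip": "broll_07.mxf", "in_s": 3.0, "out_s": 4.0},
]

report = summarise_logo_hits(HYPOTHETICAL_HITS)
for clip, stats in report.items():
    print(f"{clip}: {stats['appearances']} appearance(s), {stats['seconds']:.1f} s on screen")
```

Only the aggregation step is shown. How the hits are obtained depends on the real interface.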

DP: You presented a new product family at the NAB, “IMA”. What is that?

Eddie Weinwurm: (laughs) I love the word IMA! There’s a rumour that I coined the term PAM with Strawberry back in the day. Whether that’s true or not, I don’t know. In any case, about 15 years ago we were in a similar situation to the one we are in today with CaraOne. There were established terms such as MAM, Avid Interplay, etc., but what we had back then simply didn’t fit into any of these categories. If you don’t want to confuse customers, you have to find a new term. Like PAM back then, which, by the way, originally stood for Project Asset Management. Hence, now, IMA: Intelligent Media Assistant. That really is something new.

DP: What is an Intelligent Media Assistant?

Eddie Weinwurm: A tool that supports the user with all its intelligence in many areas of post-production. In other words, it assists. And is intelligent at the same time! CaraOne can be used for intelligent media searches, for example. In other words, what I described earlier. So it doesn’t just stupidly search for metadata or tags in a database, but actually searches in the media itself – just like a human would. Simply search for objects, but also situations and emotions in clips. Or even illustrations for very abstract sentences that don’t describe anything visible. I search for: “The unbearable silence of the world”, for example. And you get the lonely man gazing at the grey sea in the morning mist, as a clip, timecode-accurate with in and out points! And I can search for spoken content by meaning. For example, I remember someone saying in an interview that they “like Italian food”. CaraOne finds the passage where he literally says that he “loves pizza and pasta”. CaraOne finds this because it is simply intelligent enough to understand what something spoken means, even though no word is identical! So it works like a search in MAMs, only very humanly intelligent.

And this intelligence runs through the product. With CaraOne, it starts with research. I can simply chat with the software about my assets, scripts and documents, for example in series production I can ask: “What did Tom say about his childhood trauma?” and get a simple answer – including timecode-accurate references to clips or corresponding dialogues from scripts! Or if I have a long interview with a fashion expert, simply ask: “What are the spring fashion trends?” and then receive a written list of all the trends she mentioned. I can then use this as the basis for my article.

And by the way, these searches are incredibly fast: we search 1000 hours on a single one of our AI nodes in a few milliseconds. On-premise, of course! And finally, and we are really proud of this, we have CaraOne Predict. Because that’s something really new!

DP: Before we come to CaraOne-Predict, what data can Cara read?

Eddie Weinwurm: When it comes to videos, we support virtually all common formats. We can handle all complicated Avid MXFs and AAF formats and structures without any problems, and of course all audio files. We can also search in images and Word documents, as well as PDFs and even PowerPoint slides.

DP: So when we talk about videos: What kind of conditions do they have to fulfil?

Eddie Weinwurm: We are proficient in all common formats and codecs, and we also work with proxy files if necessary, for instance if the original is in the archive. And if we don’t know a format, our sister company HiScale is happy to help us out, as they are the world’s top experts when it comes to video formats and codecs. For example, we recently had a customer who was still using an old version of the Avid Meridian codec in current productions. HiScale built us a decoder within a few days.

DP: You mentioned CaraOne-Predict, so you want to revolutionise video editing itself?

Eddie Weinwurm: That’s right. Which is nothing difficult in itself! Since the introduction of digital editing in the 1980s, not much has changed in the actual video editing process. There is a timeline. Full stop. You fill it with clips, trim around, maybe change a few things and that’s it. However, with CaraOne and the intelligence behind it, we can now finally allow completely new workflows. To understand the idea behind it, you have to ask yourself what a timeline in video editing actually is. And the answer is simple: a timeline is actually nothing more than a “story” that is told visually.

And now for the important bit: You can tell stories in different ways, chronologically or, for example, the end first, then the beginning, and so on. It’s all about the order. You know when you tell a joke but give away the punch line too early and it’s no longer a joke. Some ways of telling a story are better than others! And ultimately, video editing is about nothing other than having the best possible visual story at the end. Because it makes a difference whether I first show a very close-up of an angry face and then place it in a long shot of a bar, or whether I first show the long shot of the bar and then the face. Both have a different effect, a different message.

DP: And how does CaraOne help here?

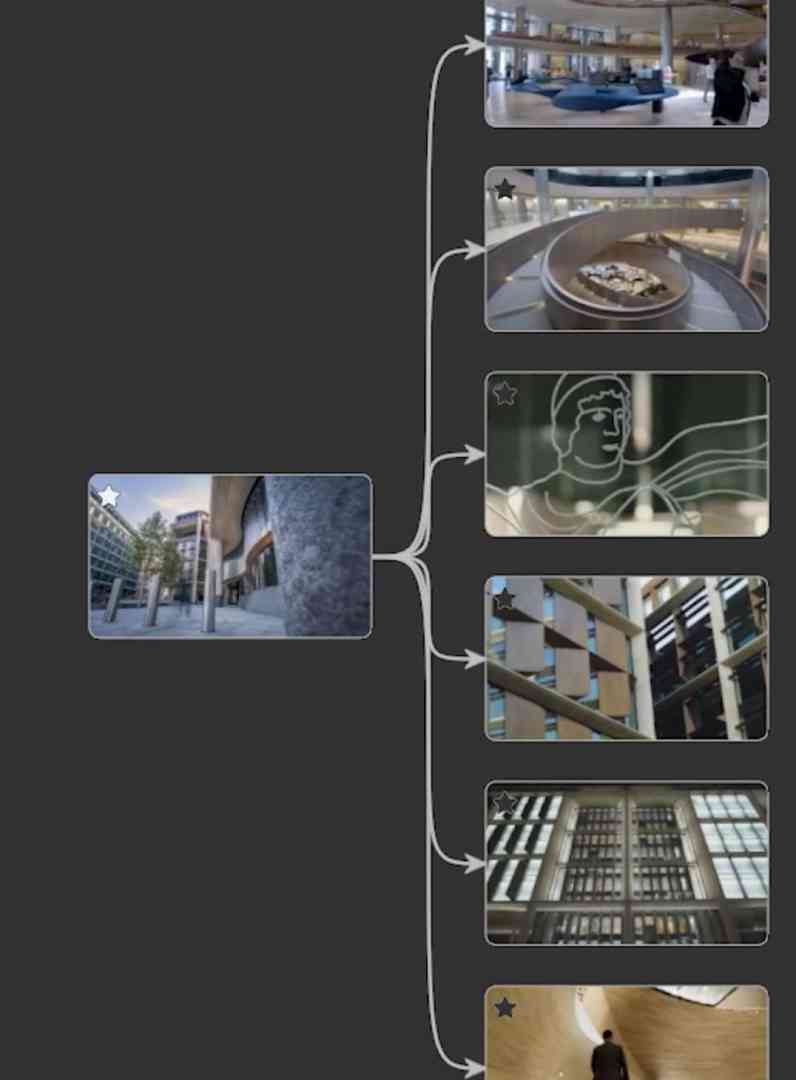

Eddie Weinwurm: Yes, this is where our CaraOne Predict comes into play. For example, we select a clip that we want to start the sequence with, and CaraOne then suggests various suitable clips for how we could continue it. We select one and gradually build up our timeline. Based on the appropriate suggestions, click, click, click.

That’s really great, but what’s really interesting is that I can also go back in the sequence at any time, select any clip and explore an alternative timeline from there. Just like in science fiction. What would the future look like if the Terminator had succeeded? What if I had chosen a different clip instead of this one? The principle of alternative timelines works not only on a large scale but also on a small one, which is where Predict comes into play. For example, I have a clip that establishes us in a library: a long shot of the entire room. Now I can continue in the classic way with a sequence such as “Half-length shot of a bookshelf”, then “Close-up, a visitor takes a book from the shelf”.

But now I can also go back with CaraOne and say: Oh, I want to try out how the sequence might develop if I use “Semi-close-up, student taking notes at a library table” after the long shot instead! And then switch to a close-up of someone writing in a notebook, for example. So just try out an alternative timeline. And when I’m happy with it, simply import the strand of my timeline that I like best directly into Avid, for example.
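One way to picture these alternative timelines is as a tree: every clip can have several possible successors, and each path from the opening clip to a leaf is one “strand”. The sketch below models only that idea. It is not CaraOne’s data model, and the class and method names are our own.

```python
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass(eq=False)
class Shot:
    """One clip in a tree of alternative timelines (illustrative only)."""
    description: str
    parent: Optional["Shot"] = None
    children: List["Shot"] = field(default_factory=list)

    def follow_with(self, description):
        """Add a possible next clip after this one and return it."""
        child = Shot(description, parent=self)
        self.children.append(child)
        return child

    def strand(self):
        """Return the sequence of clips from the opening shot down to this one."""
        path, node = [], self
        while node is not None:
            path.append(node.description)
            node = node.parent
        return list(reversed(path))

# The library example from the interview, with both alternatives.
library = Shot("Long shot of the library")
shelf = library.follow_with("Half-length shot of a bookshelf")
book = shelf.follow_with("Close-up, a visitor takes a book from the shelf")
student = library.follow_with("Semi-close-up, student taking notes at a library table")
notebook = student.follow_with("Close-up of someone writing in a notebook")

chosen_strand = notebook.strand()
```

Going back and trying a different clip simply adds a second branch at that point. The strand you like best is the path you would then export.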

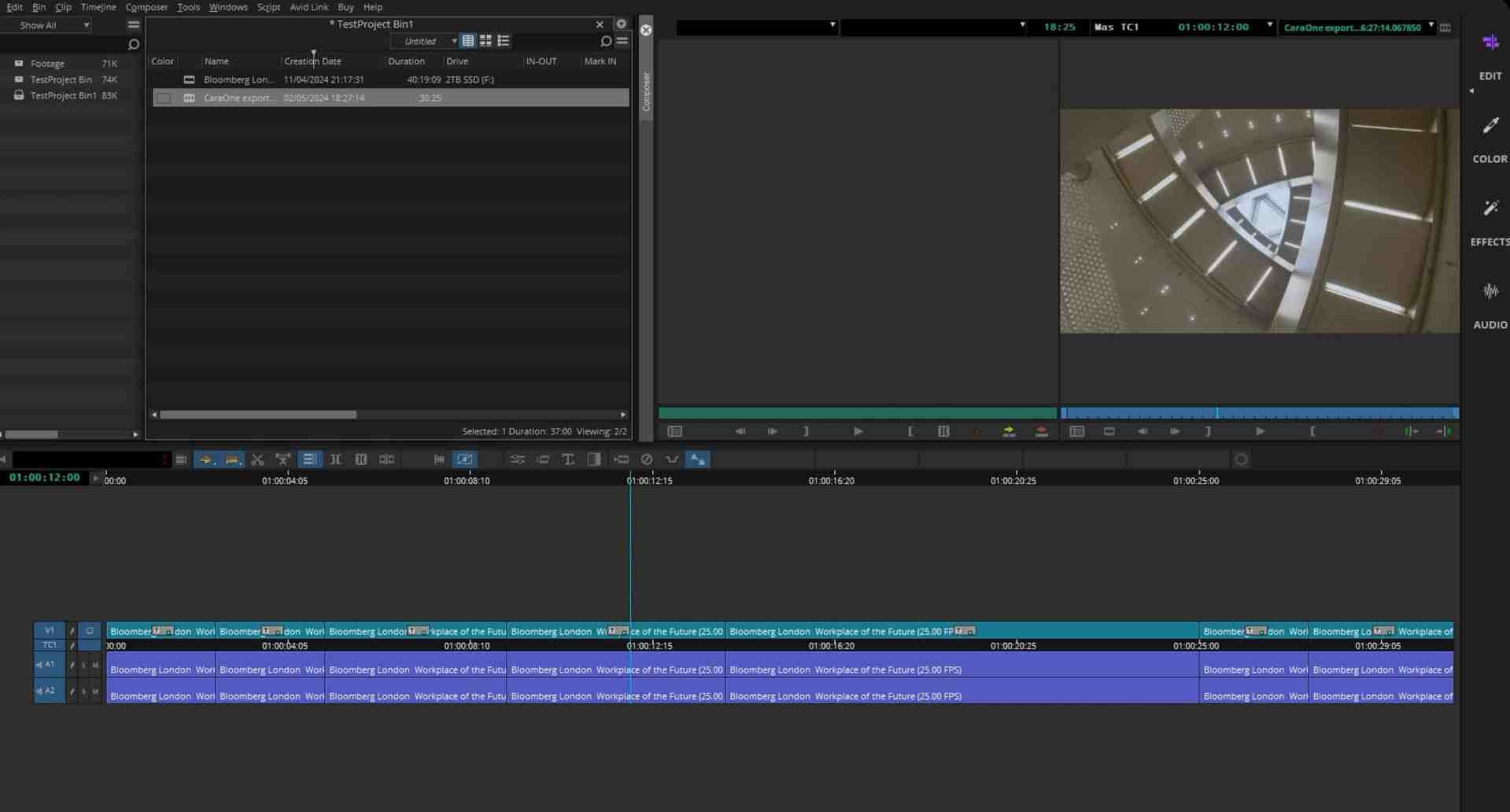

DP: Which EDL formats are supported so far?

Eddie Weinwurm: We don’t write EDLs, but AAFs. These allow the sequences to be imported directly without having to relink them. And this works in various post-production programmes, such as Premiere, because AAF is a standard. Even in Avid, which admittedly cost us a few grey hairs. So the workflow is very simple: click, we create an AAF, you import it, and you have a sequence that you can work with directly.

DP: Do you ultimately want to replace the editor as a person?

Eddie Weinwurm: Not at all. There are already tools that produce visual stories completely automatically based on text. It all sounds great and practical, but anyone who has ever tried them knows that the results are pretty useless. Which is not because of the AI! It’s because of the information, which unfortunately it doesn’t have. Because every editor, cutter and director brings so much more knowledge to the editing suite than just a text! The direction of the medium, the tradition of the company, the visual language of the programme. Or the target audience of the advert. The taste of the customer, the mood of the boss today, and so on and so forth. So these tools are simply too generic in what they produce at the moment. Good for a quick TikTok video, but useless even for a simple YouTuber who wants to have their own recognisable “signature” on their channel.

DP: You say “too generic at the moment”. Well, at the moment. But how do you see the future of the media industry in light of AI technologies like Sora?

Eddie Weinwurm: There has been a long period of denial and suppression of the threat. I remember being on a panel at a conference in Hollywood last year where I was almost “lynched” by my fellow panellists – from the creative side, of all people – because I warned about the threat! A year later, I don’t think anyone would play it down.

Now, I think most people realise that in many areas nothing will stay the way it was. In 10 years or so, our televisions will probably be producing individual programmes just for us. But you still have to differentiate – even though I am one of those sounding the alarm, I think we have to keep things in perspective. Because not everything will be so gloomy.

Of course, on the one hand, mass-produced fiction, for example, will be particularly hard hit. Everything that authors can write on the fly and editors can cut while chatting on WhatsApp on the side will become obsolete. Anything that doesn’t require a high degree of creativity, thought and craft is of course child’s play for ML technology.

But on the other hand, if I want to watch a documentary about Nigeria, I expect to see reality, not AI fantasy! I want to see what it looks like there, not something generated by AI. Or, another example, I want to see the insane stunt – and wonder how someone can be so daring – and not an AI-produced 1620-degree backflip. That’s boring! And of course with the news – I expect anything but an AI fake!

DP: So the non-scripted sector remains relevant despite generative AI?

Eddie Weinwurm: Yes, I’m convinced of that. And islands will also remain in scripted production. I want to see Christoph Waltz in a film by Tarantino, not Vektor 321 on TPU 710. So wherever a certain brand is involved, authenticity will remain. Just like there are still many bakers who sell handmade bread rolls. The mass-produced goods, however, unfortunately come from bread factory machines. And it will probably be the same here.

But the media industry is not the only one affected; ultimately, all areas of the world of work will be affected. Even AI development itself! And just as we couldn’t stop the machines, unfortunately we won’t be able to stop it.

DP: That doesn’t sound very optimistic!

Eddie Weinwurm: No, but I’m by no means without hope! Society urgently needs to create new framework conditions in good time, politicians need to think about it, there are many clever approaches – but that is certainly beyond the scope of this medium. And, on the other hand, it will fortunately be a while before we get to that point.

DP: So how do you think we should deal with AI?

Eddie Weinwurm: Good question! Should we now refuse all AI applications “Amish-like”? No, I personally think that would be stupid. The only thing that makes sense at the moment is to utilise products with ML technology as much as possible. To build up a head start, to make a name for yourself. Because that’s what it will be about later if you want to stay in the game despite AI.

So if CaraOne enables me to produce content much faster and at better quality, then I should seize this opportunity! To create a “brand” and a head start. Because it’s going to be tight! We, ObviousFuture, are certainly trying to take an ethical approach here. This starts with the selection of training data and extends to product development. Hence the name IMA – Intelligent Media Assistant. And an assistant is only an assistant if it has someone to assist. And this person should exist for as long as possible and be as happy as possible. That is our goal.

DP: And what are you currently developing?

Eddie Weinwurm: We will be releasing CaraOne-Predict at the IBC. And I can only say that the Predict model, as we showed it for the first time at NAB, has improved by a few more dimensions. I can’t give too much away, but there will be a few features that I think will cause quite a stir again. For me, it’s always a good sign when the product managers of the “big players” in the industry stop by our stand at the big trade fairs. I just feel sorry for their poor developers, who then have to try to quickly cobble together for themselves what we have invented. And then at the next trade fair we’re a few miles further down the road again, while they’ve only just implemented a prototype-like functionality and so on. But it pays off to have started with such a big head start and to have a lot of really clever minds in the team – and accordingly we have some nice things in the pipeline again… Prepare to be surprised!