This article (“War of the Worlds”: ET’s bloodthirsty successors) by Barbara Robertson originally appeared in DP 05 : 2005.

Steven Spielberg’s interpretation of “War of the Worlds” will be remembered for several reasons. First, there is the skilful weaving of today’s fears of terror into H.G. Wells’ 1898 story of an alien invasion of Earth. Then there are the pleasing box office sales and the performances of megastar Tom Cruise, young Dakota Fanning and the cryptic Tim Robbins. Equally impressive are the images by cinematographer Janusz Kaminski – and finally the photorealistic visual effects by eight-time Oscar winner Dennis Muren and Oscar nominee Pablo Helman, which were created at Industrial Light & Magic (ILM).

In the VFX scene, the film will also be remembered for the speed with which ILM achieved such high-quality effects. The effects studio itself, on the other hand, will probably be remembered above all for the fact that these effects were created using its brand-new third-generation pipeline. “‘War of the Worlds’ was a test run,” admits Muren.

Restrained use of effects

Shooting of the first sequences began in November 2004 and allowed ILM to shoot one of the most difficult shots – the first appearance of the alien spaceships – in December. Shooting finished at the beginning of March and Paramount released the film in cinemas at the end of June. “We finished half of the finals in the last four weeks,” says Muren. All in all, ILM created around 400 shots, many of which included models and miniatures.

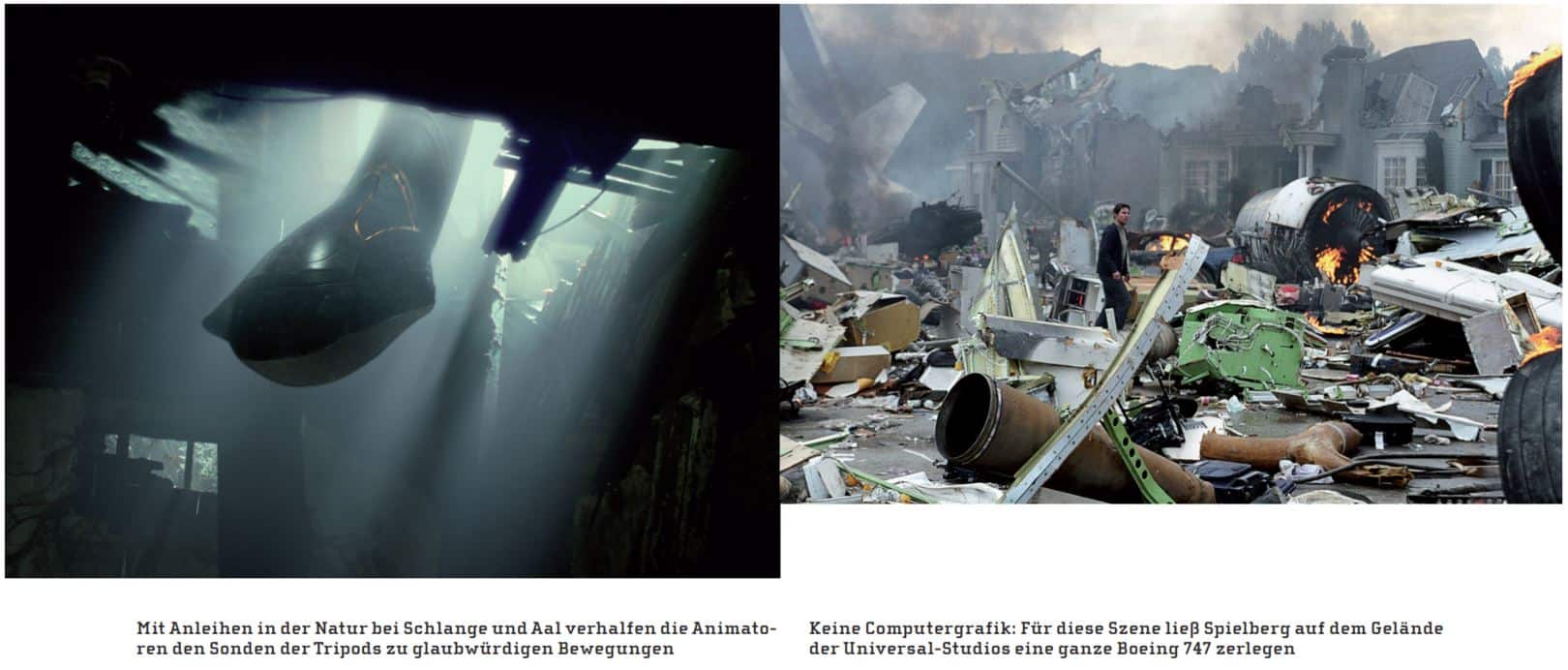

“We could have got away with computer graphics, but the explosions wouldn’t have looked as real,” reveals Muren. “So we built a bunch of models and miniatures. But we only inserted them into the shots where we needed them. We endeavoured to do as much on location as possible so that the effects interfered with the concept of the picture as little as possible. Steven was aiming for an organic look for the whole film. Everything is dirty and dusty, and the camera movements resemble the amateur footage from 11 September. We didn’t want to impose the constraints of post-production on the shoot.”

Animatics as a high-precision shooting template

The pre-visualisation helped to make this possible. Daniel D. Gregoire from JAK Films, who had worked with George Lucas on “Star Wars: Episode III”, created animatics that corresponded to the real locations and actual camera lenses. “Once they had the locations, they probably spent two or three months at the beginning checking the shots with animatics,” reports Muren. “It was a pre-visualisation like I’ve never experienced before. Once we were on location setting up a shot, Steven would ask Dan what lens the animatic was shot with. Dan would say, for example, ‘It was a 21, and the camera was 8.20 metres off the ground and tilted six degrees down.’ And then we started exactly like that. That gave Steven the confidence to shoot exactly what he wanted on set, knowing that he had already gone through four or five alternatives in the previs.”
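To give a feel for what the three numbers in Muren’s example pin down, here is a minimal sketch that treats the lens as an ideal pinhole. The lens, height and tilt come from the quote; the filmback width is an assumption (the article does not name the film format), as is the flat ground, and the class and method names are purely illustrative.

```python
import math
from dataclasses import dataclass


@dataclass
class CameraSetup:
    focal_length_mm: float            # "a 21" in the quote
    height_m: float                   # 8.20 metres off the ground
    tilt_down_deg: float              # tilted six degrees down
    filmback_width_mm: float = 24.89  # ASSUMPTION: format is not stated in the article

    def horizontal_fov_deg(self) -> float:
        """Horizontal angle of view of an ideal pinhole lens."""
        half_angle = math.atan(self.filmback_width_mm / (2.0 * self.focal_length_mm))
        return math.degrees(2.0 * half_angle)

    def ground_hit_distance_m(self) -> float:
        """Horizontal distance at which the optical axis meets flat ground."""
        return self.height_m / math.tan(math.radians(self.tilt_down_deg))


setup = CameraSetup(focal_length_mm=21.0, height_m=8.20, tilt_down_deg=6.0)
print(f"horizontal angle of view: {setup.horizontal_fov_deg():.1f} degrees")
print(f"optical axis meets the ground after {setup.ground_hit_distance_m():.1f} m")
```

With lens, height and tilt fixed, the framing on location is largely determined, which is why the crew could start “exactly like that”.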

ILM started with a team of 50 people working on the first sequences that came in. Over the last five weeks, the team grew to 179 people. Most of them came in about eight to ten weeks before the end, when work on “Episode III” ended. The schedule only allowed for twelve weeks of post-production, which was by no means easy.

Sophisticated compositing

“In terms of compositing, this was the most complicated film I’ve ever seen,” explains compositing supervisor Marshall Krasser, whose credits include supervising the compositing on “Lemony Snicket”, “Van Helsing”, “Hulk”, “Star Wars: Episode II” and “Pearl Harbor”. “Making the connection with the source material was a challenge.”

Krasser started with five compositors, but ended up with 45. With as much of the film shot on location as possible, the compositors had to blend digital elements, matte paintings and footage of models and miniatures into images full of dust, smoke and debris. For some sequences, they took the footage, literally took it apart and then put it back together again.

For example, to create the sequence in which the Tripod, the 45-metre-high alien fighting machine, unscrews from the ground in the middle of Ray Ferrier’s (Tom Cruise) shabby neighbourhood, the compositors started with the live-action footage. In this scene, all the cars have come to a standstill. Numerous lightning bolts have struck the ground in the centre of an intersection. A crowd gathers around the resulting hole. The pavement begins to crack open, the monster slowly rises to the surface and everything on and around the junction begins to shift. A simulation by ILM created the cracks, the compositors created the shifts.

To tear apart the church seen in the scene over several frames of the sequence, the team combined photographs of miniatures in various stages of destruction with shots of the church projected onto the geometry. “We cut everything out of the image that could move and placed it on separate parts of the geometry,” says Krasser: “Telephone poles, traffic lights, stop signs, street lamps and buildings.” The team used ILM’s camera projection software Zenviro and Comptime, the studio’s own compositing software. Zenviro makes it possible to project textures, which can consist of photos or moving images, onto 2D maps or 3D objects in a virtual environment.
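The principle behind camera projection can be sketched in a few lines. This is not Zenviro’s code, which the article does not show, but a generic pinhole projection: each vertex of a piece of geometry is given the image coordinate at which the original camera saw it. The function name, the filmback size and the sample wall are all hypothetical.

```python
from typing import Optional, Tuple

Point3 = Tuple[float, float, float]
UV = Tuple[float, float]


def project_to_uv(point_cam: Point3, focal_mm: float,
                  film_w_mm: float, film_h_mm: float) -> Optional[UV]:
    """Map a point in camera space (camera looks along +Z) to image UVs in [0, 1].

    Returns None if the point lies behind the camera or outside the frame.
    """
    x, y, z = point_cam
    if z <= 0.0:
        return None
    # Perspective divide: position on the filmback in millimetres.
    x_film = focal_mm * x / z
    y_film = focal_mm * y / z
    # Normalise so that the frame centre lands on (0.5, 0.5).
    u = x_film / film_w_mm + 0.5
    v = y_film / film_h_mm + 0.5
    if 0.0 <= u <= 1.0 and 0.0 <= v <= 1.0:
        return (u, v)
    return None


# Hypothetical church wall, 20 m in front of the camera (metres, camera space).
church_wall = [(-2.0, 0.0, 20.0), (2.0, 0.0, 20.0), (2.0, 3.0, 20.0), (-2.0, 3.0, 20.0)]

# Bake the UVs once from the original, unmoved geometry.
baked_uvs = [project_to_uv(p, 21.0, 24.0, 13.0) for p in church_wall]

# Later the wall is animated; the baked UVs stay, so the photo moves with it.
shifted_wall = [(x + 0.3, y - 0.1, z) for (x, y, z) in church_wall]
```

Because the texture coordinates are fixed at the moment of projection, a telephone pole or wall cut out of the plate keeps its photographed surface while the geometry underneath is moved.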

Painters – or anyone else – can retouch these images based on what they see through a virtual camera that follows the camera from the source image. Once the elements were connected to the geometry, the compositors moved them as if they were being influenced by the rising tripod. They animated frame by frame. “It’s amazing how that little bit of extra effort makes the shot more believable,” Krasser praises.

Similarly, the compositors combined source footage, miniatures and 3D objects to destroy a freeway that runs through Ferrier’s neighbourhood on elevated pillars. “The upper part of the bridge was 3D geometry that was bent and animated,” Krasser explains, “but the concrete pillars were miniatures. The exploding petrol station and the houses being blown apart by a tanker truck were also miniatures. They matched the real houses shot on location so precisely that we didn’t even use the real houses.”

Tripods and aliens as pure CG constructs

The tripods and the aliens (only seen briefly in the film), on the other hand, were created entirely in CG. The tripods are designed to look more like illustrations from Wells’ book than the spaceships from the 1953 film – they look like tanks on long, slender legs. “They were robotic, mechanical and organic with occasional in-between stages, depending on the setting,” explains animation supervisor Randal Dutra. Dutra, who had previously worked with Spielberg and Muren on “Jurassic Park” and “The Lost World: Jurassic Park”, left the VFX industry a few years ago to pursue a career as a painter. Muren convinced him to return for this film. He led 19 animators at ILM who worked on 123 character shots, some with multiple characters. “We had three months and three weeks to create all the animation,” says Dutra. “Steven used to edit sequences quickly after shooting. The fast pace kept everyone on their toes. Everyone worked quickly, which was great. We were able to maintain focus, goal-orientation and enthusiasm.”

In addition to the task of making the tripods move believably on their skinny legs, which actually seem unsuitable for carrying the heavy “head”, the animators developed nineteen tentacles that served as probes. In one scene, one of these probes glides through a cellar where Ray Ferrier and his daughter are hiding. “We animated it as a cross between a snake and an eel gliding through the water,” says Dutra. “The whole trick is getting the intervals of movement right.”

For shots in which several tentacles appear like medusae, the animators got help from the technical directors (TDs), who developed procedural animations for the background tentacles. For the tripods’ headlights, the compositors created an effect reminiscent of wavy, gaseous, steamed-up glass. “We designed a two-dimensional fractal noise pattern,” explains Krasser, “marked some tracking points on the head of the tripod and fixed our effect on the tripod using the animation data we obtained. As a result, the animated effect moved with the tripod and remained attached to it.”
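Krasser’s headlight effect combines two ideas: a two-dimensional fractal noise pattern and a tracking point that the pattern is pinned to. The sketch below illustrates both with simple value noise summed over several octaves. It is an assumption-laden illustration, not ILM’s implementation: the hash constants, the octave settings and the way time scrolls the pattern are all made up for the example.

```python
import math
from typing import Tuple


def _lattice(ix: int, iy: int, seed: int = 0) -> float:
    """Deterministic pseudo-random value in [0, 1) for an integer lattice point."""
    n = (ix * 374761393 + iy * 668265263 + seed * 1442695041) & 0xFFFFFFFF
    n = ((n ^ (n >> 13)) * 1274126177) & 0xFFFFFFFF
    n ^= n >> 16
    return n / 2**32


def _smooth(t: float) -> float:
    """Smoothstep fade so the noise has no visible grid creases."""
    return t * t * (3.0 - 2.0 * t)


def value_noise(x: float, y: float, seed: int = 0) -> float:
    """Bilinearly interpolated lattice noise."""
    ix, iy = math.floor(x), math.floor(y)
    fx, fy = _smooth(x - ix), _smooth(y - iy)
    v00, v10 = _lattice(ix, iy, seed), _lattice(ix + 1, iy, seed)
    v01, v11 = _lattice(ix, iy + 1, seed), _lattice(ix + 1, iy + 1, seed)
    top = v00 + (v10 - v00) * fx
    bottom = v01 + (v11 - v01) * fx
    return top + (bottom - top) * fy


def fractal_noise(x: float, y: float, octaves: int = 4,
                  lacunarity: float = 2.0, gain: float = 0.5) -> float:
    """Sum of octaves: each one has finer detail and lower amplitude."""
    total, norm, amplitude, frequency = 0.0, 0.0, 1.0, 1.0
    for octave in range(octaves):
        total += amplitude * value_noise(x * frequency, y * frequency, seed=octave)
        norm += amplitude
        amplitude *= gain
        frequency *= lacunarity
    return total / norm


def tracked_noise(pixel: Tuple[float, float], track: Tuple[float, float],
                  time: float, scale: float = 0.05) -> float:
    """Evaluate the pattern relative to the tracked point so it sticks to it."""
    dx, dy = pixel[0] - track[0], pixel[1] - track[1]
    # Scrolling along y with time makes the pattern drift (illustrative choice).
    return fractal_noise(dx * scale, dy * scale + time)
```

Because the noise is sampled in coordinates relative to the tracking point, a pixel ten units to the right of the marker gets the same value wherever the marker sits in the frame, which is the sense in which the effect “remained attached” to the tripod.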

Sometimes it doesn’t have to be a shader

For the gas and smoke emitted by the tripods, the compositors combined fog elements – film footage of fog – with two-dimensional fractal noise. “Why write shaders when you can also use real or two-dimensional techniques to create something you’ve never seen before?” asks Krasser rhetorically.

Like their tripods, the aliens also have three legs and each leg has three toes. “I modelled them on red-eyed tree frogs,” reveals Dutra. “As they move around, they put their hands on the walls rather than on the ground.” The animators switched back and forth between Maya and ILM’s new pipeline toolset, called Zeno. The TDs, modellers, match movers, painters and lighters, on the other hand, all worked with the new tools. “With every job, we always do something we’ve never done before,” says TD Michael Di Como: “This time, we replaced our entire pipeline.”

New pipeline with input from Europe

Parts of the new ILM pipeline have been in development since the pre-production of “Star Wars: Episode I” in 1998 and 1999, for example the award-winning camera tracking software for the pod race and later Zenviro, the camera projection mapping software. However, the actual development of the new, standardised toolset took place in the previous two years, triggered by the planned move to new premises. When “War of the Worlds” came up, ILM decided to pick up the pace once again. “If you don’t take risks, if you don’t do something under pressure, firstly it will never be finished,” Muren lectures, “and secondly it will never work properly. So the only way is to combine it with a job, and that’s what I decided to do.”

With the move to the new premises in mind, and knowing that after the final episode of “Star Wars” ILM would be processing 2,000 shots a year rather than 4,000, Muren was tasked with finding ways to reinvent ILM. Part of the result was standards for Zeno’s user interface. “We needed tools that would enable artists to take on more than one task in image creation,” he explains. “In Europe, a lot of work is done by one or two people who do everything from match moving to rendering. We weren’t set up like that before, but I think our people are ready to take that step. It all starts with an easy-to-use toolset.”

ILM’s first pipeline was based on SGI Inventor. The second, which was primarily designed to produce living creatures, was based on scene files from early versions of Softimage animation software. The centrepiece of the new pipeline is now a scene file developed and controlled by ILM. “Zeno manages all the data at the scene level,” explains Chief Technical Officer Cliff Plumer. “It’s a core toolset with a timeline, scene graph and a curve editor that loads the tools the artist needs.” This approach means that the pipeline is open to innovation.

Everything under one roof

All of ILM’s older proprietary tools have been rewritten. And by integrating the Python scripting language, new modules can now be easily added. A simulation engine that calculates clothing, liquids, hair and skin is one of the key achievements. Models can consist of subdivision surfaces, polygons or NURBS. A new lighting package called Lux delivers what TD Di Como calls “true 3D lighting for particles and creatures”. And there is a two-way connection between renderers such as Mental Ray, RenderMan or Brazil and Zeno’s compositing module. Live links allow software such as Photoshop, Inferno and Shake to appear as modules within Zeno, making it easier for users with experience of commercial software to get started with Zeno. ILM’s software is also fast: the solution, which is programmed in 64-bit throughout, accesses Nvidia graphics cards directly.

The most important change, however, is in the workflow: artists now work with a standardised user interface that is based on Maya and allows them to work easily with any tool from the toolset (just as Dennis Muren had planned). Also built into Zeno is what software developer Alan Trombla calls “non-destructive overwriting” – it ensures the integrity of all elements in a scene, regardless of who edits an element and when. “Zeno keeps everything separate, but to a TD it looks like everything is merged. Modellers can paint, painters can animate and compositors can simulate. TDs can light, paint, animate and model. If they want to.”

“The new pipeline challenges artists to rethink,” says TD Curt Miyashiro. “Animation used to be cached. Now TDs can adjust, change, fine-tune and add correction shapes to the animation to enhance an animated model.” Miyashiro provides a simple example from the film: “We had to change the direction of the tripod headlights to fit the shot,” he says. “In the past, we would have had to rely on the animators to do that.” One of the shots was actually created from start to finish by modeller Michael Koperwas. “It was a shot of a spider crawling up Dakota Fanning,” explains Miyashiro: “He modelled it, painted it, rigged it and lit it.”
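The idea of edits that are kept separate yet appear merged can be illustrated with layered lookups. The sketch below uses Python’s standard `collections.ChainMap`; it says nothing about how Zeno actually stores its scene file, and the attribute names and values are hypothetical, loosely modelled on the headlight example.

```python
from collections import ChainMap

# Each department writes into its own layer; nobody touches anyone else's data.
model_layer = {"headlight.direction": (0.0, -1.0, 0.0), "headlight.intensity": 1.0}
animation_layer = {"headlight.direction": (0.2, -0.9, 0.1)}
td_layer = {}

# Lookups search the layers from left to right, so later edits win
# without overwriting what lies underneath.
scene = ChainMap(td_layer, animation_layer, model_layer)

# The TD redirects the headlight to fit the shot.
td_layer["headlight.direction"] = (0.5, -0.8, 0.0)
merged_direction = scene["headlight.direction"]

# The animator's value is still intact and comes back if the override is dropped.
del td_layer["headlight.direction"]
restored_direction = scene["headlight.direction"]

print(merged_direction, restored_direction, scene["headlight.intensity"])
```

The merged view is what the artist sees, while every layer remains individually recoverable, which is one plausible reading of “regardless of who edits an element and when”.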

How much impact the new pipeline will have on ILM’s artists remains to be seen. But if initial tests are any indication, it will likely help the studio meet the growing demand for high-quality, yet quickly created effects. And because the pipeline was co-developed and will be shared by LucasArts, the game development arm of Lucas Digital, there are many possibilities yet to be discovered. “I’ve seen the difference in the energy with which people have approached the work,” summarises Muren. “Some of them really enjoyed it and I think they got their things done faster. The culture still needs to change, but we have initiated a philosophy that we are open to people wanting to learn. It will probably take a year.” After a moment’s thought, he corrects himself: “Maybe six months.”