
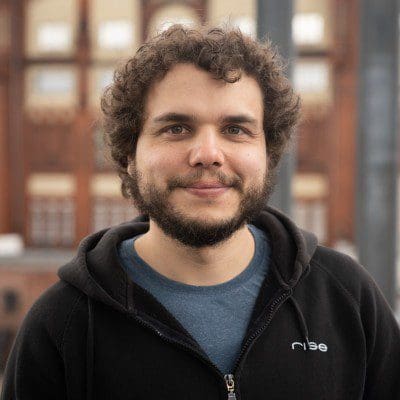

Fallout Season 2 asked for more scale and less forgiveness. Rise delivered 244 shots across seven episodes, with the heaviest work landing early, and kept two sites moving as one crew. The playbook stayed familiar, but the demands went feral: closer cameras, heavier mechanical interaction, city-wide procedural destruction, and sand-driven reveals that punished every shortcut.

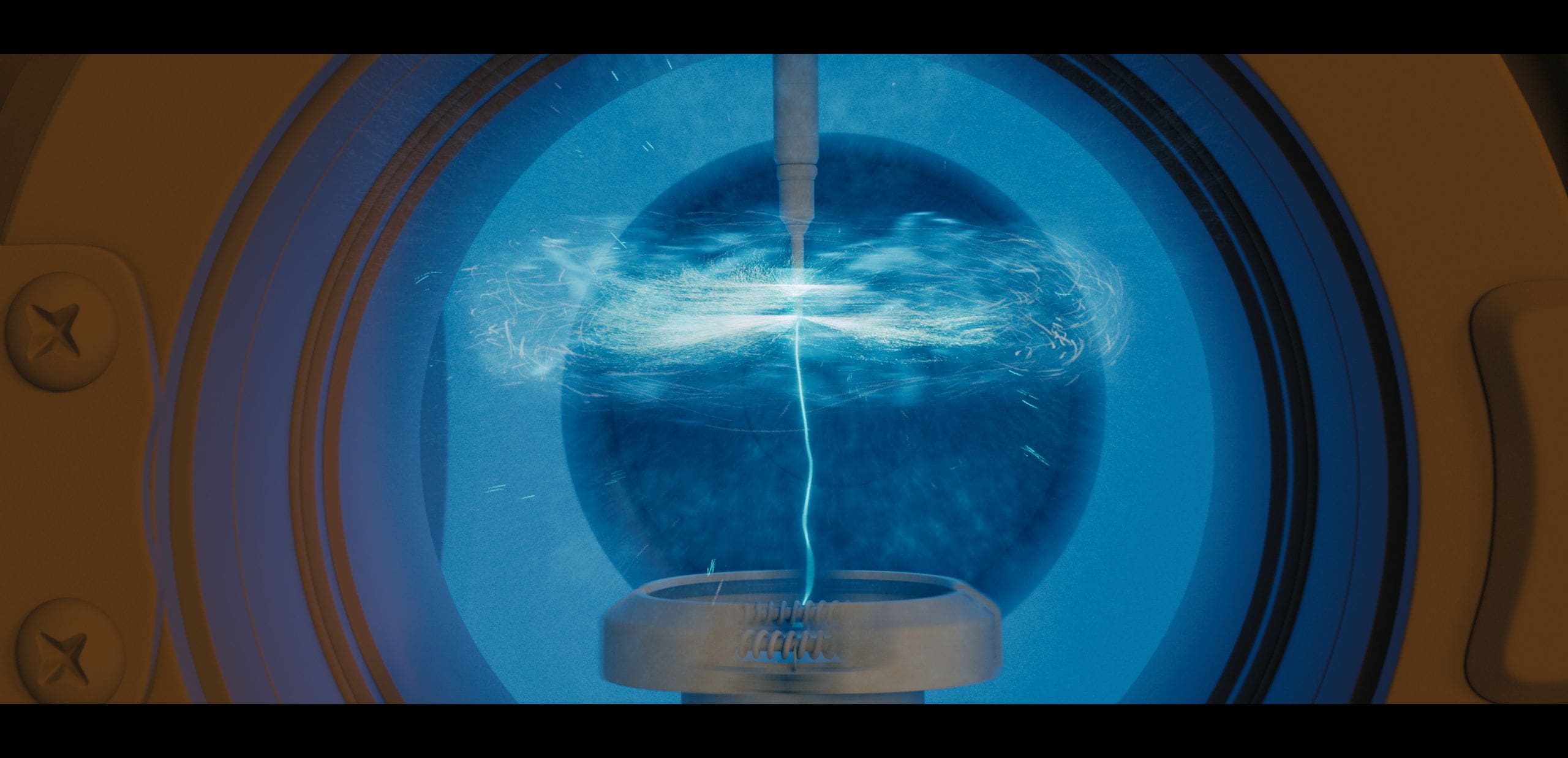

Andreas Giesen is a Rise VFX Supervisor with a Houdini-heavy background and a track record on FX-intensive shows. He is credited by the Television Academy for a 2024 Primetime Emmy nomination for Fallout in “Outstanding Special Visual Effects in a Season or a Movie”. His Rise FX credits include serving as the studio VFX Supervisor on The Matrix Resurrections, as well as work on X-Men, The Matrix, The Last of Us and various entries in the Marvel universe, among others.

DP: When we last spoke about Fallout Season 1, you worked on a show that combined large-scale environments, destruction, Vertibirds, Power Armor, and complex integration work. On Season 2, where did the challenge shift?

Andreas Giesen: The challenge shifted purely to massive scale and extreme scrutiny. In Season 1, we were establishing the rules. In Season 2, we took those rules and stress-tested the pipeline. We had a huge jump in scale, like procedurally destroying the entirety of Los Angeles or dealing with the multi-layered sand reveals of Area 51, but we were operating with roughly the same core team size. Brute forcing was not an option. We had to lean heavily on our procedural setups and the USD foundation we built in Season 1 just to survive the shot count.

DP: Did Season 2 feel like a continuation of an established visual language, or more like a technical reset because the demands had changed so much?

Andreas Giesen: Artistically, it was a continuation. We already had that shorthand with Jay Worth and Jonathan Nolan, so we knew what that tactile, grounded Fallout look felt like.

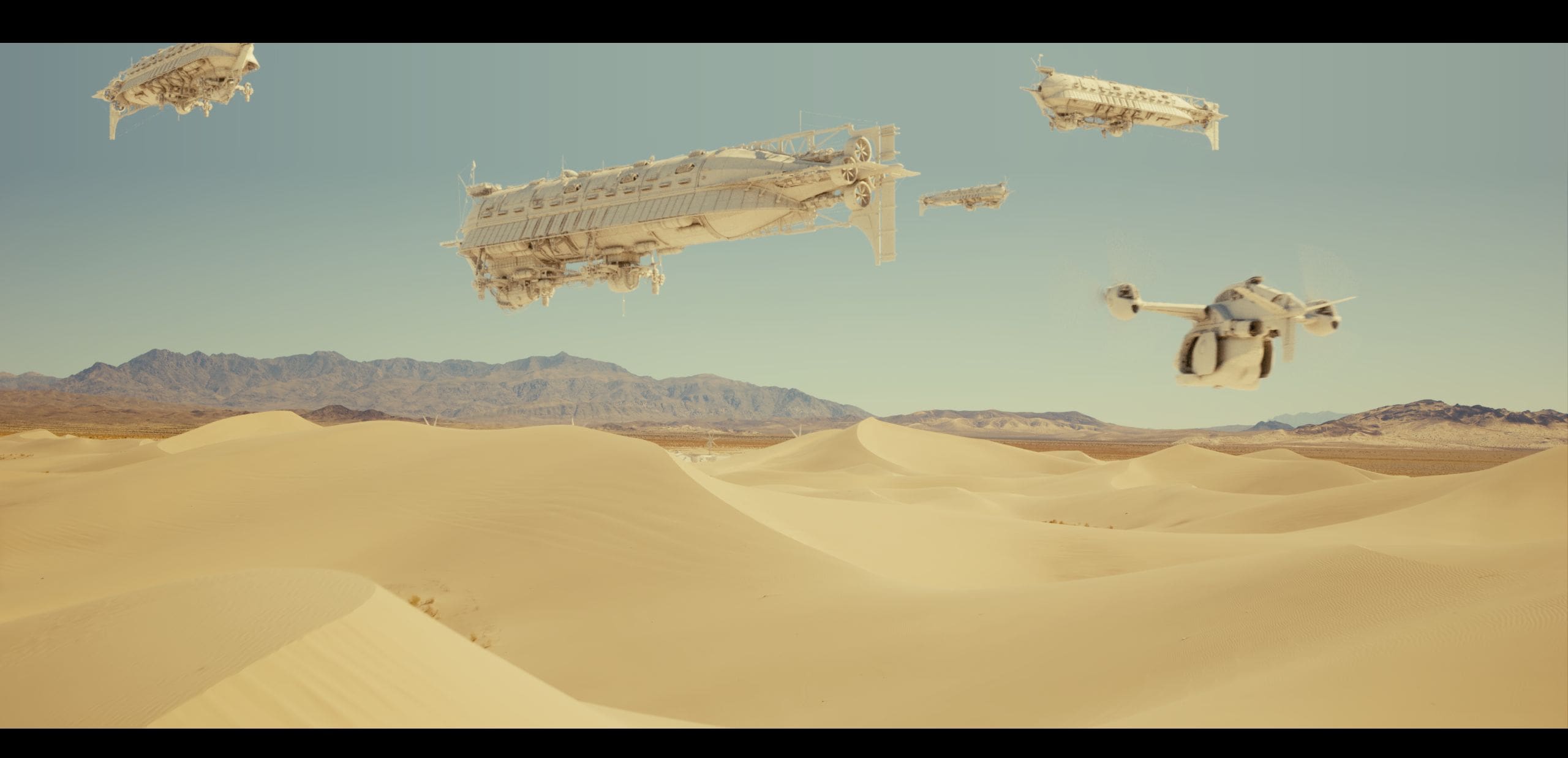

Technically, though, it was a massive upgrade. We did not throw everything away, but we had to rebuild or upgrade many assets because the camera was suddenly much closer or the interactions were much more complex. The Vertibird docking with the Caswennan, for instance, required a whole new level of mechanical detailing that just was not necessary in Season 1.

DP: RISE delivered work across several episodes, with particularly dense work in some of the early ones. How did that affect the structure of the production on your side?

Andreas Giesen: We delivered 244 shots spread across seven episodes, but the bulk of the heavy lifting hit right in episodes 2 and 3. To keep the chaos in check across our Berlin and Stuttgart offices, we operated as one unified team. We ran single VFX dailies so it never felt like two separate facilities. We relied heavily on our in-house production management tool, risebase 3, to keep the sites synced.

Honestly, a massive shoutout to our VFX Producer Annelie Dangel, Production Manager Scott Kunysz, and the coordination team. They were the real firewall keeping the production structured while the artists dealt with the pixel pushing.

DP: You mentioned that the work was divided into more specialised teams. How exactly did that division work in practice?

Andreas Giesen: Instead of splitting the work purely by department, where an asset just gets tossed over the wall, we built small, cross-functional strike teams dedicated to specific sequences. For example, we had a dedicated Area 51 team and a completely separate Caswennan team.

Each of these pods basically consisted of one layout artist, one lighting artist, and an FX artist if the sequence required it. This meant the artists became absolute experts on the continuity, lighting setups, and specific technical hurdles of their assigned sequence, rather than constantly context switching between different environments.

DP: The Caswennan ship has become even more important in Season 2. At what point does a hero asset like that stop being “just a vehicle”?

Andreas Giesen: When you get that close, it absolutely stops being just a vehicle and becomes a massive flying set. The layout has to treat it like a static environment just to block the shots, especially when we are tracking complex character animation and heavy mechanical interactions, like the Vertibird docking, directly onto the hull.

DP: What were the rebuild decisions under the hood?

Andreas Giesen: It was actually a really interesting round-trip process. Since the flight deck scenes were shot on a virtual stage, we received the Unreal Engine asset from production as our starting point. Funny enough, that Unreal asset was originally based on our own Season 1 dirigible model!

So, our CG team, led by Jenny Leupold, took the updated proportions from the virtual stage, brought the asset back into our pipeline, and completely re-engineered all the high-res cinematic details and shading quality.

DP: How did you approach balancing a highly detailed close-up asset with practicality for layout, lighting, and shot iteration?

Andreas Giesen: We relied heavily on our USD workflows and instancing. We had to design functional heavy hydraulics and complex mechanical joints so the lifting arm physically made sense up close, but we could strip that back or use lighter variants for wider layout tasks so the scene would not grind to a halt in the viewport.
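The idea of swapping a hero asset for lighter variants can be illustrated with a small sketch. This is not Rise's setup: the variant names, the distance thresholds, and the `AssetRef` type are all assumptions made up for the example. In a USD pipeline a choice like this would typically be expressed as a variant selection on the asset; here it is reduced to plain Python to show the logic only.

```python
from dataclasses import dataclass

# Hypothetical variant names and distance limits, for illustration only.
DETAIL_VARIANTS = [
    (50.0, "hero"),           # close-ups: full hydraulics and mechanical joints
    (300.0, "mid"),           # medium shots: simplified mechanics
    (float("inf"), "proxy"),  # wide layout work: lightweight stand-in
]


@dataclass
class AssetRef:
    name: str
    distance_to_camera: float  # assumed to be supplied by layout, in metres


def pick_variant(distance: float) -> str:
    """Return the first variant whose distance limit covers the camera distance."""
    for limit, variant in DETAIL_VARIANTS:
        if distance <= limit:
            return variant
    return DETAIL_VARIANTS[-1][1]


def resolve_scene(assets):
    """Map each asset name to the detail variant chosen for this shot."""
    return {a.name: pick_variant(a.distance_to_camera) for a in assets}


if __name__ == "__main__":
    shot = [AssetRef("lifting_arm", 12.0), AssetRef("airship_hull", 800.0)]
    print(resolve_scene(shot))
```

The point of the design is that layout only ever loads what the camera distance justifies, while the hero detail stays available for the shots that need it.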

DP: In Season 2, there seems to have been a stronger overlap between physical production, virtual production, scans, and final CG. How did that affect the pipeline in concrete terms?

Andreas Giesen: It meant we lived and breathed LIDAR. For the Area 51 reveal and the hangar sequences, production gave us incredible plates and highly accurate scan data. Having that precise spatial data was the only way to guarantee a seamless transition from the practical sets into our massive digital extensions. Once that data enters production, it locks in your scale, distance, and speed, which were crucial for making the flight dynamics feel grounded in reality.

DP: There was also mention of Unreal-based virtual production material related to the airship. How did you manage the relationship between real-time assets, scanned set data, and final cinematic assets?

Andreas Giesen: Again, USD was the bridge. We took the Unreal assets, which gave us the exact scale and spatial relationships from the LED volume, and used them as the spatial framework to build our final pixel cinematic assets over the top. It ensured that when we integrated the final CG back into the live action plates, all the contact points and lighting references matched the physical set pieces perfectly.

DP: Was the biggest benefit from USD technical elegance, or simply that it stopped artists from stepping on each other’s work?

Andreas Giesen: Ha, a little bit of both, but honestly, stopping the file overwrites is worth its weight in gold. On a more technical level, the real elegance was how flawlessly it let us bridge the gap between Maya and Houdini.

For the Shady Sands environment, our Layout Lead Karin Wolf and her team built the pristine, non-destroyed city parts in Maya. We then brought that via USD straight into Houdini to scatter all the granular details and endless cornfields. Being able to mix and match layouts without friction meant artists could just stay in the tool they were fastest in.

DP: The Vertibird work in Fallout has always been interesting because it needs to feel stylised and believable at the same time. What were the challenges for it in Season 2?

Andreas Giesen: The challenge is that it is a massive, lumbering vehicle, but it still needs personality. For Xander Harkness arriving at Area 51, he is a fantastic, slightly cocky pilot, so we had to inject this aggressive precision and specific swagger into the flight path and mechanical braking.

The integration challenge, especially during the Caswennan docking, was finding that fine balance of heavy momentum. We had to ease the mass and simulate procedural micro vibrations to show it buffeting in the air currents. The hardest part was nailing the exact frame where the mechanical arm takes over the full weight and the rotors power down.

DP: How much of selling a vehicle like that comes from animation, and how much from camera language, editorial rhythm, and integration into atmosphere and environment?

Andreas Giesen: Animation gets you the weight and the performance, but the environment integration is what sells the lie. In the hangar landing, the plate was dramatically backlit. Our lighters had to introduce specific light sources to shape the geometry nicely and match the foreground. Then the FX rotor wash tied everything together. We simulated heavy CG dust and debris blowing right past the lens, matching the practical wind from the live-action plate to physically anchor the digital ship into the real set.

DP: For sequences involving scanned practical sets, such as hangar or landing environments, what are the biggest pitfalls once the scan data actually enters production?

Andreas Giesen: The biggest pitfall is that the scan data and the live action plate lock you into absolute physical reality. If the plate has a specific wind direction or interactive lighting, absolutely every piece of CG vegetation, dust, or vehicle integration has to physically react to that exact same environmental energy, or the illusion breaks immediately.

DP: Fallout still has this very specific balance: it is heightened and absurd in places, but the world still needs physical credibility. How does that affect VFX decisions in review?

Andreas Giesen: You have to ground the absurdity in absolute, boring physical reality. For the dramatic dirigible crash, which is inherently a heightened, spectacular moment, we went straight to historical footage of the Hindenburg. We ran intense, clustered simulations starting with plastic deformation for the metal bending, triggering RBD sims for the hull ripping apart, and feeding that into multiple layers of pyro for flames, smoke, and contact sand. If the physics are incredibly strict, the audience will buy into the universe’s stylised madness.

DP: On a show like this, where does proceduralism genuinely save time, and where does it still need a heavy layer of manual art direction to become production-ready?

Andreas Giesen: Proceduralism saves you on the sheer volume, but art direction saves the shot. For the destroyed Los Angeles, we started with basic map data, literally just cubes and planes. Our brilliant TD, Kilian Baur, built a Houdini tool that procedurally converted those cubes into heavily detailed, destroyed buildings. It generated massive amounts of geometry. But you cannot just hit simulate and walk away. We had to manually paint the degree of destruction across different zones for compositional reasons. The tool does the heavy lifting, but the artist still has to compose the frame.
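The split between procedural generation and painted art direction can be sketched in a few lines. This is a toy model and not the Houdini tool described above: the `Footprint` type, the rectangular "painted" zones, and the output parameters are all hypothetical. It only shows the principle that a hand-authored destruction level drives a deterministic procedural result per building.

```python
import random
from dataclasses import dataclass


@dataclass
class Footprint:
    building_id: int
    x: float
    y: float
    height: float


def painted_destruction(x, y, zones):
    """Look up the art-directed destruction level (0..1) at a position.

    zones is a list of (xmin, ymin, xmax, ymax, level) rectangles standing in
    for a painted mask; later zones override earlier ones.
    """
    level = 0.0
    for xmin, ymin, xmax, ymax, zone_level in zones:
        if xmin <= x <= xmax and ymin <= y <= ymax:
            level = zone_level
    return level


def destroy(fp, zones, seed=0):
    """Turn one simple footprint into destruction parameters."""
    level = painted_destruction(fp.x, fp.y, zones)
    # Seed per building so every re-run yields the same result for the same input.
    rng = random.Random(seed * 1_000_003 + fp.building_id)
    if level > 0.0:
        # Small random variation so neighbouring buildings do not look identical.
        level = min(1.0, max(0.0, level + rng.uniform(-0.1, 0.1)))
    return {
        "building_id": fp.building_id,
        "destruction": level,
        "remaining_height": fp.height * (1.0 - 0.8 * level),
        "rubble_piles": round(level * 10),
    }


if __name__ == "__main__":
    zones = [(0, 0, 100, 100, 0.5), (40, 40, 60, 60, 1.0)]
    for fp in [Footprint(1, 50, 50, 30.0), Footprint(2, 200, 200, 30.0)]:
        print(destroy(fp, zones))
```

Because the randomness is seeded per building, repainting one zone changes only the buildings inside it, which is what makes this kind of setup safe to iterate on.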

DP: Was there a particular sequence in Season 2 where the real constraint was neither the creative idea nor the client feedback, but simply scene complexity, cache weight, or iteration cost?

Andreas Giesen: The dirigible crash and the Area 51 reveal were absolute beasts for cache weight. For Area 51, we were blending volumetric dust, suspended sand particles, and contact sand actively swirling off the geometry. The dirigible had even more interactive layers, expertly managed by FX TD Arnaud Malherbe under Jens Martensson.

We survived by heavily clustering the sims for faster turnarounds and by relying on deep compositing workflows in Nuke. Our Compositing Supervisor Michael Lankes, Comp Lead Kader Bagli, and Comp Lead Jannik Walzer did incredible work taking those massive datasets and balancing them without bringing the farm to its knees.

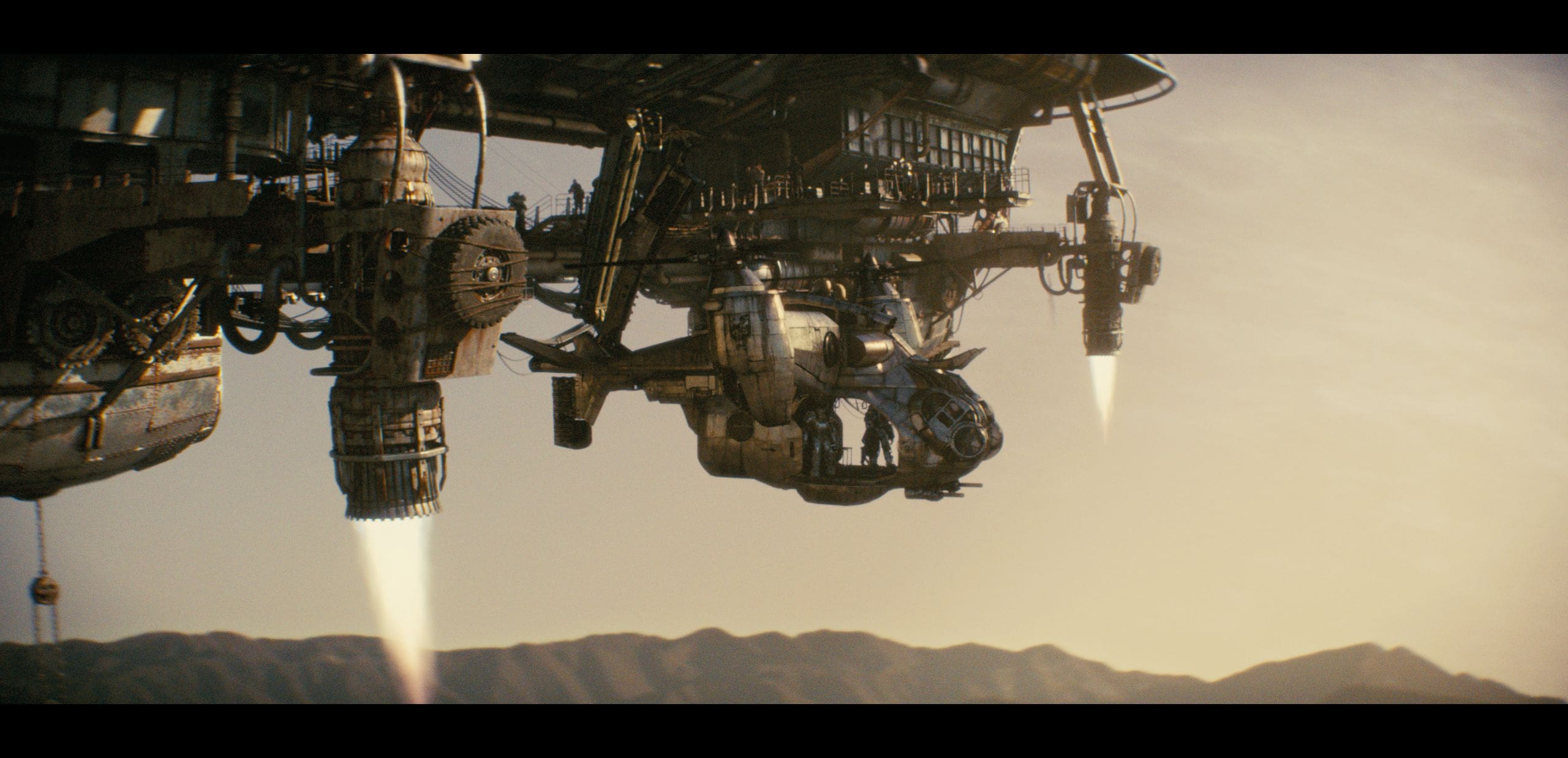

DP: What became easier on Season 2?

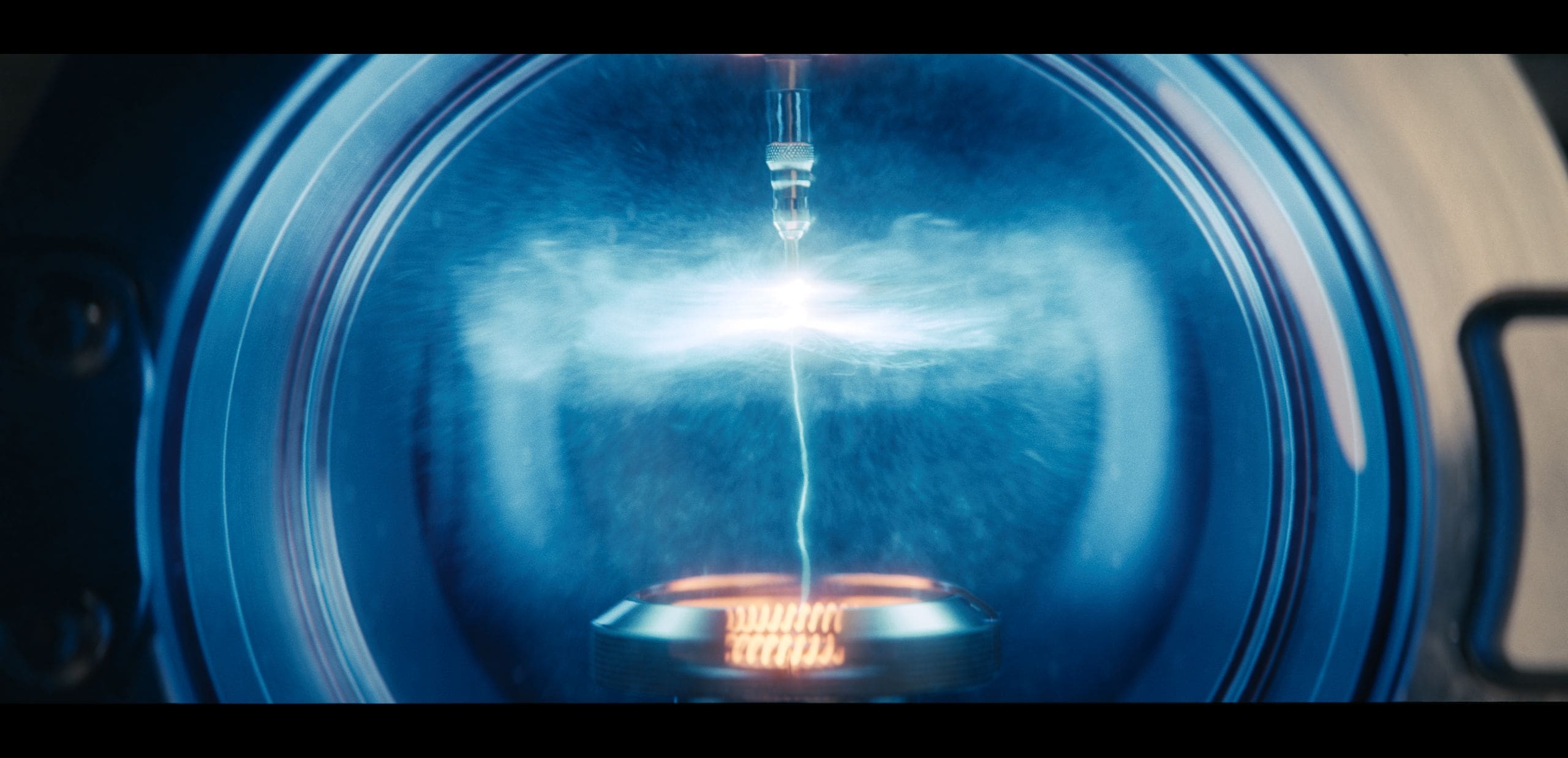

Andreas Giesen: Things like lookdev for the energy signatures became easier. We reused the core effect for the cold fusion and diode glows from Season 1. But because we had to scale it up to so many more shots, we had to build highly efficient setups in Nuke involving heavy rotomation and complex relighting, since that blue glow affects every shiny metal surface around it. The look was established, but the volume and the necessary integration were significantly harder.

DP: Was there a shot or sequence this season that looks deceptively simple in the final cut, but was technically far more difficult than most viewers would ever suspect?

Andreas Giesen: It has to be the Power Armor enhancements. Production has this absolutely incredible practical suit that a stunt performer wears on set. However, because it is a physical, wearable suit, sometimes individual parts will naturally jiggle or bounce a bit too much during heavy action, which breaks the illusion of solid, heavy metal armor.

In the shot where the massive dirigible is coming down and exploding in the background, we actually had to completely replace the shoulders of the Power Armor while the character is running away. Everyone in the audience is obviously staring at the massive fiery airship crash taking up the sky, but there we are, meticulously tracking and doing invisible CG replacement on shoulder pads just to make sure the suit feels appropriately heavy.

DP: And conversely, was there a shot that looked terrifying at first but became manageable once the right structure or workflow was in place?

Andreas Giesen: Definitely the procedural destruction of Los Angeles. The idea of destroying a city of that scale is terrifying. But once Kilian’s tool was in place, and we utilised our USD pipeline to block the shots with simple geometry while the high-res destruction updated seamlessly in the background, it actually became a highly manageable, iterative process. We could instance the endless piles of rubble and rusted cars, which kept the massive scale from becoming unrenderable.

DP: What is a technical lesson from Fallout Season 2 that would transfer well to other large episodic shows?

Andreas Giesen: The absolute golden rule is to keep everything as procedural as humanly possible so you can actually survive rapid client changes. That, combined with establishing those small expert strike teams we talked about, is how you manage the chaos. But the real lifesaver that transfers to any heavy show is aggressive pipeline automation. For sequences with massive shot counts, we utilised our proprietary riseflow tools to completely automate the heavy lifting.

We could submit CG renders from one principal master scene across 50 different shots before leaving the studio. By the next morning, the pipeline had automatically generated 50 temporary composites, already perfectly combining the new CG with the live-action plates using deep learning. Waking up to 50 ready-to-review temp comps instead of a massive rendering bottleneck is the only way to deliver a show of this scale.
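The overnight pattern described here, one master scene fanned out to many shots with a temp comp chained to each render, can be sketched as a small dependency graph. This is not riseflow, whose interface is not described in the interview: the `Job` type, the job naming, and the scheduling function are hypothetical and only illustrate the render-then-comp chaining.

```python
from dataclasses import dataclass, field


@dataclass
class Job:
    name: str
    kind: str    # "render" or "temp_comp"
    shot: str
    scene: str   # the master scene every shot renders from
    depends_on: list = field(default_factory=list)


def build_overnight_jobs(master_scene, shots):
    """Create one render job and one dependent temp-comp job per shot."""
    jobs = []
    for shot in shots:
        render = Job(f"{shot}_render", "render", shot, master_scene)
        comp = Job(f"{shot}_tempcomp", "temp_comp", shot, master_scene,
                   depends_on=[render.name])
        jobs.extend([render, comp])
    return jobs


def run_order(jobs):
    """Release each job only after all of its dependencies are done."""
    done, order = set(), []
    pending = list(jobs)
    while pending:
        ready = [j for j in pending if all(d in done for d in j.depends_on)]
        if not ready:
            raise ValueError("dependency cycle or missing job")
        for job in ready:
            order.append(job.name)
            done.add(job.name)
        pending = [j for j in pending if j.name not in done]
    return order


if __name__ == "__main__":
    shots = [f"sh{i:03d}" for i in range(50)]
    jobs = build_overnight_jobs("master_v001", shots)
    print(len(jobs), "jobs queued;", run_order(jobs)[:2], "...")
```

A real farm would run the ready jobs in parallel; the sketch only shows that no temp comp can start before its own render has finished.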

DP: What is the next project you are working on, insofar as you can already talk about it, and is there anything from Fallout Season 2 that you are taking with you into that next production?

Andreas Giesen: I am currently working on something very exciting, but unfortunately, it is strictly under wraps right now, so I cannot speak about it just yet. But I am absolutely taking the USD workflows and the procedural pipeline strategies we refined on Fallout straight into this next challenge!