In his third appearance on the big screen, Spider-Man has to fight three villains who demand everything not only from him, but also from the effects team. DP investigated how Imageworks created characters out of sand and black mass and sent actors hurtling through the air.

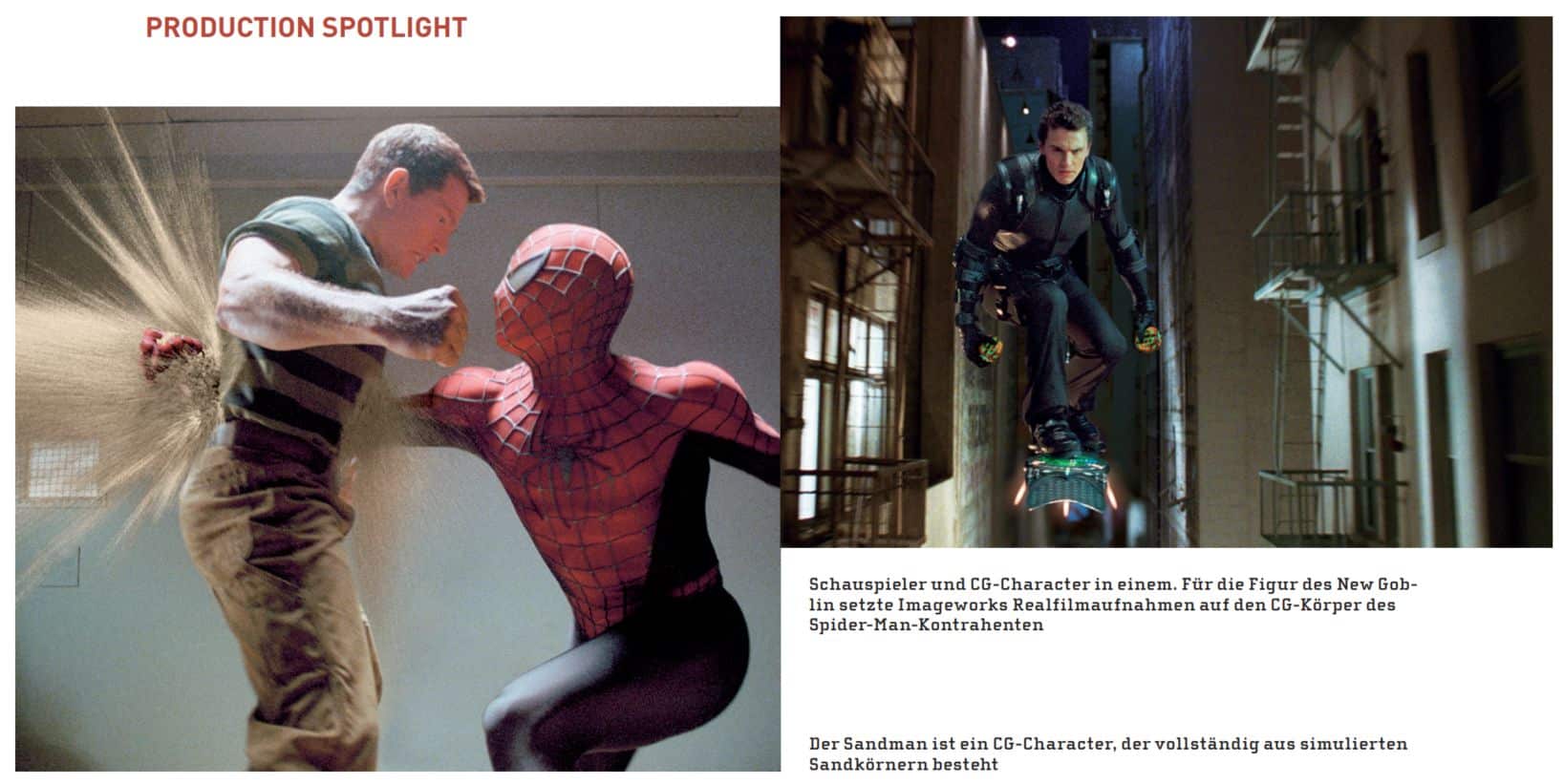

As if coping with a double identity weren’t hard enough: in the third instalment, Peter Parker aka Spider-Man (played by Tobey Maguire) also has to contend with the darker side of himself – and with three villains, two of whom are completely digital. The third, who only occasionally appears as a digital double, is his former best friend Harry Osborn (James Franco), who has followed, or rather rolled, in his father’s footsteps: he becomes the New Goblin, a jet-powered skateboarder. The other two villains are Sandman and Venom. Sandman is sometimes a particle version of Flint Marko (Thomas Haden Church) and sometimes a cloud of dust. Venom is a symbiote: a black mass that first attaches itself to Spidey and then to Eddie Brock (Topher Grace).

Spider-Man 3 is once again directed by Sam Raimi; Scott Stokdyk from Sony Pictures Imageworks, who won an Oscar for his visual effects in Spider-Man 2, returns as visual effects supervisor. For this film, he led a team of 270 artists at Imageworks who worked for more than two years to create their 950 shots. Eight other studios also contributed shots: BUF, Evil Eye Pictures, Furious FX, Gentle Giant Studios, Giant Killer Robots, Halon Entertainment, Tweak Films and X1fx. However, Imageworks retained the key work, realising the fight between Peter and Harry, placing the villains in their settings and creating the final battle where the actors fight each other. “There were more actors and more villains in this film. That made everything a good deal more difficult,” recalls Stokdyk. “We had fight scenes with multiple characters.”

Street fight

The first sequence Imageworks created for the film was the fight between Peter Parker in everyday clothes and Harry Osborn, mostly in his New Goblin costume. Although Peter is not wearing his spider suit, he still shoots cobwebs to swing through the street after Harry and his speeding skateboard.

“It was kind of a showcase for the film for a long time, a promotional clip,” reveals CG supervisor Grant Anderson. “We wanted to make it look as good as possible.” To build the street, Imageworks used its Assembly Component System, a directory structure for metadata built in Maya. “We have component hierarchies that create cities,” Anderson explains. “The system tracks every building and every building component; it reads in constructions that you’ve put together and stores them in RIB files.”

A real spectacle

Imageworks developed a new system for the digital doubles that fly through the streets; for the first time, Spider-Man swings around without wearing a mask. “The aim was to give Sam [Raimi] the performance of the real actor and not a CG interpretation of it,” reports Stokdyk.

They began by animating the scene in Maya. Plate lead John Schmidt extracted the movement of the animated head relative to that of the camera. He then placed each actor in the bluescreen set on a motorised turntable and filmed them with a motion control camera, using the information from the animation files. “We kept the connection between the animated head and the actor,” explains Schmidt. For example, if the camera is pointed at the actor’s face at the beginning of the shot and points to the back of his head at the end, Schmidt programmed the turntable to rotate accordingly. The camera could move away or closer depending on the animation data, but the actor’s head always remained in the centre of the frame.
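The article does not describe Schmidt's actual tooling, but the underlying idea can be sketched in a few lines of Python. The sketch assumes that every animated frame supplies a head position, a head facing direction and a camera position (the function names and the tuple layout are hypothetical). From these it derives a turntable angle and a camera distance, so that the filmed actor is seen from the same relative angle as the animated head. Only rotation about the vertical axis is handled.

```python
import math

def turntable_yaw_degrees(head_pos, head_forward, camera_pos):
    """Angle between the head's facing direction and the line of sight to the
    camera, measured in the horizontal x/z plane (y is assumed to be up).
    0 means the camera sees the face, +/-180 the back of the head."""
    to_camera = (camera_pos[0] - head_pos[0], camera_pos[2] - head_pos[2])
    angle_camera = math.atan2(to_camera[1], to_camera[0])
    angle_forward = math.atan2(head_forward[2], head_forward[0])
    yaw = math.degrees(angle_forward - angle_camera)
    # Wrap into the range [-180, 180).
    return (yaw + 180.0) % 360.0 - 180.0

def motion_control_track(frames):
    """frames: list of (head_pos, head_forward, camera_pos) tuples.
    Returns one (turntable_angle_degrees, camera_distance) pair per frame."""
    return [
        (turntable_yaw_degrees(head, forward, camera), math.dist(camera, head))
        for head, forward, camera in frames
    ]
```

Because the head stays at the centre of the turntable, the relative angle and the distance are enough to reproduce the framing in this simplified setup; a real rig would also need camera tilt and height.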

Because the fast-moving superheroes’ actions could not be performed on stage at the speed they have in the film, the actors often had to perform their facial expressions in slow motion. In this way, they delivered spoken lines, grimaces, snorts, expressions of pain and so on.

In the end, compositors selected frames from the scanned footage of the actors’ faces and used them to replace the CG faces in the shots. Although this system required good planning and an early commitment to the animation, the result was shots in which the digital body performs impossible feats with the actor’s real face. However, the interpretation of Thomas Haden Church’s second incarnation as Sandman was completely CG and it is hard to imagine how the visual effects team could have created this creature any other way. The same goes for Venom, of course. Furthermore, both villains have something in common, even though one character is made of sand and the other of an oily black mass: “The crucial trick with these two characters in particular was that the effects and character animators had to work together,” explains Stokdyk.

Built from sand

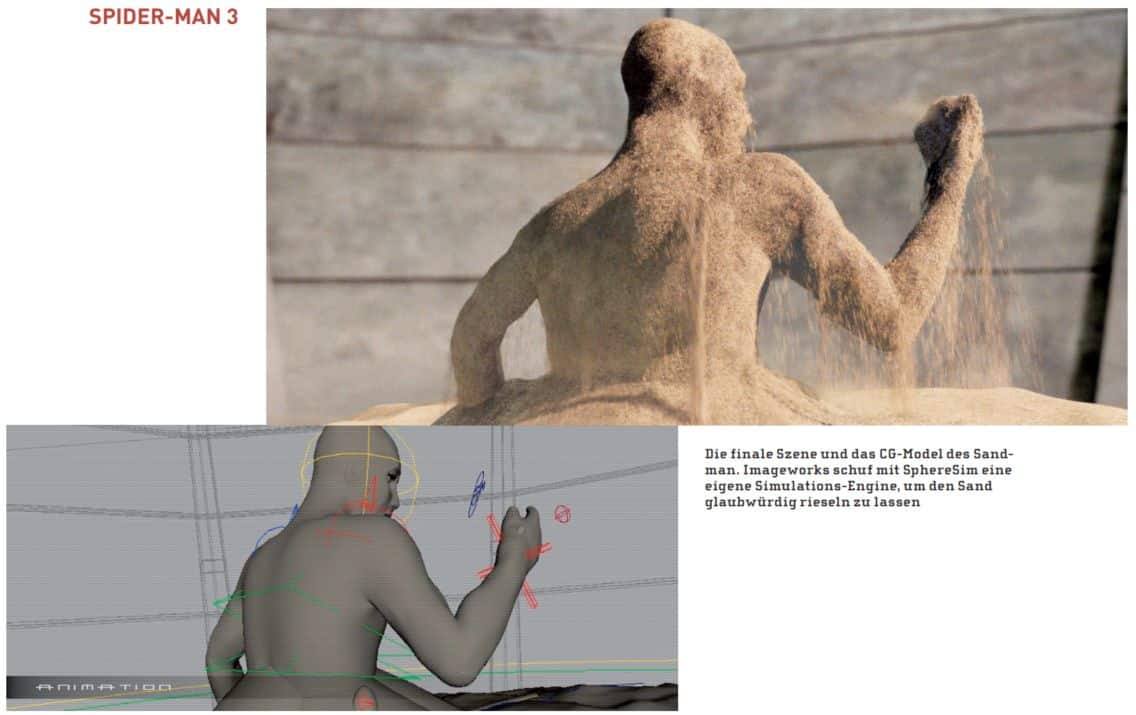

In the film, Church falls into a particle accelerator and mutates. His atoms combine with sand, turning him into a grainy quick-change artist. His “birth scene” as Sandman was the most difficult. In this scene, his figure rises from a pile of sand. “It’s as if something is growing in the sand that eventually takes on human form,” describes animation lead Bernd Angerer. “It takes him a little while before he realises what’s going on. He was human before, but then he was destroyed. This is his rebirth.”

For this shot, the effects team had to develop a system that piles grains of sand on top of each other, creates facial expressions with the particles, lets sand trickle off the figure and creates clouds of dust. “We didn’t want it to look like an object was pushing through the sand, nor did we want to create a cloud of sand,” explains Doug Bloom, who worked on the project as Sand Effects Supervisor. “The character is made up of individual grains of sand and each grain has a consciousness.”

Sand simulator

Imageworks’ software development team drew on concepts from studies of particle physics and of the dynamics of grains such as wheat or corn, as well as experience with conventional computer graphics, to program a system called SphereSim. SphereSim simulates solid spheres that can collide with each other or with other geometry and can stack on themselves and on other geometry. It includes a simplified version of the rigid-body equations with reduced friction, which allows the solids to move like a fluid. Because the simulator always works with simple spheres and does not calculate rotation, the team was able to run simulations with more than a million grains of sand at the same time.
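SphereSim itself is proprietary and the article gives no implementation details. As a rough illustration of the general idea only, the following Python sketch moves equal-sized spheres under gravity, pushes overlapping spheres apart and keeps them above a flat ground plane. It tracks no rotation and no friction. The position-correction scheme, the function name and all parameters are assumptions made for this example, and the pairwise loop would not scale to a million grains.

```python
import math

def step(positions, velocities, radius, dt=1.0 / 24.0, gravity=-9.81, iterations=10):
    """Advance equal-sized spheres by one time step, in place.
    positions and velocities are lists of [x, y, z] lists; y is up, ground at y = 0."""
    previous = [p[:] for p in positions]
    # Predict new positions from gravity. Spheres carry no orientation.
    for p, v in zip(positions, velocities):
        v[1] += gravity * dt
        for k in range(3):
            p[k] += v[k] * dt
    # Repeatedly push overlapping spheres apart and clamp them to the ground.
    for _ in range(iterations):
        for i in range(len(positions)):
            for j in range(i + 1, len(positions)):
                delta = [positions[j][k] - positions[i][k] for k in range(3)]
                dist = math.sqrt(sum(c * c for c in delta))
                overlap = 2.0 * radius - dist
                if overlap > 0.0:
                    normal = [c / dist for c in delta] if dist > 1e-9 else [0.0, 1.0, 0.0]
                    for k in range(3):
                        positions[i][k] -= 0.5 * overlap * normal[k]
                        positions[j][k] += 0.5 * overlap * normal[k]
        for p in positions:
            p[1] = max(p[1], radius)
    # Derive velocities from the corrected positions (inelastic, frictionless).
    for p, q, v in zip(positions, previous, velocities):
        for k in range(3):
            v[k] = (p[k] - q[k]) / dt

# Usage: one sphere resting on the ground, a second dropped onto it.
radius = 0.1
positions = [[0.0, 0.1, 0.0], [0.0, 0.5, 0.0]]
velocities = [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]]
for _ in range(100):
    step(positions, velocities, radius)
```

After the loop the second sphere rests on top of the first, which is the stacking behaviour the paragraph describes, here in its smallest possible form.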

To keep the simulation speed high while the sand piles up, the software removes spheres that lie outside the camera’s field of view. “We spent a lot of time developing and improving controls for the technical directors [TDs],” recalls Bloom. “The simulation engine can calculate a very high number of the small, stiff spheres and the TDs can add or remove additional spheres at any time. The environment remains stable.” Because they wanted to use faster particle simulations wherever possible, the team represented all collision geometry as Particle Level Sets (PLS) or height fields. PLSs are a powerful tool for representing and evolving free surfaces.
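A height field makes collision tests cheap because the ground reduces to a grid of heights that can be looked up directly. The following Python sketch shows that lookup with bilinear interpolation and a simple vertical push-out for a sphere. The grid layout (`heights[iz][ix]` with a uniform `cell` size) and both function names are assumptions for illustration; the studio's own file format and collision code are not described in the article.

```python
def sample_height(heights, cell, x, z):
    """Bilinearly interpolate a height field stored as heights[iz][ix].
    The grid needs at least 2 x 2 samples; positions outside it are clamped."""
    nx = len(heights[0])
    nz = len(heights)
    fx = min(max(x / cell, 0.0), nx - 1.0)
    fz = min(max(z / cell, 0.0), nz - 1.0)
    ix = min(int(fx), nx - 2)
    iz = min(int(fz), nz - 2)
    tx = fx - ix
    tz = fz - iz
    near_row = heights[iz][ix] * (1.0 - tx) + heights[iz][ix + 1] * tx
    far_row = heights[iz + 1][ix] * (1.0 - tx) + heights[iz + 1][ix + 1] * tx
    return near_row * (1.0 - tz) + far_row * tz

def resolve_against_ground(center, radius, heights, cell):
    """Lift a sphere so that it rests on the height field.
    Simplification: the sphere is pushed straight up, ignoring the slope."""
    x, y, z = center
    lowest_allowed = sample_height(heights, cell, x, z) + radius
    return (x, max(y, lowest_allowed), z)
```

For example, on a ramp given by `[[0.0, 1.0], [0.0, 1.0]]` with `cell=1.0`, a sphere of radius 0.1 at `(0.5, 0.0, 0.5)` is lifted to a height of about 0.6.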

“This allowed us to take the particles from a simulation we started in SphereSim and put them into Houdini, another application or a fluid simulator,” continues Bloom. “We used our own file format for level sets and height fields.”

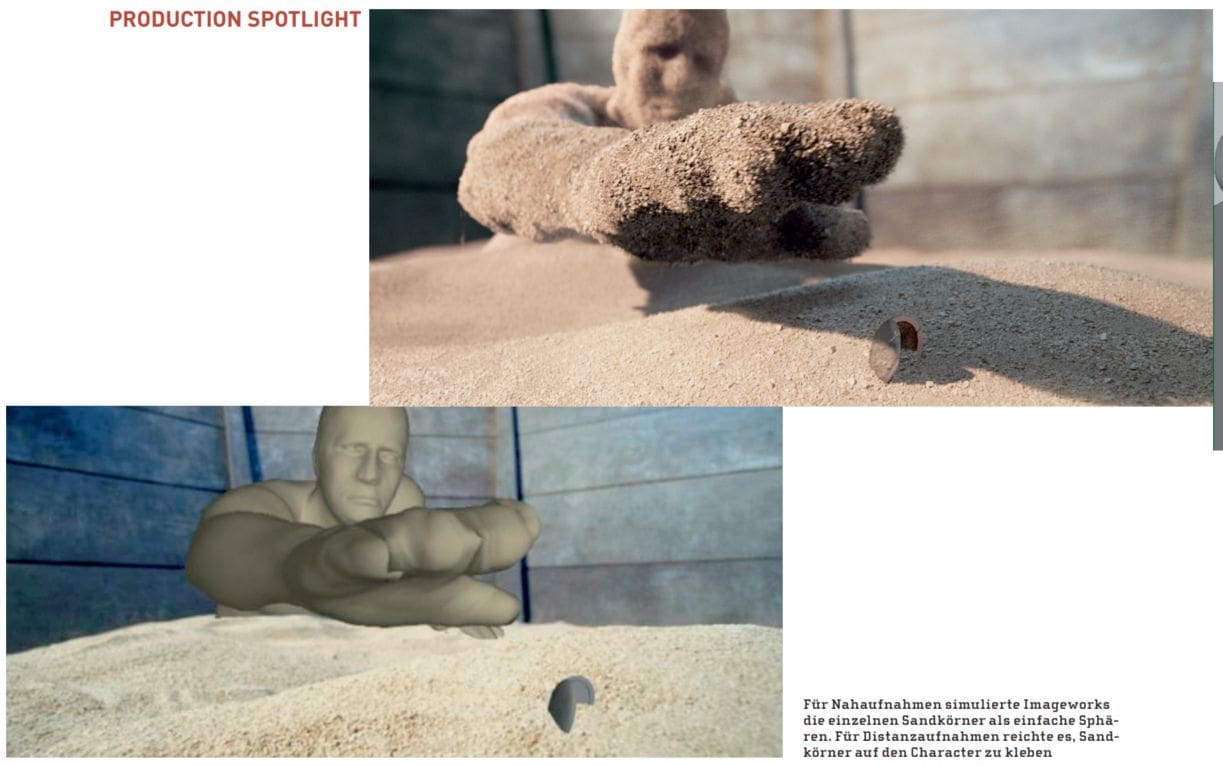

Sometimes flat, sometimes deep

The effects animators used Houdini, for example, to fill the inside of the character’s shell with particles: one inch deep for distance shots, or completely filled when close-ups required it. Then they extruded the mesh inwards using constraints to create a particle envelope for Sandman or a fully sand-filled shape or body part such as a fist. Adding gravity caused the particles to fall. Before the particles hit the ground, they joined a group that was handed over to SphereSim, which allowed them to stack up. A programme that knew how deep the particles extended and had information about velocity and overall motion extracted an implicit surface that represented the pile. A fluid simulator used the implicit surface and velocity data to create a cloud of dust billowing over the sand pile.

In the same way, the effects team used the fluid simulator to create the enormous dust clouds that appear when Sandman dissolves in the wind. When Sandman wasn’t moving, the effects animators simply pasted particles onto his CG face. To do this, they covered the polygonal mesh using a RenderMan plug-in that, depending on the camera position, instanced models of grains of sand, simple pieces of geometry with displacement, or dots. To ensure visual complexity, the effects artists could specify that certain grains of sand were always rendered in a certain way.
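Choosing a representation per grain according to the camera is a classic level-of-detail decision. A minimal Python sketch of such a rule might look like this. The distance thresholds, the three labels and the `pinned` override table are assumptions for illustration and are not taken from the Imageworks plug-in.

```python
import math

def grain_representation(grain_id, grain_pos, camera_pos, pinned=None, near=2.0, far=20.0):
    """Pick how a single grain of sand is rendered.
    pinned: optional dict {grain_id: representation} for grains that artists
    want rendered the same way regardless of camera distance."""
    if pinned and grain_id in pinned:
        return pinned[grain_id]
    distance = math.dist(grain_pos, camera_pos)
    if distance < near:
        return "model"       # fully modelled grain
    if distance < far:
        return "displaced"   # simple geometry with displacement
    return "point"           # a single dot

# Usage: grain 7 is always a full model, however far away it is.
camera = (0.0, 0.0, 0.0)
choice = grain_representation(7, (0.0, 0.0, 50.0), camera, pinned={7: "model"})
```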

To differentiate facial features, shaders could reduce the size of individual grains of sand depending on the underlying mesh. “The animation and effects departments had a lot to learn from each other,” says Digital Effects Supervisor Ken Hahn. “The body performance wasn’t much of a problem, but the facial expressions were. Animators are used to being able to see skin, but sand has its own texture.”

Curvy mass

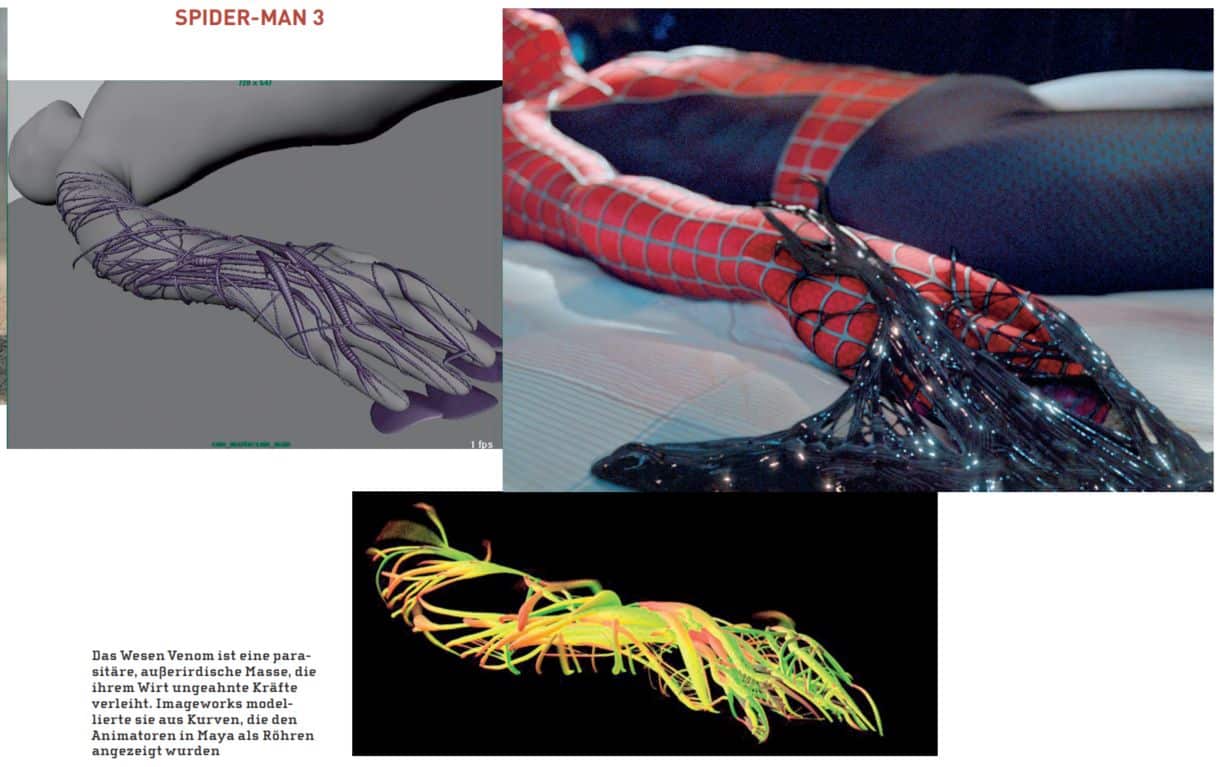

Venom called for a completely different approach again. With the exception of the final scene, this villain is always attached to a human being. This meant that the animators had to make the human characters act more brutally. Spider-Man became more aggressive; Eddie Brock fought like a wild animal. But that wasn’t quite the end of it. There was still the matter of the black mass that clings to them.

Lead character animator Koji Morihiro became the animation specialist for this creature. He used a system developed by visual effects engineer Ryan Laney. Because the black mass has no specific shape, the creature constantly changed as Morihiro and the other animators attached it to its host, let it grow and eventually bonded it with the host. It crawled onto Spider-Man, crawled over Eddie Brock’s body and eventually grew to six metres.

At its core, the black mass is a collection of curves. “We tried to keep the tool set as simple as possible, and the path through the pipeline as well,” says Laney. Each curve has a set of attributes that describe its length and shape, its surface attributes and velocity vectors. In Maya, the curves were displayed as tubes that represented the length and shape of the curve. The animators placed the curves frame by frame, modelling the creature at the same time.
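In code terms, such a curve is little more than a small record. The following Python sketch shows one possible layout. The class name, the field names and the use of a polyline instead of a true spline are assumptions for illustration, not Laney's actual data model.

```python
import math
from dataclasses import dataclass, field

@dataclass
class GooCurve:
    """One strand of the black mass (hypothetical layout)."""
    points: list       # shape: (x, y, z) points along the curve
    velocities: list   # one velocity vector per point
    tube_radius: float = 0.05                    # thickness of the preview tube
    surface: dict = field(default_factory=dict)  # surface attributes for shading

    @property
    def length(self):
        """Length of the polyline through the points."""
        return sum(math.dist(a, b) for a, b in zip(self.points, self.points[1:]))

# Usage: a single straight strand with a shading attribute.
strand = GooCurve(
    points=[(0.0, 0.0, 0.0), (3.0, 4.0, 0.0)],
    velocities=[(0.0, 0.0, 0.0), (0.0, 1.0, 0.0)],
    surface={"wetness": 0.8},
)
```

Keeping everything on the curve itself means each later stage, from tube preview to particle sampling, only needs to read this one record.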

“We built a given number of simple rigs with spline IK and more regular IK chains. Koji built a system with animation cycles,” Laney reports. For a shot that required a claw-shaped lump of black mass jumping onto an actor’s hand, Laney put together a bunch of curves to make it look like the reference graphic. Then he handed it off to Morihiro. “He put in a joint chain,” Laney says, “connected it, made it move, and that was the shot.”

For other shots, they built the joints first and then drew curves around them. “Usually you model a creature, put a rig in it and connect it,” says Laney. “This worked in exactly the same way.”

To add more detail, after the character animators placed the tubes, the effects animators inserted sticky pieces between them, using more curves and surfaces. “These are spans, membranes, objects that look like connecting pieces of geometry,” Laney explains. Sometimes they added the connecting elements by hand, sometimes they had them calculated. For example, when Spider-Man tries to rip his sticky black suit off his body, Venom screams and the mass looks chaotic. The effects animators used a procedural process for this shot, which used the proximity of neighbouring parts to add detail.

Unusual tasks for Maya’s hair system

If they wanted to give the black mass more complex movements, the animators resorted to hair systems. The studio’s own system was used as well as Maya’s hair system. “We were lucky that it worked so well,” Laney recalls. “We were able to use the hair tools to simulate the curves.”

After previewing the black mass in Maya, they processed the data in Houdini to generate polygonal geometry for rendering. First, adaptive sampling generated particles along the curves. They sampled at high density because the implicit surface generated from the particles was ultimately to become an isosurface. For the conversion, they used a “Convert Metaball” SOP (Surface Operator) in Houdini.
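The two steps described here, sampling particles along a curve and turning them into an implicit surface, can be illustrated outside Houdini with a short Python sketch. It spaces particles more tightly where the curve is thin and sums a simple compact-support falloff around each particle; the isosurface is where that sum equals a threshold. The spacing rule, the falloff function and all names are assumptions for this example and do not reproduce Houdini's metaball kernel.

```python
import math

def sample_curve(points, radii, spacing_factor=0.5):
    """Place (position, radius) particles along a polyline.
    Adaptive rule (assumed): spacing is proportional to the local radius,
    so thin parts of the curve receive more particles per unit length."""
    particles = []
    for p0, p1, r0, r1 in zip(points, points[1:], radii, radii[1:]):
        spacing = spacing_factor * min(r0, r1)
        count = max(1, math.ceil(math.dist(p0, p1) / spacing))
        for s in range(count):
            t = s / count
            position = tuple(a + t * (b - a) for a, b in zip(p0, p1))
            particles.append((position, r0 + t * (r1 - r0)))
    particles.append((tuple(points[-1]), radii[-1]))
    return particles

def field_value(x, particles):
    """Sum of per-particle falloffs; each particle influences twice its radius."""
    total = 0.0
    for position, radius in particles:
        d2 = sum((a - b) ** 2 for a, b in zip(x, position))
        support2 = (2.0 * radius) ** 2
        if d2 < support2:
            total += (1.0 - d2 / support2) ** 2
    return total

def inside_surface(x, particles, threshold=0.5):
    """True if x lies inside the isosurface field_value == threshold."""
    return field_value(x, particles) >= threshold
```

Dense sampling matters because gaps between particles show up as lumps or holes in the isosurface; a polygoniser such as marching cubes would then extract the mesh from this field.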

The effects artists ran test renders in Houdini’s VMantra before handing over the data to the lighting artists for final rendering in RenderMan. In the film, Spider-Man wins his inner battle and also defeats sand and black mass with a little help from his friends. His friends at Imageworks, that is.