
What exactly is Device Preview Plus supposed to do once the calibration itself is finished? And how far can a monitor tool stretch before it starts trying to become part of the wider image pipeline? In this interview, Heath Barber speaks about Datacolor’s current direction for SpyderPro, including display simulation, custom device profiles, C2PA content credentials, and the role of calibration in workflows that no longer end at a single screen.

Heath Barber is Senior Product Director, Consumer Solutions at Datacolor and has spent nearly 21 years with the company in a series of senior software, product and imaging roles. His previous positions include Senior Director, Software Technology; Global Market & Technology Manager, Imaging; Global Software Technology Manager; and Director of Development, Imaging Color Solutions. That long run across Datacolor’s software and imaging side makes him a useful guide for this conversation.

From monitor calibration to device simulation

DP: SpyderPro now includes Device Preview. On the box, that sounds simple enough. In practice, what does it do?

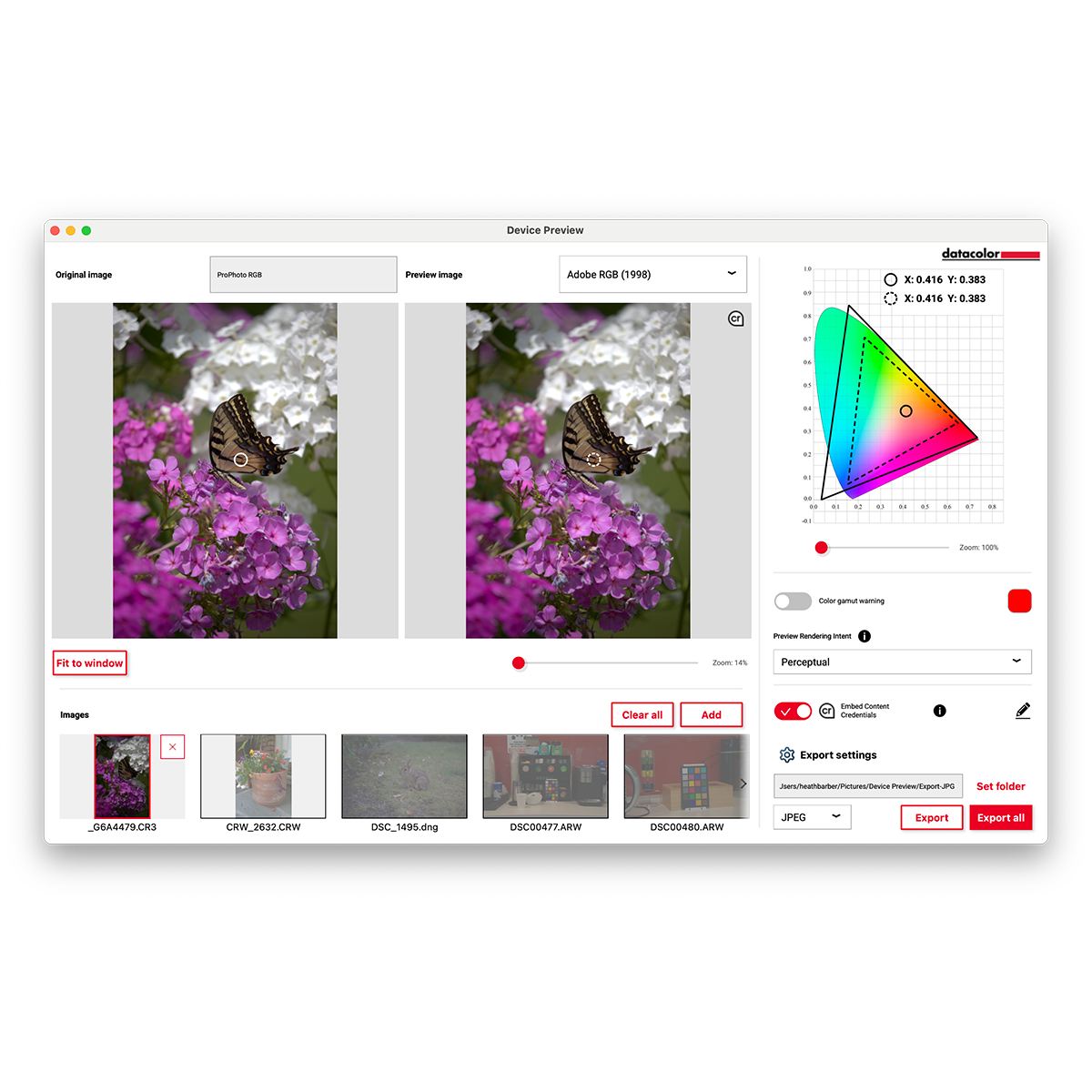

Heath Barber: Device Preview is really a way of simulating how your image or media might look on other output devices without you having to physically move the file across ten different screens and start guessing what changed where.

The classic comparison is print preview. People in print workflows have done this for years. You work on one display, but you still want a reasonable idea of how the final output will behave on paper. Device Preview takes that idea and extends it to screens. Instead of asking only, “How will this print?”, we are asking, “How will this look on a phone, on a tablet, on another class of display, or on another type of viewing device?”

That matters more than people sometimes admit. A lot of media today is first consumed on a phone. My wife is a wedding photographer, and like many photographers, one of the first things she wants to understand is how an image will look when a client opens it on a mobile device. That first impression often happens on an iPhone, an iPad or some Samsung tablet, not on a calibrated studio monitor. So what we did was build custom profiles that simulate those device classes as closely as possible on your own screen.

In other words, you are still looking at your calibrated monitor, but the software is applying a transformation that approximates how the image would appear on another device. It is not magic, and it is not the same as physically holding that exact phone in your hand, but it gives you a much more informed preview than simply hoping for the best.
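To make the idea concrete, here is a minimal sketch of this kind of screen-to-screen simulation. It is not Datacolor's implementation. It assumes each display can be described as a simple matrix/shaper profile: a 3x3 RGB-to-XYZ matrix (here the published sRGB and Display P3 D65 matrices) plus a pure power-law tone curve. The `DisplayProfile` and `simulate` names and the "unmanaged P3 phone" are hypothetical. The sketch works out what light the simulated device would emit for a given set of code values, then finds the drive values that make the calibrated monitor emit the same light, clipping whatever the monitor cannot reproduce.

```python
# Hypothetical sketch of display simulation between two screens.
# Assumption: both displays are simple matrix/shaper devices (3x3 RGB->XYZ
# matrix + power-law gamma). Real ICC profiles can be far more complex.
from dataclasses import dataclass


@dataclass
class DisplayProfile:
    name: str
    rgb_to_xyz: list  # 3x3 matrix: linear RGB -> CIE XYZ
    gamma: float      # tone response: linear = code ** gamma


def mat_vec(m, v):
    """Multiply a 3x3 matrix by a 3-vector."""
    return [sum(m[i][j] * v[j] for j in range(3)) for i in range(3)]


def mat_inv(m):
    """Invert a 3x3 matrix via the adjugate (transposed cofactors)."""
    a, b, c = m[0]
    d, e, f = m[1]
    g, h, i = m[2]
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    return [
        [(e * i - f * h) / det, (c * h - b * i) / det, (b * f - c * e) / det],
        [(f * g - d * i) / det, (a * i - c * g) / det, (c * d - a * f) / det],
        [(d * h - e * g) / det, (b * g - a * h) / det, (a * e - b * d) / det],
    ]


def simulate(rgb, device, monitor):
    """Return monitor code values (0-1) approximating how `rgb` looks on `device`."""
    # 1. Light the simulated device would emit for these code values.
    linear = [max(0.0, min(1.0, c)) ** device.gamma for c in rgb]
    xyz = mat_vec(device.rgb_to_xyz, linear)
    # 2. Monitor drive values that reproduce that light; out-of-gamut
    #    values are clipped before re-encoding with the monitor's gamma.
    mon_linear = mat_vec(mat_inv(monitor.rgb_to_xyz), xyz)
    return [max(0.0, min(1.0, c)) ** (1.0 / monitor.gamma) for c in mon_linear]


# Published D65 RGB->XYZ matrices for sRGB and Display P3.
SRGB_TO_XYZ = [
    [0.4124564, 0.3575761, 0.1804375],
    [0.2126729, 0.7151522, 0.0721750],
    [0.0193339, 0.1191920, 0.9503041],
]
P3_TO_XYZ = [
    [0.4865709, 0.2656677, 0.1982173],
    [0.2289746, 0.6917385, 0.0792869],
    [0.0000000, 0.0451134, 1.0439444],
]

if __name__ == "__main__":
    monitor = DisplayProfile("calibrated sRGB monitor", SRGB_TO_XYZ, 2.2)
    phone = DisplayProfile("hypothetical unmanaged P3 phone", P3_TO_XYZ, 2.2)
    print(simulate([0.8, 0.3, 0.2], phone, monitor))
```

Because the hypothetical phone renders untagged values on P3 primaries, saturated colours come out stronger in the simulation, which is the kind of difference a photographer would want to see before a client does. A full ICC-based pipeline would also handle white-point adaptation, lookup-table profiles and rendering intents.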

Custom targets and ICC profiles

DP: There are already quite a few screen presets in there. Can users add their own?

Heath Barber: Yes, and that is where things get interesting. If you actually have access to the screen you want to simulate, you can create your own profile for it. The underlying idea here is the ICC profile, which is basically a standardised description of how a device represents colour.

If you calibrate that display with SpyderPro, create an ICC profile for it, and place that profile where Device Preview expects it, the software can use it as another target. So you are not limited to the built-in presets forever. You can extend the system yourself if you have the hardware and you are willing to profile it properly.

That is actually a useful kind of crowdsourcing. It means users can build simulation targets for screens we may not have added officially yet. The alternative would be to wait for us to issue an update with more presets. This way, if you need a very specific display and you have access to it, you are not stuck.

In practice, this matters because not all displays fail in the same way. Some are cooler, some warmer, some over-saturate, some clip shadow detail, some compress highlights in ugly ways. An ICC profile gives you a measured description of that behaviour. Once you have that, you can simulate it much more intelligently.

DP: Can Device Preview also be useful in a more classic post-production or grading pipeline? For example, can it simulate ACES or broadcast-style viewing targets?

Heath Barber: Yes, absolutely. Some of that is already there in one form or another, starting with the familiar file types (RAW, JPG, PNG and TIFF, the last two being typical for screenshots and frame exports), and one of the goals of Device Preview Plus is to support a much broader set of output types and colour spaces than the earlier beta did.

That is important because once you move beyond stills and basic display calibration, you run straight into colour-management questions. ACES, for example, is not just a buzzword people throw around to sound serious. It is a standardised colour pipeline used in film and post to help move images consistently between cameras, applications, VFX, grading and final delivery. Broadcast standards do something similar in their own context. They define how media should behave so that a signal is interpreted predictably.

What Device Preview Plus is trying to do is give users a way to preview those kinds of transformations and output conditions within a simpler interface. It is not trying to replace a full finishing pipeline, but it does let you think more concretely about where your image is going and how it might look once it gets there.

That matters because colour correction is never done in a vacuum. You are always correcting toward some destination, even if people do not always put it that way.

DP: So in the long run, could this also help compare different source looks or make camera matching easier before you are deep into grading?

Heath Barber: That is part of the bigger ambition, yes. I would phrase it less as “this replaces grading” and more as “this gives you more context earlier in the workflow.” A lot of users do not only want calibration. They want to understand what their material is going to do when it moves from capture through editing and eventually toward delivery. So one goal of this relaunch was to build a stronger foundation for that kind of ecosystem thinking.

Part of that was hardware support, including better support for multiple monitors. Part of it was product structure, so users can start at one level and move upward without feeling that they bought into a dead end. But the big conceptual shift was image processing. That is what starts moving SpyderPro out of the little utility corner and into actual day-to-day workflow relevance.

If you can preview, compare and process images more meaningfully before the very end of the chain, that is valuable. People do not always need a giant facility pipeline to benefit from that. A photographer, editor, videographer or small studio can still gain a lot by seeing likely output behaviour earlier and more clearly.

What C2PA actually is

DP: Let’s say somebody changes an image in the pipeline and then blames someone else. How do I know who broke it?

Heath Barber: That is where C2PA comes into the conversation. C2PA is essentially a standard for content credentials, driven by Adobe and a few other companies, including Meta and Google. The simplest way to explain it is as a verified provenance layer for media, written by approved partners and products. It is a way of attaching information to an image, video or audio file so that the history of that asset can be preserved and inspected.

Now, it is important not to oversell it. This is not an unbreakable security vault. It is not meant to function like heavy-duty encryption where the whole system collapses if one person touches the file. The goal is different. The goal is to create a reliable chain of information around authorship, origin and edits, so that platforms, applications and viewers can read that history and present it meaningfully.

If somebody strips that information out, that itself can become part of the story. So the point is not only to preserve history, but also to make tampering or removal more visible. It is important to note that not everybody can supply the data: a C2PA credential has to be integrated by a verified supplier. Stripping the data away, on the other hand, is easy, as anybody who has ever had an intern delete sidecar files knows.

Adding that information, however, requires a vetted, approved software company such as Adobe or Datacolor. Quite a few other big names in our space are currently going through that verification process, by the way. We do not know whether this system will be the one that solves the problem, but as far as we can tell today, it might stick.

From our perspective, that becomes interesting because we sit so early in the image workflow. Calibration and device preview happen near the beginning of a chain. If you can begin attaching useful metadata there, and if that metadata continues downstream, then the image starts carrying some of its own history with it.

Tracking edits through the chain

DP: So if somebody in a studio changes an image and sends it on, that history could travel with the file?

Heath Barber: Yes, that is the idea, assuming the applications in the chain support reading, preserving and writing that metadata. If a file moves into Photoshop, for example, and Photoshop adds edits while preserving the content credentials, then a later viewer or platform that supports C2PA can show that there was an earlier version, then another change, then another change, and so on. That is why I often describe it more like versioning or historical tracking than some kind of hard lock. You are building a timeline of the asset.

And that is valuable because a surprising amount of confusion in media workflows is not about whether an image was changed. Of course it was changed. The question is how, where, by whom, and in which order. That is where provenance becomes useful. It turns vague suspicion into something much more inspectable.

Why that matters for media professionals is obvious. If you are a photographer, editor, colorist or VFX artist, so-called technical metadata is not boring background noise. It is often the only trail of breadcrumbs you get when something looks wrong and you need to work backwards.
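The timeline idea can be illustrated with a much simpler structure than real content credentials. The sketch below is a hypothetical hash-linked edit log in Python, not the C2PA manifest format: each record stores a SHA-256 hash of the asset and the hash of the previous record, so altering or removing an earlier step breaks verification. Real C2PA manifests are also cryptographically signed with certificates and embedded in or bound to the file; without that signature anyone could simply rebuild a chain like this one, which is why adding credentials is limited to vetted suppliers. The `add_step` and `verify` names are illustrative only.

```python
# Hypothetical, simplified tamper-evident edit history.
# Assumption: this is NOT the C2PA manifest format; real credentials are
# signed and bound to the asset. Only the hash-chaining idea is shown here.
import hashlib
import json


def _digest(record: dict) -> str:
    """Stable SHA-256 over a record's fields (keys sorted for determinism)."""
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode()).hexdigest()


def add_step(history: list, actor: str, action: str, asset_bytes: bytes) -> list:
    """Return a new history with one record linked to the previous record's hash."""
    record = {
        "actor": actor,
        "action": action,
        "asset_sha256": hashlib.sha256(asset_bytes).hexdigest(),
        "prev": history[-1]["hash"] if history else None,
    }
    record["hash"] = _digest(record)  # hash covers every field except itself
    return history + [record]


def verify(history: list) -> bool:
    """Check that each record is unaltered and still points at its predecessor."""
    prev = None
    for record in history:
        body = {k: v for k, v in record.items() if k != "hash"}
        if body["prev"] != prev or _digest(body) != record["hash"]:
            return False
        prev = record["hash"]
    return True


if __name__ == "__main__":
    history = add_step([], "capture", "camera original", b"raw pixels")
    history = add_step(history, "studio", "colour grade", b"graded pixels")
    print(verify(history))  # True
    history[0]["actor"] = "someone else"  # quietly rewrite an earlier step
    print(verify(history))  # False
```

Note that hashing alone only makes changes visible; it says nothing about who made them. That attribution is what the signing and supplier vetting described above add.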

DP: Could C2PA become a foundation for more reliable metadata in professional media workflows?

Heath Barber: Yes, I think it could. Right now, the public conversation around C2PA is often dominated by AI detection, content authenticity and platform labeling. Those are real issues, but for media professionals there is another side to it, and that side is workflow visibility.

A lot of tools and viewers today do not really surface the kind of information a colorist, editor or photographer would care about most. To a general audience, that data may just look like clutter. To us, it can be gold. It can tell you what settings were used, what was embedded, what transformations were applied, and how the file evolved.

That is why I think there is a major opportunity here that goes beyond headlines about “real versus fake.” For working professionals, the value may be that you can finally see what happened to an asset in a way that is consistent and transportable.

Camera metadata, edit history and proof

DP: I assume camera makers already contribute a lot of that information.

Heath Barber: Yes, they do. A camera can capture all kinds of useful metadata: model, lens, focal length, exposure settings, stop values, capture conditions and more. That information is already part of the professional world. The big question is not whether metadata exists. It does. The question is whether that metadata survives and whether it remains visible and trustworthy as the file moves through the pipeline.

If you can preserve camera-side information, then add edit-side information, then preserve output and transformation information, suddenly you have something much more powerful than isolated metadata fragments. You have a chain.

DP: So, for a professional production, that could eventually help prove what is captured, what is altered, and what is generated?

Heath Barber: That is certainly one of the ambitions around this whole space. It is tricky because media workflows are messy, and the real world is rarely as neat as standards documents would like it to be. But yes, the direction is toward being able to say: this is where the asset originated, this is what was done to it, and this is what remains attributable, with every step traceable. That is useful not only for protecting creators, but also for protecting the workflow itself. When something goes wrong, being able to inspect the chain matters.

DP: Ideally, you would like every screen in the workflow to have a little Spyder hanging from it.

Heath Barber: Sure, but the larger point is that I want to push us more into software. Spyder, as a hardware product, is well established. People know what a Spyder does, and the current platform supports most of the display technology our customers are working with today, as well as what we see coming over the next several years. That is exactly what frees us to put our energy into software, because that is where we can deliver the most value the fastest: workflow integration, format support, analysis tools, environmental adaptation and content credentials. Those are software problems, and software is how we solve them best.

So Spyder remains the foundation, but the surrounding software layer is where we can build more capability and more relevance. Things like device simulation, image processing, and metadata let us move beyond “put sensor on screen, click calibrate, done.” That workflow still matters, but it does not have to be the whole story. And honestly, we are not done innovating on display calibration or colour-critical display analysis.

DP: You recently updated the Spyder software. What is next?

Heath Barber: There is a lot in the pipeline, but the part I can talk about today is the ecosystem side. We are working closely with key vendors across both video and photography to make sure the experience is as seamless as possible from capture through delivery. The goal is to give creators the critical tools they need to do their best work and to genuinely improve their productivity, not to add another piece of software they have to babysit.

Imaging is a team sport. People move between cameras, software, displays, tablets, phones, edit systems, grading tools, review tools, and delivery platforms all the time. So if you want to be relevant, you cannot act like calibration lives on its own little island. It has to connect into the broader ecosystem. That does not mean every user needs a huge enterprise pipeline. It does mean the tools should feel aware of how people actually work.

The market has also changed. A lot of users today are not interested in spending endless hours tweaking every parameter inside complicated full-featured suites that are harder to use than the editing and DCC tools they are already trying to keep up with. They want tools that do most of the job well, clearly and efficiently. That is not laziness. That is reality. So part of the job is figuring out where in the workflow we can add value without making the tool feel like homework.

Light-meter integration and the viewing environment

DP: What about the light meter side? Is that becoming a bigger part of the system too?

Heath Barber: Yes, very much so. That is one of the more exciting areas for us. Built-in ambient light sensing is useful up to a point, but it is broad and limited. A dedicated light meter is much more accurate, and once you have that, you can begin describing the viewing environment in a much more meaningful way.

Right now, we already think in terms of multiple zones around the viewing setup: the light behind the screen, the light facing the viewer, and what is happening around the workspace. The current system uses that information in a relatively constrained way, but the longer-term plan is to expand that into a much richer map of the environment, because your monitor does not exist in a vacuum either. The room changes what you perceive. Ambient light, direction, intensity and colour temperature all influence how you see the image.

So if you can measure the environment more accurately, you can calibrate more intelligently and also preview output conditions more realistically. In other words, better environmental data improves both the monitor correction side and the device preview side.

From three zones to room mapping

DP: So eventually, instead of just one or two measurements, you could map the room.

Heath Barber: Exactly. Think of it less as a single reading and more as building a spatial picture of the environment. If you know what the room is doing around the display, then you can make much smarter decisions.

And that leads to some interesting possibilities. If you have multiple measuring points, or eventually multiple devices monitoring the environment, you can start blending those readings into a more complete model. That could matter for studio setups, mobile work or any workflow where lighting conditions are inconsistent.

Once you start seeing it that way, calibration becomes less about a single screen measurement and more about understanding the conditions in which that screen is actually being used.

DP: So the real shift here is that calibration is no longer treated as an isolated task.

Heath Barber: Exactly. That is really the core of it. Calibration still matters, obviously. It is the foundation. But once that foundation is in place, the next logical step is to ask what else can be built on top of it. That is where device simulation, image processing, metadata, content credentials and environmental measurement all come in. We are trying to move from a narrow utility toward something that participates more fully in the image workflow.

That does not mean it suddenly becomes a complete post-production platform. It does mean we are trying to make it more useful to the way people actually create, review and deliver media today.