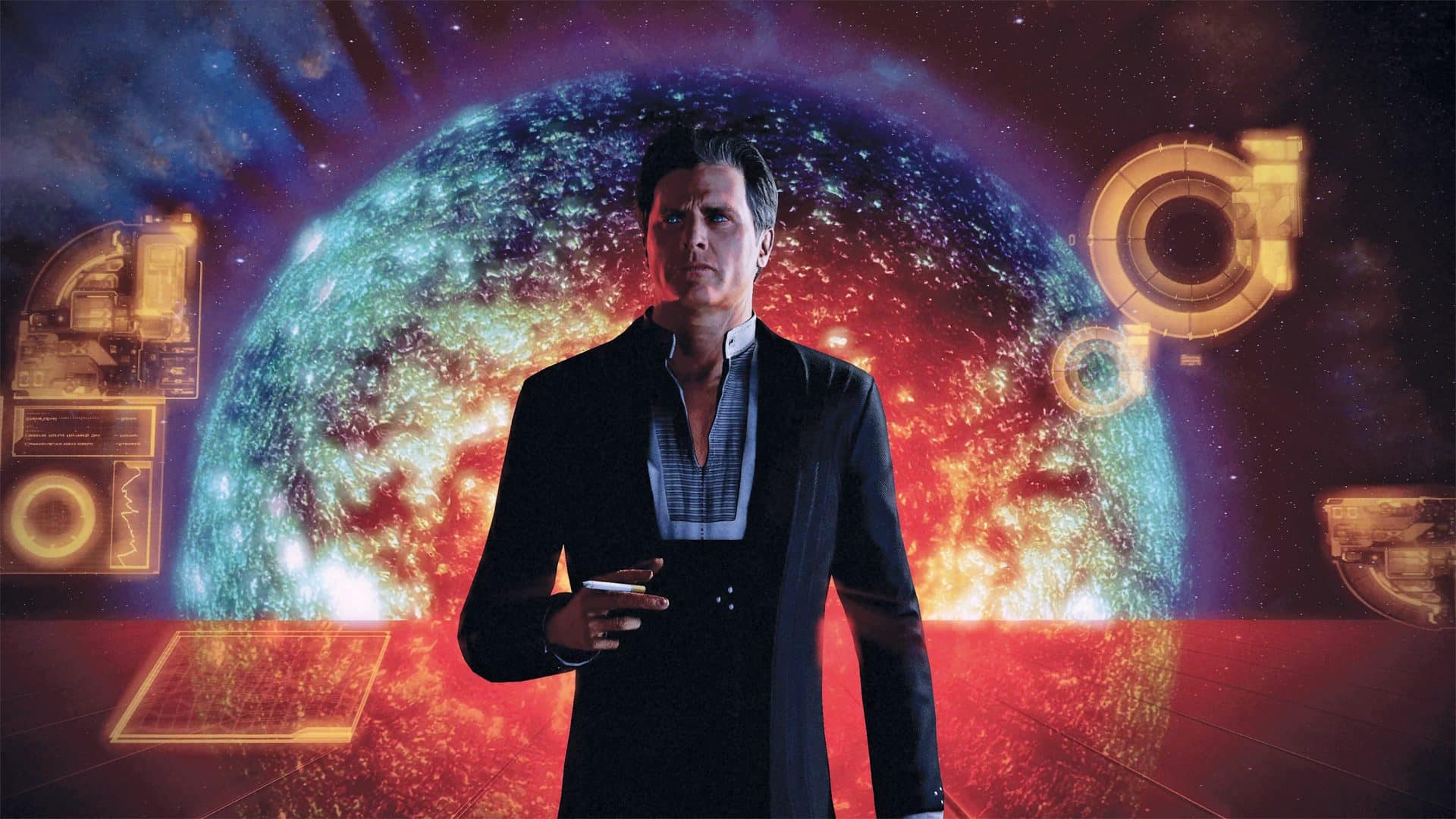

And who would have thought we would get the chance to talk to Kevin Meek, lead environment artist for Anthem, who served as Character and Environment Director on the Legendary Edition. He is an alumnus of such varied titles as “Mechwarrior Online”, “Transverse”, “Duke Nukem Forever” and now the “Mass Effect” universe.

»Tribal knowledge is key – over-save and over-document, and you’ll be okay when someone says 15 years later that your amazing game is worth remastering.«

Kevin Meek, Environment and Character Director, BioWare

DP: To get a rough idea of the project: How many people were involved?

Kevin Meek: The “Mass Effect Legendary Edition” (MELE) was largely driven by BioWare Edmonton itself, and there we have a fairly small but quite senior group of people: around a dozen developers, each with at least 10 years of experience. The vast majority had already worked on the original trilogy. Then there was a handful of people like myself who came at it as fans and had not worked on the original trilogy, but almost everyone has probably 20 years of experience working in games.

With a smaller team, it almost feels like an indie team – just a whole bunch of people wearing a bunch of different hats. They’ve all been through the process before, so they knew what they were doing and got down to business really fast.

That being said, we also had a lot of external help – we were able to push some of the work to outsource studios and find specific experts in certain fields. For example, Blind Squirrel (blindsquirrelentertainment.com) was a programming partner for us, because they have lots of experience with porting from other remasters and remakes – specifically with Unreal Engine 3, which we are using. They know all the intricacies of taking something up to DirectX 11 and getting it onto the next generation of consoles.

That’s a huge amount of work for us regular developers at BioWare. We would have been able to get our way through it, but if you can find people who are experts in that field, you can bring them in and partner with them. There’s also a handful of Unreal 3 experts from Europe called Abstraction (www.abstraction.games). They came onto the project for a couple of months and really added a lot. But everything went through the Edmonton studio.

DP: With the “Mass Effect” trilogy being made in Unreal 3, did you have newer technologies from Anthem, for example, to add?

Kevin Meek: The last time we, as a company, used Unreal 3 – or Unreal at all – was with “Mass Effect 3”. After that, “Dragon Age Inquisition” and then “Andromeda” and “Anthem” switched to Frostbite. It’s been almost ten years since any of us touched Unreal … except for private projects.

DP: With 10 years since the assets were last active, did you switch to newer versions of your toolset, or did you use legacy versions on newer hardware?

Kevin Meek: Well, it’s a decade-long trilogy, so the tools in ME1 weren’t even the same as in ME2 and ME3. Sometimes we needed a very specific version of a program, for example ZBrush, just to get a file to open so we could export it into something current.

But we also didn’t want to dust off and maintain three different sets of tools with their respective underlying infrastructure and engine code, so one of the first things we did was unify them. Generally speaking, that meant going up to the most recent version, so we moved to a more recent version of Unreal 3 – more recent than “Mass Effect 3”, actually. For ME1, that was a pretty substantial upgrade; for ME3, it was a handful of newer tools and a few iterations.

For our underlying tools – like mesh exporters and so on – the latest version in which everything worked was 3ds Max 2018, so we unified everything on that. There were even a handful of tools that required Max 2018, because we couldn’t get the old ones to compile.

For the rest of the pipeline, we used whatever the original developers used. We opened up the assets, and there were mesh files, ZBrush files, Mudbox files and Photoshop files … the development ran from 2006-ish through to 2015. That’s a long time in the games industry, spanning a lot of tools and different pipelines. And on top of that, people were still figuring out how to use certain technologies – how to make and incorporate normal maps and high-poly/low-poly baking, for example.

DP: Do you have any tips for getting older stuff to work again?

Kevin Meek: Yes – anything that was documented at all was such a lifesaver. If you ever work on something that has even the slightest chance of being remade, save copies of the installation files for the programs you use. Especially near the end of a project, get everyone to keep the organisation of assets clean. It is sadly quite common to modify the texture directly and never go back into the Photoshop file. That might get the game done, but it makes the work for the remaster or remake a LOT harder, when you have to become an archaeologist and scroll through final_2_2.xyz.

DP: And how about technologies that weren’t possible when the games came out?

Kevin Meek: There are definitely effects that you expect from the current generation of games: some ambient occlusion, some sort of (at least faked) subsurface scattering, volumetrics …

Especially volumetrics, which came in the latest version of Unreal 3. It adds so much life and depth to a scene. We found that in ME1 and even in ME2, there wasn’t a lot of movement or dynamism in the levels. That was largely due to technical constraints: either the techniques hadn’t been invented yet, or they were very expensive in terms of performance. Think translucency, sorting, and having half your screen filled with transparent pixels. That’s still expensive, mind you – you can really catch yourself out with particle effects creating overdraw issues.

In MELE, we couldn’t go crazy, but consoles are ten times more powerful, and we have a much better understanding of what we want games to look like. So we could add that sense of life and dynamism. And since we brought all three games onto the same engine version, we could add certain types of tech into ME1 and 2.

There are other features we had to write by hand – our version of ambient occlusion, for instance, is custom. And the wrap lighting and the depth of field are either custom or ported back by hand from how you do it in modern engines. Thankfully, with Unreal 3, there are troves of knowledge online these days on how to fake things like subsurface scattering – we drew on well-documented graphics bibles, use cases and tutorials. That’s one of the really big perks of working in Unreal – you just have to google it, and there’s the answer, sometimes in 10 different ways.

DP: When we look at the textures: How did you upscale all of those, and what tools did you use?

Kevin Meek: We used a number of things. We knew going in that current AI tools would only be step one for us, so we did that first – before anyone touched anything, we got that done. One programmer and I analysed a bunch of AI upscaling (“upres”) methods, and I was a bit of a sceptic before those tests. It’s like that “Zoom and Enhance” meme.

We did a lot of tests, from textures, UI and FX all the way to environment and character art. We had internal tools, and we had some out-of-the-box solutions. I was impressed with the results, but you have to test a lot and pick and choose, specifically for games.

We exported (and reimported) large batches of Targa files straight from the game files, so any last-minute changes were included – like I said, when you are close to finishing, artists don’t always use the source PSD files, but work in the engine.

Basically, we got everything out of the game and ran the AI upres on that. At this point we had to pay close attention to the texture types, like normal maps and stacked textures. Every texture has a red, a green, a blue and sometimes an alpha channel, and those channels usually don’t know about each other. So if you upres naively, you suddenly get a lot of bleeding – because during the resolution change the channels are no longer treated independently, and the values are suddenly mathematically incorrect.
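To make the “mathematically incorrect” part concrete for normal maps: each texel encodes a unit-length direction vector in RGB, and interpolating two such vectors channel by channel, as any colour-oriented resize does, yields a vector shorter than one. The sketch below assumes the common encoding that maps components from [-1, 1] into [0, 1] and uses a simple average as a stand-in for whatever resampling the upscaler performs; the helper names are illustrative, not BioWare’s tooling.

```python
import math

def decode(rgb):
    """Map an encoded normal-map texel (components in [0, 1]) back to [-1, 1]."""
    return tuple(2.0 * c - 1.0 for c in rgb)

def encode(n):
    """Map a normal with components in [-1, 1] into the [0, 1] texel range."""
    return tuple((c + 1.0) / 2.0 for c in n)

def length(v):
    return math.sqrt(sum(c * c for c in v))

def normalize(v):
    l = length(v)
    return tuple(c / l for c in v)

def midpoint(a, b):
    """Stand-in for interpolation during upscaling: average two texels per channel."""
    return tuple((x + y) / 2.0 for x, y in zip(a, b))

# Two neighbouring texels tilted 45 degrees to either side of +Z.
s = math.sqrt(0.5)
left = encode((-s, 0.0, s))
right = encode((s, 0.0, s))

# Naive per-channel interpolation, as if RGB were just colour.
naive = decode(midpoint(left, right))
print(round(length(naive), 3))  # shorter than 1, so no longer a valid normal

# Renormalizing each interpolated texel restores a unit vector.
fixed = normalize(naive)
print(round(length(fixed), 3))
```

The renormalization step is the standard remedy for normal maps specifically; packed mask textures need a different treatment, as described next.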

Same thing with the stacked textures – basically a bunch of masks, for a character’s armour for example, where the different masks are packed into whatever channel is free in the texture. You might have the information about how shiny the armour is in the red channel, and in the green channel the information about which colours can go where. Or vice versa.

If you process that as one image, you get a lot of garbage – so we had to split those out into four different textures. Now we have four PNGs; we upres those, recombine them into a colour map and convert it back to Targa. It’s a bit of a multi-step process.
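The split, upres and recombine step can be sketched roughly as follows. This is a minimal illustration under stated assumptions: images are plain nested lists instead of PNG/Targa files, the channel layout (gloss in red, tint mask in green) is hypothetical, and `upscale_2x_nearest` is a trivial placeholder for whichever AI upres tool is actually plugged in.

```python
from typing import Callable, List

Channel = List[List[float]]  # one greyscale mask: rows of pixel values
Image = List[List[tuple]]    # rows of (R, G, B, A) tuples

def split_channels(img: Image) -> List[Channel]:
    """Split a packed RGBA texture into four independent greyscale masks."""
    return [[[px[c] for px in row] for row in img] for c in range(4)]

def merge_channels(channels: List[Channel]) -> Image:
    """Recombine four greyscale masks into one packed RGBA texture."""
    h, w = len(channels[0]), len(channels[0][0])
    return [[tuple(ch[y][x] for ch in channels) for x in range(w)] for y in range(h)]

def upscale_2x_nearest(ch: Channel) -> Channel:
    """Placeholder upscaler: doubles each dimension by repeating pixels."""
    out = []
    for row in ch:
        doubled = [v for v in row for _ in range(2)]
        out.append(doubled)
        out.append(list(doubled))
    return out

def upres_packed(img: Image, upscale: Callable[[Channel], Channel]) -> Image:
    """Run the upscaler on each mask separately so no channel can bleed into another."""
    return merge_channels([upscale(ch) for ch in split_channels(img)])

# A 1x2 packed texture: R = gloss, G = tint mask, B and A unused (hypothetical layout).
packed = [[(0.9, 0.0, 0.0, 1.0), (0.1, 1.0, 0.0, 1.0)]]
result = upres_packed(packed, upscale_2x_nearest)
print(len(result), len(result[0]))
print(result[0][0], result[0][3])
```

With a nearest-neighbour placeholder the separation changes nothing visible; the point is the structure, which matters once the upscaler is a model that looks at all channels of an image at once.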

With that approach, we ended up with well north of 30,000 textures for the characters alone. But it worked really well and bumped up the picture quality of the game. And we only upscaled one step from the source art – so a 128-pixel texture went up to 256, or a 1,024 to 2,048.
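“One step” means each side doubles, so every upscaled texture holds four times as many texels. The quick arithmetic below assumes square textures and uncompressed 8-bit RGBA purely for illustration; shipped games normally use block-compressed formats and mip chains, so real memory figures differ.

```python
def one_step_up(width: int, height: int) -> tuple:
    """Resolution one power-of-two step above the source: each side doubles."""
    return width * 2, height * 2

def rgba8_bytes(width: int, height: int) -> int:
    """Uncompressed size at 4 bytes per texel (8-bit R, G, B, A), ignoring mips."""
    return width * height * 4

for side in (128, 1024):
    new_w, new_h = one_step_up(side, side)
    growth = (new_w * new_h) // (side * side)
    mib = rgba8_bytes(new_w, new_h) / (1024 * 1024)
    print(f"{side}x{side} -> {new_w}x{new_h}: {growth}x the texels, {mib:g} MiB uncompressed")
```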

DP: What was your pipeline for that heap of assets?

Kevin Meek: We had a really good tools programmer – and we tracked everything. The batch tool would put the texture somewhere, split it apart, and create an Excel sheet of tens of thousands of lines: “here’s the texture name, here is its new name (times four), here is what type of texture it used to be, and here is where it goes now”. As long as you keep that Excel sheet saved, all the tools can reference it.
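A tracking sheet like that can be approximated with a plain CSV manifest that every later tool reads. This is a sketch, not the actual BioWare tool: CSV via Python’s standard `csv` module stands in for the Excel sheet, and the column names and per-channel suffixes are hypothetical.

```python
import csv
import io

# Hypothetical suffixes for the four files a packed texture is split into.
CHANNEL_SUFFIXES = ("_r", "_g", "_b", "_a")
FIELDS = ["source", "split_name", "type", "destination"]

def manifest_rows(texture_name: str, texture_type: str, destination: str):
    """One row per split file: original name, new name, original type, destination."""
    for suffix in CHANNEL_SUFFIXES:
        yield {
            "source": texture_name,
            "split_name": f"{texture_name}{suffix}.png",
            "type": texture_type,
            "destination": destination,
        }

def write_manifest(entries, stream):
    """Write the header plus four rows for every (name, type, destination) entry."""
    writer = csv.DictWriter(stream, fieldnames=FIELDS)
    writer.writeheader()
    for name, ttype, dest in entries:
        writer.writerows(manifest_rows(name, ttype, dest))

def lookup(stream, source):
    """Any later tool can recover every split file for a texture from the manifest."""
    stream.seek(0)
    return [row["split_name"] for row in csv.DictReader(stream) if row["source"] == source]

buf = io.StringIO()
write_manifest([("armor_mask_01", "stacked", "Characters/Armor")], buf)
print(lookup(buf, "armor_mask_01"))
```

Keeping the manifest as the single source of truth is what makes a full rerun, or a revert, safe: the recombine step never has to guess where a file came from.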

And we’d update the tools as we found things going wrong, and then we’d end up having to run the whole process again. A number of times we just reverted everything back to that “Safe Revision 01”. We babysat the AI upres and only ran a few thousand textures through it at once, just in case it crashed or anything went wrong. Largely it was just a bank of computers working on that while we slept or went home for the weekend.
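Running a long job in small, checkpointed batches is one common way to get that safety. The sketch below is illustrative only: `flaky_upres` is a hypothetical upscaler that fails on one texture, and the in-memory set stands in for whatever persisted record a real pipeline would keep.

```python
from typing import Callable, Iterable, List, Set

def batches(items: List[str], size: int) -> Iterable[List[str]]:
    """Yield consecutive slices of at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]

def run_in_batches(items: List[str], size: int,
                   process: Callable[[str], None],
                   done: Set[str],
                   save_checkpoint: Callable[[Set[str]], None] = lambda _: None) -> List[str]:
    """Run `process` over items in small batches, skipping items already done.

    `save_checkpoint` is called after every batch, so a crash loses at most one
    batch of work. Returns the items that failed in this run so they can be retried.
    """
    failed = []
    for batch in batches(items, size):
        for name in batch:
            if name in done:
                continue
            try:
                process(name)
            except Exception:
                failed.append(name)
            else:
                done.add(name)
        save_checkpoint(done)
    return failed

textures = [f"tex_{i:05d}" for i in range(10)]
completed: Set[str] = set()
saved_sizes: List[int] = []

def flaky_upres(name: str) -> None:
    """Hypothetical upscaler that crashes on one specific texture."""
    if name == "tex_00007":
        raise RuntimeError("upscaler crashed")

first_failures = run_in_batches(textures, 3, flaky_upres, completed,
                                lambda d: saved_sizes.append(len(d)))
# After fixing the tool, a rerun only touches what is still missing.
second_failures = run_in_batches(textures, 3, lambda name: None, completed)
print(first_failures, second_failures, len(completed))
```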

DP: And, on the creative side, where is the tipping point where you need an artist?

Kevin Meek: Well, coming out of the AI upres, we basically had the game up and running, and then the artists started coming in with their trained eyes. Take environment art, for example: I would assign an artist to a level, and they would own that level, play through it a number of times and create a list of assets they judged not good enough. Those assets got ranked by priority, based on how bad each one was versus how often it was used, or whether it was a hero piece, and then you just worked through your list of ugliest assets. When you play through the level again, the standard is raised – you go through that cycle a couple of times, and it gets good.
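The ranking described above, badness weighed against how often an asset is seen plus extra weight for hero pieces, could be expressed as a simple score. The 1 to 5 rating scale, the multiplication and the `hero_bonus` value are all assumptions made for illustration; the interview does not say how the priorities were actually computed.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class AssetNote:
    name: str
    badness: int        # artist's rating, 1 (minor) to 5 (very ugly); hypothetical scale
    usage_count: int    # how many times the asset is placed in the level
    hero: bool = False  # prominent, story-relevant piece

def priority(note: AssetNote, hero_bonus: int = 10) -> int:
    """Weigh how bad an asset looks against how often the player sees it."""
    score = note.badness * note.usage_count
    if note.hero:
        score += note.badness * hero_bonus
    return score

def worklist(notes: List[AssetNote]) -> List[str]:
    """Ugliest, most visible assets first."""
    return [n.name for n in sorted(notes, key=priority, reverse=True)]

notes = [
    AssetNote("wall_panel_a", badness=2, usage_count=40),
    AssetNote("console_hero", badness=4, usage_count=1, hero=True),
    AssetNote("pipe_trim", badness=5, usage_count=3),
]
print(worklist(notes))
```

A mildly ugly wall panel repeated forty times outranks a very ugly trim piece used three times, which matches the intuition of fixing what the player sees most.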

But while you iteratively improve things until nothing catches your eye as bad, you still have to remember: a remaster is very art-focused, but at the end of the day, “Mass Effect” is a story-driven game, with the characters and their interactions in the foreground. Therefore, our job as artists was to make sure that you are never distracted by the art in a negative way.

The AI upres gives us a great base to work from, but it doesn’t make bad art good. If something is noisy or boring or shifted, you just have to recreate that texture. And then there is everything else we layered on top. AI upres is fine, but it doesn’t do anything to the meshes, the shaders or the lighting. All of that, the broad-brush work on the levels that makes them feel more like a current-generation game, has to be done by hand. There is no AI solution for relighting a level. Maybe one day there will be …

DP: Aside from the textures, did you redo the rigs or the animations for finer details?

Kevin Meek: (laughs) Our animation pipeline did not hold up well after 15 years. Often it had just been sitting in Perforce on the server, gathering server dust. So we found that if we redid an animation and reimported it into the game, it took a long time just to get going in the first place. Secondly, there were a lot of ripple effects of bugs that came from touching the system at all. That could be anything from underlying Unreal issues, since we had upgraded from whatever version it shipped on to the current version. There were also some mathematical changes, which happened a lot with lighting: some piece of averaging in how light affects a character changed, and now the system reacts differently. These might be little things that you don’t even pick up until you’ve worked with it for some time.

With the animation, we couldn’t really do anything about that without devoting a huge amount of time to it and setting up the system again. What we ended up doing was changing how the underlying system works with the animation – like the animation trees. In a video game, you don’t animate every single thing the character could possibly do – you animate actions independently and blend them together through an animation tree. Turn left, look up, aim at: these are animations that get blended, and there were a lot of issues in the tree for how things could blend together. That led to a lot of cross-eyed moments, which was almost funny – especially when Shepard and the team go into cover and you see their faces: they would pull a gun and their eyes would roll up into their heads. That kind of stuff, we were able to change and clean up.
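As a rough picture of what an animation tree does, the sketch below nests blend nodes over individual clips and evaluates them into one pose. It is deliberately simplified and hypothetical: poses are single-axis joint angles in degrees and blending is linear, whereas engines such as Unreal blend full bone transforms (typically quaternion rotations) and add masks and additive layers.

```python
from dataclasses import dataclass
from typing import Dict, Union

Pose = Dict[str, float]  # joint name -> rotation angle in degrees (single axis, simplified)

@dataclass
class Clip:
    """A leaf of the tree: one authored animation, sampled here as a single pose."""
    pose: Pose

    def evaluate(self) -> Pose:
        return self.pose

@dataclass
class Blend:
    """An inner node that linearly blends its two children."""
    a: "Node"
    b: "Node"
    weight: float  # 0.0 = all of a, 1.0 = all of b

    def evaluate(self) -> Pose:
        pa, pb = self.a.evaluate(), self.b.evaluate()
        w = self.weight
        return {joint: (1 - w) * pa[joint] + w * pb[joint] for joint in pa}

Node = Union[Clip, Blend]

# Hypothetical clips: idle, look up and aim, authored independently.
idle = Clip({"spine": 0.0, "head_pitch": 0.0, "eye_pitch": 0.0})
look_up = Clip({"spine": 0.0, "head_pitch": 30.0, "eye_pitch": 20.0})
aim = Clip({"spine": 15.0, "head_pitch": 0.0, "eye_pitch": 0.0})

# The tree combines them at runtime instead of authoring every combination.
tree = Blend(Blend(idle, look_up, 0.5), aim, 0.5)
print(tree.evaluate())
```

Every joint in the result depends on every node above it, which hints at why a wrong weight or rule anywhere in a tree can surface as something as specific as misaligned eyes.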

The rigs stayed the same, but we had to reskin every single piece of armour and clothing we touched, because we upressed the meshes themselves. There are more polygons for smoother shoulders, and details that might just have been texture before are now polygons, cut-outs and extrusions which catch the light. So our tech department reskinned the verts to the skeleton: these new polygons need to be connected to the bones to move with them.
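“Connecting vertices to bones” usually means assigning each vertex weights for one or more bones; at runtime the vertex follows the weighted blend of those bones’ transforms (linear blend skinning). The sketch assumes 2D positions and rotation-only bones for brevity, and the bone names are hypothetical; it is not the studio’s skinning setup.

```python
import math
from typing import Callable, Dict, Tuple

Vec2 = Tuple[float, float]

def bone_transform(angle_deg: float, pivot: Vec2) -> Callable[[Vec2], Vec2]:
    """Rotation about a pivot point, returned as a function on 2D points."""
    a = math.radians(angle_deg)
    c, s = math.cos(a), math.sin(a)
    px, py = pivot

    def apply(v: Vec2) -> Vec2:
        x, y = v[0] - px, v[1] - py
        return (px + c * x - s * y, py + s * x + c * y)

    return apply

def skin_vertex(v: Vec2, weights: Dict[str, float],
                bones: Dict[str, Callable[[Vec2], Vec2]]) -> Vec2:
    """Linear blend skinning: weighted sum of each bone's transform of v.

    Weights are normalized here, so they need not sum to 1, but must not be empty.
    """
    total = sum(weights.values())
    x = y = 0.0
    for bone, w in weights.items():
        tx, ty = bones[bone](v)
        x += (w / total) * tx
        y += (w / total) * ty
    return (x, y)

bones = {
    "upper_arm": bone_transform(0.0, (0.0, 0.0)),  # stays put
    "forearm": bone_transform(90.0, (1.0, 0.0)),   # bends 90 degrees at the elbow
}
# A newly added vertex near the elbow, shared equally between both bones.
elbow_vertex = (1.5, 0.0)
blended = skin_vertex(elbow_vertex, {"upper_arm": 0.5, "forearm": 0.5}, bones)
print(blended)
```

A vertex left without weights would have nothing to follow, which is why every added polygon on an upressed mesh has to go through this step.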

DP: With your background in environment art: How did you treat the planets, some of which were quite barren in ME1?

Kevin Meek: The uncharted worlds were the most barren. They are all based on Unreal terrain rather than static meshes. That gives you a few semi-procedural options, but still works well within the tools that existed in Unreal 3.

What we did was use something like a scatter mesh system, instead of just a green texture on the ground. Now we still have a green texture on the ground, but it is upressed, looks better and has a better material. And finally, it is actually projected onto the ground instead of having its UVs stretched everywhere. On top of that, there is an actual mesh, like blades of grass or rocks.

It’s basically a system you tell: “on this type of texture, given this angle allowance, how dense do you want these rocks to be”. Every time you load the level, it is different. But nothing is so large that it needs collision, so we stayed away from trees. Those would have created a whole swarm of ripple effects, because you could get stuck on a tree that isn’t always there, since it is generated. So we kept everything low-profile enough that you can walk around without collision.
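The rule “on this texture, within this angle allowance, at this density” maps naturally onto a small data structure plus a placement loop. The sketch below is a hypothetical illustration, not Unreal 3’s actual scatter system: terrain is reduced to sample points carrying a layer name and slope, and density is treated as a per-sample placement probability.

```python
import random
from dataclasses import dataclass
from typing import List, Optional, Tuple

@dataclass
class TerrainSample:
    x: float
    y: float
    layer: str        # which terrain texture is painted here
    slope_deg: float  # angle of the surface from horizontal

@dataclass
class ScatterRule:
    mesh: str             # small, non-colliding decoration only (no trees)
    layer: str
    max_slope_deg: float  # the "angle allowance"
    density: float        # probability of placing an instance per sample, 0..1

def scatter(samples: List[TerrainSample], rules: List[ScatterRule],
            seed: Optional[int] = None) -> List[Tuple[str, float, float]]:
    """Place decoration meshes wherever a rule's layer and slope limit match.

    With seed=None the layout differs on every load; a fixed seed makes it
    reproducible, which is handy for testing.
    """
    rng = random.Random(seed)
    placed = []
    for s in samples:
        for rule in rules:
            if s.layer == rule.layer and s.slope_deg <= rule.max_slope_deg:
                if rng.random() < rule.density:
                    placed.append((rule.mesh, s.x, s.y))
    return placed

# A 10x10 patch: grass on the left, rock on the right, steep ground in the top half.
grid = [TerrainSample(x, y, "grass" if x < 5 else "rock", 10.0 if y < 5 else 50.0)
        for x in range(10) for y in range(10)]
rules = [ScatterRule("grass_blades", "grass", 30.0, 0.8),
         ScatterRule("small_rocks", "rock", 40.0, 0.3)]
placed = scatter(grid, rules, seed=1)
print(len(placed))
```

Because nothing placed this way has collision, the random layout can change from load to load without affecting where the player can walk.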

We upressed the terrain mesh itself, and the materials on the terrain are now triplanar shaders. In the original, it was just a top-down projection. A polygon on a cliff would have its UVs stretched all the way down the cliff, because the projection doesn’t know about angles. But a triplanar projection shader projects the texture from X, Y and Z and then blends the three projections where they meet. So now your cliff actually looks like a cliff instead of melting rock. That’s not new tech, but it is much more expensive, and they obviously couldn’t have afforded it back in the day. But it makes a world of difference when you’re driving around those levels.
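The difference between top-down and triplanar projection can be shown on a single vertical cliff face. In this sketch, assuming Z is up (as in Unreal) and using a hypothetical striped test texture, the top-down lookup returns the same value at every height (the stretching), while triplanar sampling weights the three axis projections by the surface normal and keeps the stripes intact.

```python
import math
from typing import Callable, Tuple

Vec3 = Tuple[float, float, float]

def triplanar_weights(normal: Vec3, sharpness: float = 4.0) -> Vec3:
    """Contribution of each axis projection, derived from the surface normal.

    Raising to `sharpness` narrows the zone where projections blend.
    """
    wx, wy, wz = (abs(c) ** sharpness for c in normal)
    total = wx + wy + wz
    return (wx / total, wy / total, wz / total)

def triplanar_sample(pos: Vec3, normal: Vec3,
                     texture: Callable[[float, float], float]) -> float:
    """Sample the texture three times, once per world plane, and blend."""
    x, y, z = pos
    wx, wy, wz = triplanar_weights(normal)
    return (wx * texture(y, z)    # projection along X
            + wy * texture(x, z)  # projection along Y
            + wz * texture(x, y)) # projection along Z (the old top-down case)

def stripes(u: float, v: float) -> float:
    """Hypothetical test texture: alternating bands every unit along v."""
    return float(math.floor(v) % 2)

cliff_normal = (1.0, 0.0, 0.0)  # a vertical face looking along +X
points = [(0.0, 0.0, h) for h in (0.5, 1.5, 2.5, 3.5)]

top_down = [stripes(x, y) for (x, y, z) in points]            # ignores height
triplanar = [triplanar_sample(p, cliff_normal, stripes) for p in points]
print(top_down, triplanar)
```

The three texture lookups per pixel, instead of one, are where the extra cost mentioned above comes from.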

DP: When you remastered all three parts: Did you use assets from ME3 in ME1, since they were already better quality?

Kevin Meek: Yeah, actually there were a lot of assets that are shared across the entire trilogy. As they finished work on “Mass Effect 1”, “Mass Effect 2” would start with that library of assets as a base, and a number of those made it all the way up into “Mass Effect 3”.

Certain ones got improved in “Mass Effect 3”, and we were able to use those as a starting point, especially with characters and also placeable props. Your job as an artist would be to ask: “Does this exist in ‘Mass Effect 3’? And does it look better in one of the other games?” That applied to every single character armour and face, with the morph head system, wrinkle maps, all of your character customization options and more. All of it came from whatever the best version was, which was generally ME3, but not always. Then we improved that and brought it across the trilogy. But crates were unchanged. Some of the crates, which are so prevalent in a cover shooter, were the exact same crates that had existed in “Mass Effect 1”: kind of rough, but still the exact same asset in ME3.

DP: What are you telling your colleagues working on upcoming games, now that you have finished remastering a decade-long project?

Kevin Meek: I think for any game we’re working on, it is good to have naming conventions and documentation – and to get the artists to understand the best practices for them. The original developers of the “Mass Effect” trilogy did a really good job with those. We can go back into the original Perforce and search for files.

On the other hand, there is no documentation whatsoever of how to rebuild the lighting in the levels, which is baked static lighting. Which layers do you show? And in what order? There’s just not a single page somewhere in some internal wiki that says: okay, here’s how to do it, guys.

It was all tribal knowledge, so that’s the main thing: always assume that you will be off the project in a week and that your tribal knowledge is going to leave with you. I think if you take that approach to everything and just over-save and over-document, then you’ll be in an okay state 15 years later, when someone says your amazing game is worth remastering.