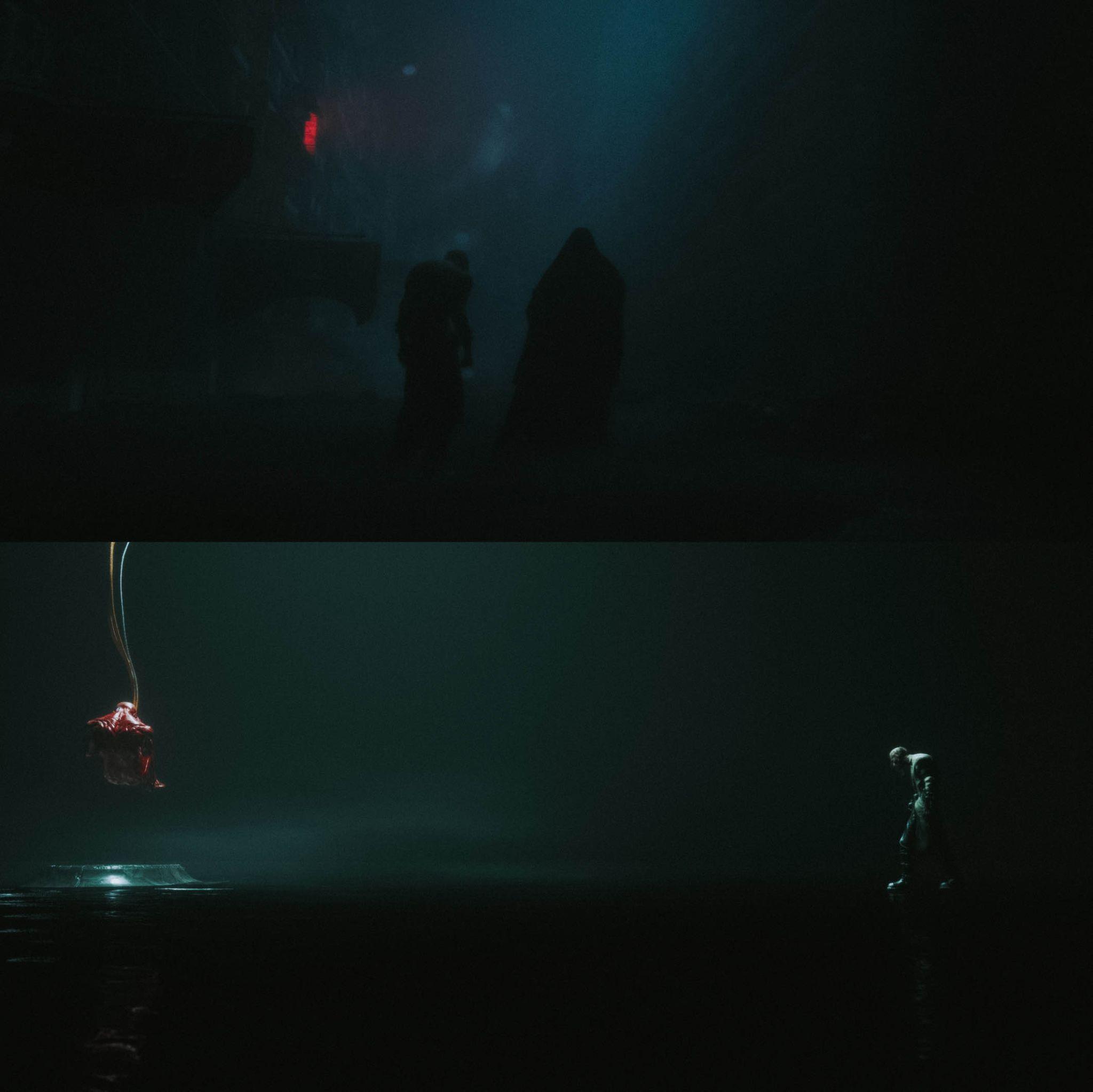

Making a film with some friends, with no studio, no mocap stage, and no budget, usually sounds like a dead end. For real-time director Dimitri Vallein, it became the premise for New Specimen, a four-minute sci-fi short built entirely in Unreal Engine 5 using iPhones, off-the-shelf software, and a lot of patience. Every shot, from facial capture and markerless body motion to full cloth simulation, was produced solo in his living room. The result isn’t a tech demo but a polished short film that tests how far a single artist can push cinematic storytelling with a real-time toolset.

DP: Hi! Can you introduce yourself?

Dimitri Vallein: Hi everyone! I’m Dimitri, a real-time 3D director and technologist. Before filmmaking, I built projects around immersive 3D technologies, creating Augmented Reality experiences that reached 500M+ views and developing video games that hit #1 on the French App Store. A few years ago, I turned to Unreal Engine with a clear goal: to direct the sci-fi films I’d always dreamed of seeing.

Since then, I’ve written, directed, and self-produced two UE5 shorts. The first, The Last Star (2022), screened at Dances With Films LA at the TCL Chinese Theatre and was selected for the BMVAs (Best Animation) alongside The Weeknd, Pharrell Williams, and Muse. The second, New Specimen (2024), was a semi-finalist at the Rhode Island International Film Festival and later led to an invitation to join a panel with Ron Dyens (Oscar-winning producer of Flow) to discuss real-time 3D, the exact process I’ll break down here.

DP: So, how did this all start?

Dimitri Vallein: Everything began with a single render from my friend Qtn.cls: a lone figure in a night field. If you want to see more of his work, check out his Instagram, ArtStation, and YouTube.

I felt instantly connected to it. I could feel the silence, the cold breath, the mystery. In that moment, the world of New Specimen started to take shape in my mind. I called my childhood friend Vincent Guyon and laid out the vision for where this could go. We bounced ideas back and forth until the story snapped into shape. Together, we drafted a tight 4-minute outline, and I jumped straight into production.

DP: How did you create the main character? And what exactly is it?

Dimitri Vallein: One key point for me was to create a unique, distinct look for the main character. Most of the time, when you watch Unreal Engine short films, you can spot MetaHumans immediately, and it often pulls you out of the story. The problem is usually that people use MetaHuman’s default look, which still feels quite uncanny and lacks the personality and style you want when creating something distinctive. That’s why I chose a 3DScanStore head scan: to avoid this trap and go for something unique.

I picked the scan like I’d cast an actor. I was looking for a distinctive face—something that doesn’t look too much like a human, but at the same time doesn’t look too much like a fantasy creature. I wanted the viewer to see a bit of themselves in this creature, so they could feel more emotion toward it and more easily relate to what’s happening to him.

Then I “MetaHumanized” it while keeping the surface details from the 3DScanStore scan, by adapting the scan’s original UVs to the new MetaHuman UVs. This step mattered because I still wanted to use MetaHuman’s animation features, especially the facial rig, which were crucial to my process.

Facial Animation

DP: The character’s face has a few unique shapes – how did you carry them over to the rig? And the eyes are very weird – can you talk us through their setup and creative process? Did you try normal eyes first, and then go for full black?

Dimitri Vallein: For the rig, the whole goal was to keep MetaHuman’s facial system, but preserve the scan’s personality. I MetaHumanized the head so I could use the MH rig, then I protected the “non-standard” features by keeping the scan’s surface language and not smoothing it into a generic MetaHuman face.

For the eyes, it’s the first thing I did after the MH process: I changed the eye material to something more unique and distinctive. I’ve always loved experimenting with new materials in my scenes, something you wouldn’t expect at first, but that creates visually interesting results. This is the same process I used to create the 3D environment for The Last Star.

I probably tried dozens and dozens of different materials before finding the right one. I had the idea from the beginning: eyes that look entirely metallic, while still catching reflections from the lights to give him that sparkle of a soul. It was a deliberate story choice.

One of the biggest challenges was getting believable facial expressions, and, above all, making them land emotionally for the viewer (curiosity → apprehension → anxiety → fear → desperation). I didn’t have an experienced actor around me, so I did it myself.

I used my iPhone with Epic’s Live Link Face app to capture takes for each sequence, processed the data in Unreal Engine, and synced it with the body animation in Sequencer. I added light facial keyframes to push certain expressions or correct specific muscle movements, but about 90% of the result came straight from the capture.

DP: Considering the movements, wouldn’t that be easy on any mocap stage?

Dimitri Vallein: I didn’t have the budget to rent a motion-capture stage with suits, hire performers, and bring in a tech team for calibration and cleanup, so I explored markerless video-to-motion options and chose Move.AI for its accuracy. I set up four iPhones on tripods around a small capture area, ran the standard calibration, and recorded all the performances myself in my living room.

The best part of this process was the iteration speed: if a take didn’t work, I could re-record the animation immediately. I exported FBX files from Move.AI, retargeted them in Unreal with the IK Retargeter, and did a light cleanup in Sequencer (foot contact, hand jitter, etc.) until it felt right on-screen with the lighting and environment.
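For readers curious what “light cleanup” of hand jitter can look like in practice, here is a minimal sketch of one common, generic technique: a centered moving average over a single animation curve sampled once per frame. It is not Move.AI’s or Unreal’s cleanup tooling, nor necessarily what was done on New Specimen; the function `smooth_channel` and its `locked` parameter (frames to leave untouched, standing in for planted-foot frames) are hypothetical names chosen for illustration.

```python
from typing import Iterable, List, Sequence


def smooth_channel(values: Sequence[float], window: int = 5,
                   locked: Iterable[int] = ()) -> List[float]:
    """Centered moving average over one animation curve (one value per frame).

    Hypothetical helper: `values` could be a single rotation channel of a
    hand bone; `locked` lists frame indices that keep their captured value,
    e.g. frames where a foot must stay planted.
    """
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd integer")
    half = window // 2
    keep = set(locked)
    n = len(values)
    out: List[float] = []
    for i in range(n):
        if i in keep:
            # Protected frame: copy the raw capture value unchanged.
            out.append(float(values[i]))
            continue
        # Shrink the window at the clip edges instead of padding.
        lo = max(0, i - half)
        hi = min(n, i + half + 1)
        segment = values[lo:hi]
        out.append(sum(segment) / len(segment))
    return out


if __name__ == "__main__":
    # A hypothetical single-frame spike on an otherwise still channel.
    jittery = [0.0, 0.0, 4.0, 0.0, 0.0]
    print(smooth_channel(jittery, window=3))
```

With a three-frame window, the 4.0 spike is spread to about 1.33 across three frames. A wider window smooths more but also softens intentional fast motion, which is one reason this kind of cleanup is usually kept light and applied only to the channels that need it.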

DP: How did Move.AI work for you? Will you use it again?

Dimitri Vallein: Move.AI was fantastic to use because it gave me the one thing I was looking for as a solo director: iteration speed. I could record, review in context, and redo takes immediately. It’s not always perfect; some parts of the body still need attention and cleanup, but the trade-off was worth it in that context. It also gave me the opportunity to try different acting choices and decide which emotion I wanted to evoke.

Yes, I’ll use it again, especially for the animatic phase when I’m alone and iterating quickly. But for a more ambitious project, I’m aiming to do performance capture with real actors and direct them on the spot. That’s what I’m planning for my next project. The interesting part about cinema is that it’s a collective art form, and as my projects grow, I want to get closer to that. That’s also where all the fun and memories are made.

DP: So, to make it easy, you used a flowing hospital gown?

Dimitri Vallein: One thing I always aim for in 3D is to keep every pixel in motion. CGI gets static and fake pretty fast. So I like to keep everything moving on screen, even subtly; the result feels more believable to the human eye. That’s why I decided to take the hard road for the clothes and simulate them for my characters in every shot.

DP: I assume the new ML-Cloth wasn’t available in the version of Unreal that you used?

Dimitri Vallein: No, not yet. Instead, Jon Sanchez (who worked with me on The Last Star) designed a hospital-style gown in Marvelous Designer. He also created a torn, distressed variant with rips along the hem and sleeves. I love this choice because it lets the outfit evolve with the story. Check out his Instagram and ArtStation to see more of his work!

Once the garments were designed and optimised, the next challenge was simulation. I exported the MetaHuman animations and brought them into Marvelous Designer.

DP: Considering that this was simulated cloth over a motion-captured rig, how many iterations did you need to make it look believable?

Dimitri Vallein: Some simulations worked after just a few iterations (walks, look-arounds). Others were bigger challenges; the hardest were probably sprints, shoulder rolls, and sudden stops. They needed troubleshooting: collision thickness tweaks, pattern adjustments, higher sub-steps, and occasional re-caches until everything fell into place. I then exported the cloth back to Unreal as Alembic (Ogawa) and integrated it into each sequence.

DP: Soooo, let’s talk about the locations – where did those come from?

Dimitri Vallein: The environment and lighting were created by Qtn.cls. I had already worked with him on Vortex, and we’ve always been closely aligned on the aesthetics we like. It was great to work with him again.

DP: Which stumbling blocks did you encounter during setup?

Dimitri Vallein: It’s one thing to create cool visuals and animate them in a fast-cut or epic way, or set them to strong music and a voice-over that takes up all the space, or even use masked 3D characters to avoid showing the eyes and/or mouth.

It’s another thing to tell a real story, with multiple 3D characters interacting with each other, without any masks or tricks to hide facial features, or music to carry the story. So the biggest challenge, and also the most interesting one, was confronting the digital human problem head-on, with everything that implies. I had to learn a lot of different things to make it work visually, and I’m happy with how the emotions, facial expressions, and micro-muscle movements turned out. I think this is what most 3D artists avoid, but if we break through it, the world is ours.

DP: Looking forward, with the recent updates to Unreal, what are you hoping to use in your next project, and what, in your opinion, is already perfect as it is?

Dimitri Vallein: I’m really looking for more AI-driven tools built directly into Unreal Engine. The tool I’m most eager to have is an AI that understands my characters and their intentions and, based on the environment, the story, and good prompting, can generate procedural animations. It would make creating animatics much easier and be a real revolution in my process.

I’m also interested in how NVIDIA’s toolsets keep evolving, like Audio2Face, which we can already use directly with MetaHumans and which is super cool. I’m looking forward to progress that might even let you prompt the emotion you want to generate on a MetaHuman face based on the audio you give it as input.

Finally, as a side note, I’m using Isaac Sim and Isaac Lab more and more to create and train my own robots. As a 3D aficionado, I see these two tools as an extension of my work into the real world. You could even imagine cute creatures, develop a movie around them, train them directly in Isaac Sim/Lab, and then have a direct path to bring your story and IP into the real world!

DP: Did you take it to festivals?

Dimitri Vallein: Yes, strategically. New Specimen screened at Animation First in New York, where I presented the film to the audience and joined a panel with Ron Dyens (Oscar-winning producer of Flow), Boris Labbé, and Leslie Lynch to discuss real-time workflows and the use of AI in animation. I’m happy New Specimen was a semi-finalist at Flickers’ Rhode Island International Film Festival, and it also opened the door to the VIEW Conference in Turin. I’m also currently preparing a VR version of New Specimen to keep pushing immersive experiences and storytelling, so you might see me at immersive film festivals in the coming months and years too!

DP: And what is the next project you are working on?

Dimitri Vallein: My next project is called The Day I Met You. It should come out this year, in 2026. It’s my most ambitious project to date: 20 minutes of pure 3D. I believe more and more that Hollywood has become repetitive, pessimistic, and mechanical, addicted to the same franchises. They’ve stopped developing bold new ideas that push the boundaries of our imagination. There’s an opportunity here for the new generation of filmmakers to create innovative films by harnessing optimism, new technologies, and our deepest scientific ideas. So this next project will focus on a hopeful future, one where scientific discovery moves civilisation forward.