For those who don’t know the tool: Spark Story sits in early previs and story workshops, pairing a browser build step with a mobile virtual production app, plus workflows that touch Blender and Unreal Engine.

NAB gets the hands-on bit

Spark Story is set for a public preview at NAB Show 2026, with an interactive booth demo that lets attendees create shots live and move between a browser session and a mobile virtual production app. The preview is scheduled on the show floor from April 19 through April 22 in Las Vegas (Booth N3148). Lightcraft calls Spark Story the first piece of a broader Spark platform that is still in development, with the NAB preview focused on rapid virtual-shot creation, script linkage, and a browser-to-mobile workflow. A beta notification signup page for the broader Spark platform is already live, which suggests a formal beta program is planned, though no date has been given.

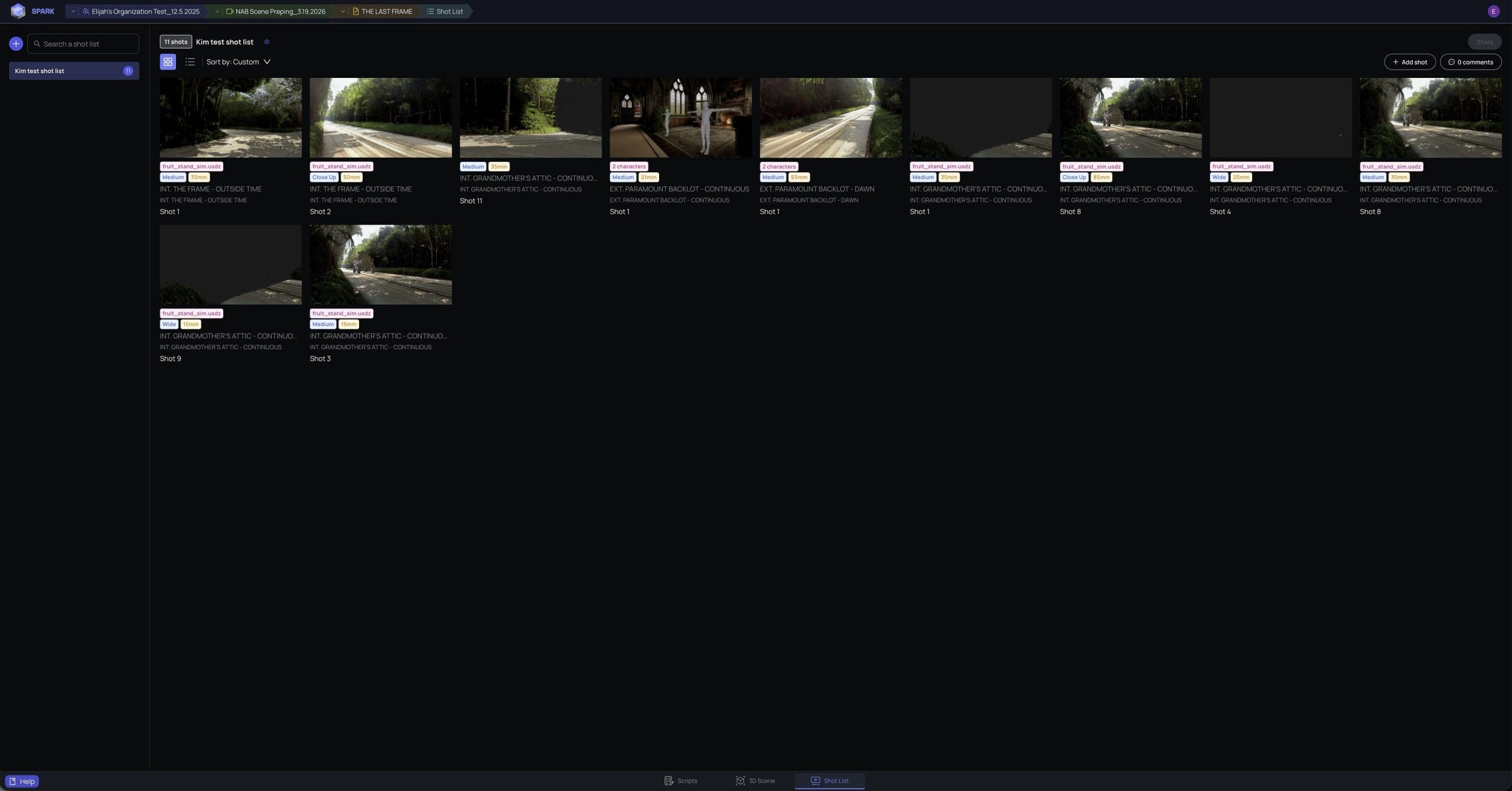

The pitch is simple: start workshopping a film on day one with the team, using rapid virtual shot building instead of waiting for later stages to see whether scenes and beats land. The preview demo uses scanned environments (Splats) and prompted environments as part of setting a scene, plus the ability to block and capture camera shots from a phone.

Browser to phone, with script in the loop

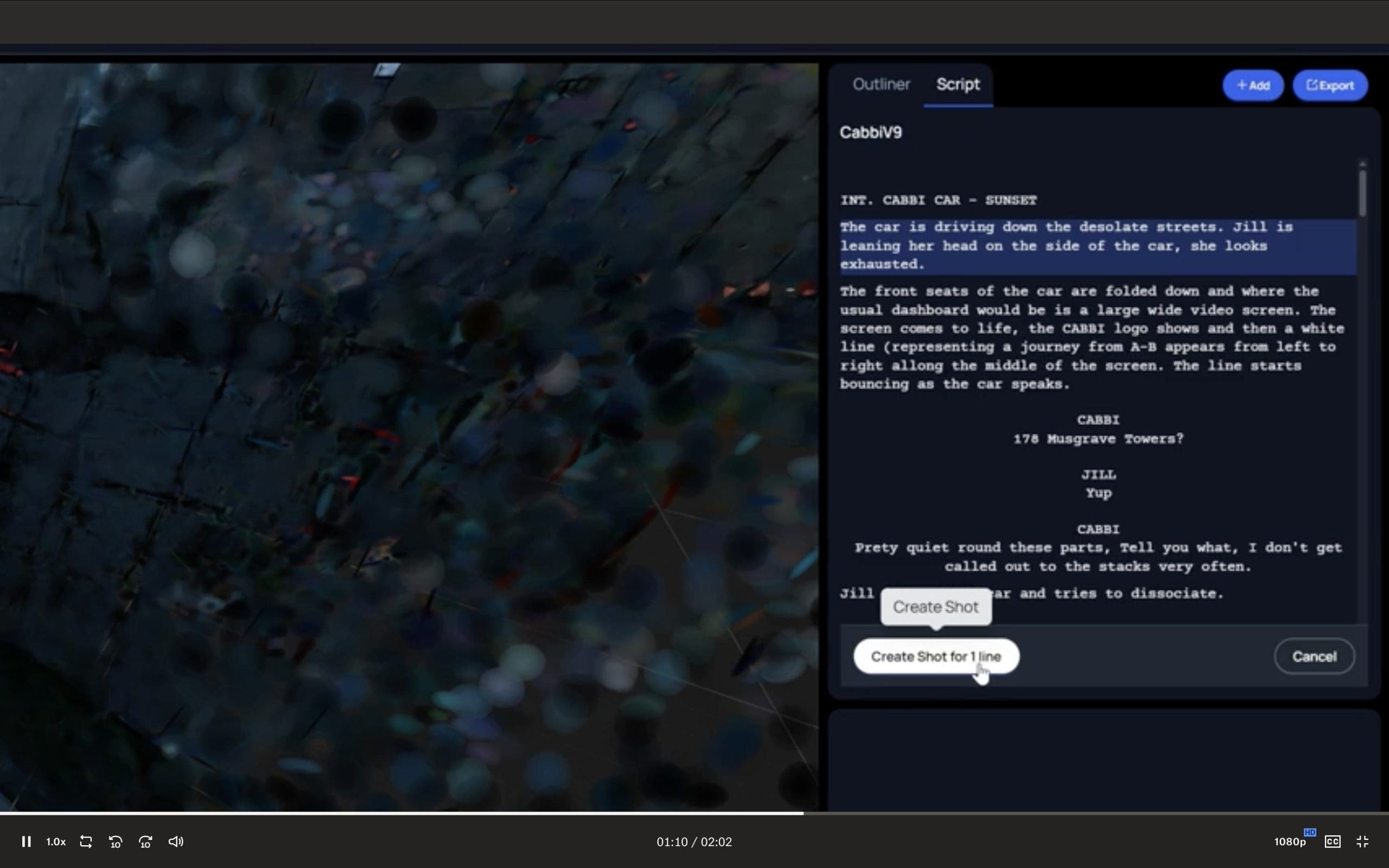

The preview description frames Spark Story as a real-time workflow that links a browser tool with a mobile app so teams can collaborate while building scenes and placing cameras. Script linkage sits near the center of that workflow, with an emphasis on connecting shots back to a screenplay, and on turning camera placement in a 3D scene into entries that feed a shot list tied to settings and framing.

A separate feature preview calls out collaborative screenplay editing and breakdown, aimed at real-time collaboration and development from partial ideas into a full screenplay. That matters less for the final file format and more for how teams keep intent, coverage, and revisions connected while the visuals evolve.

If you have lived through the classic version of previs, where shots float around in a folder tree while the script keeps changing in a different tool, this is clearly trying to make that disconnect go away. The claim is that a team can move much faster through early planning, pitching, and shoot preparation.

Environments: scans, prompts, and Gaussians

The preview highlights three main buckets of scene content: scanned environments, prompted environments, and more traditional 3D content. It also calls out support for Gaussian Splats and imported USD assets in the same workspace.

For Gaussian Splats, the underlying technique is 3D Gaussian Splatting, a real-time radiance field rendering approach with an official, publicly available reference implementation. For USD, the relevant target is OpenUSD, positioned as a platform for building and exchanging 3D scenes at scale.

The interesting bit here is not that any one of those elements exists, but that the preview bundles them into one collaborative shot-building flow. If the tool can keep scale, orientation, and camera intent consistent while teams mix splats with USD assets, that would remove a lot of glue work that currently happens in side scripts and one-off conversion steps.

Camera intent and virtual production capture

The preview copy states that Spark Story builds scenes around realistic digital environments, physically correct motion, and camera and lens models intended to keep early decisions consistent through shoot days. That is a marketing claim, but it is also a promise: camera behaviour and motion should translate from early planning into on-set execution without a full redo.

On the capture side, the preview positions a mobile app as the place where shots get captured from anywhere, with an integrated iOS experience described as push-button simple. On the platform side, that points straight at iOS; there is no word on Android yet.

The practical production question is what data comes out the other end and how it plays with the rest of a pipeline. The preview text focuses on workflow and collaboration rather than interchange details beyond imported USD assets. So, at press time, there is no confirmed list of export formats, tracking data handoff details, or round-trip guarantees for specific DCCs in the NAB preview description. There are, however, importers for Fab.com Megascans, Unreal, Autoshot, Blender, Nuke, and After Effects. No word on LightWave.

What is confirmed on the ecosystem side

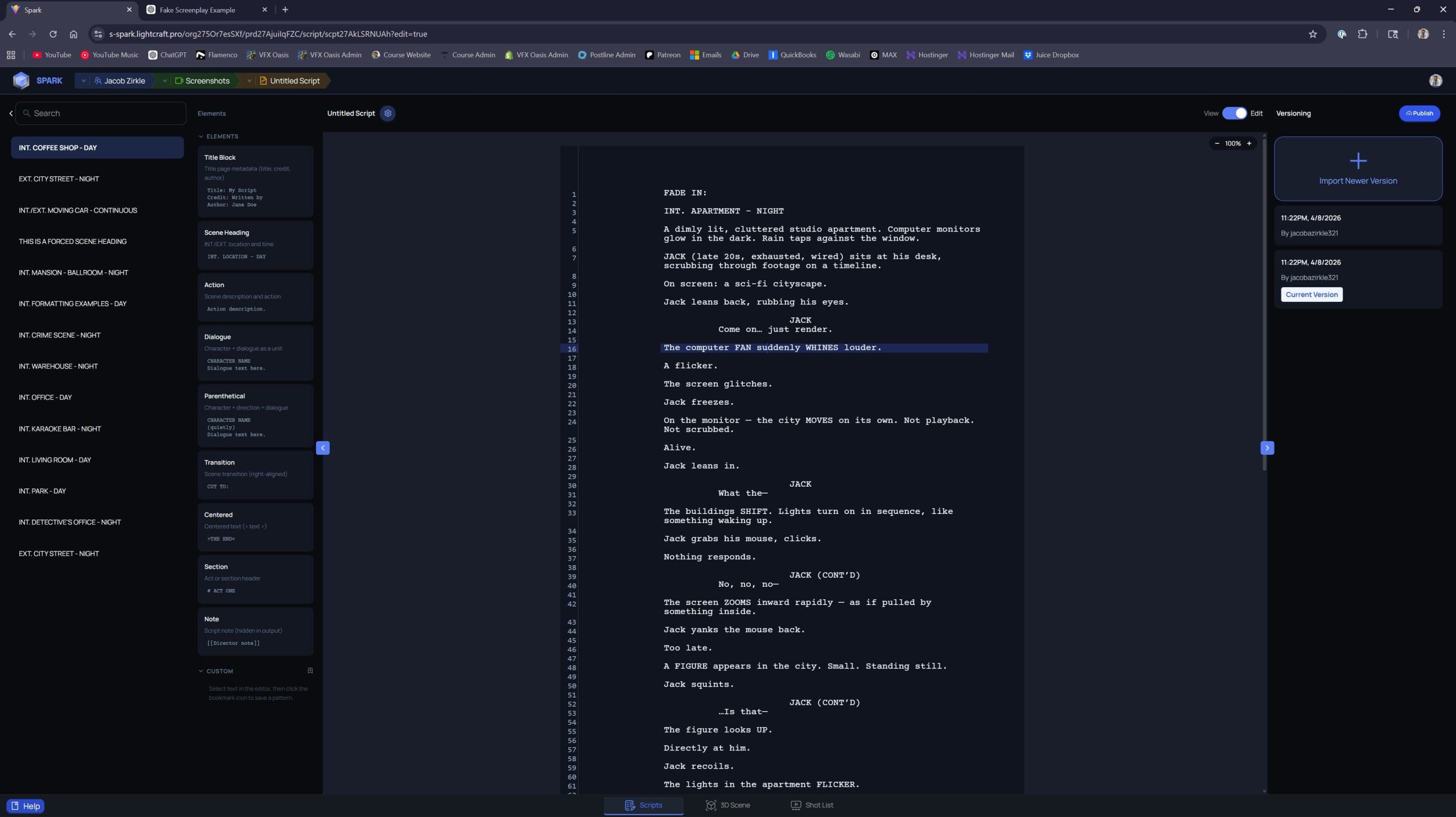

Outside the NAB preview messaging, the public documentation site already carries Spark Story sections alongside broader tooling docs, including workflow pages that reference Blender and Unreal-related processes and USD troubleshooting topics. That is not a full product spec, but it does confirm that Spark Story documentation exists publicly and that USD sits inside the expected workflow surface area.

That matters because it suggests this is not a closed demo-only concept. There is already a documentation structure, including tutorials and debugging notes, which is usually where pipeline teams look first when they try to validate a tool against real production constraints.

What to test before you bet a schedule on it

Rapid collaborative previs sounds great right up until a production needs repeatability, versioning, and predictable handoffs. If you plan to evaluate Spark Story, put it through the boring tests early: multiple artists editing the same sequence, script changes mid-session (three times over), asset relinking, and exporting or round-tripping anything your downstream tools require.

New tools and innovations should always be tested before use in production, preferably on something disposable, like a two-day internal short that still hits the same technical constraints as a real show.