
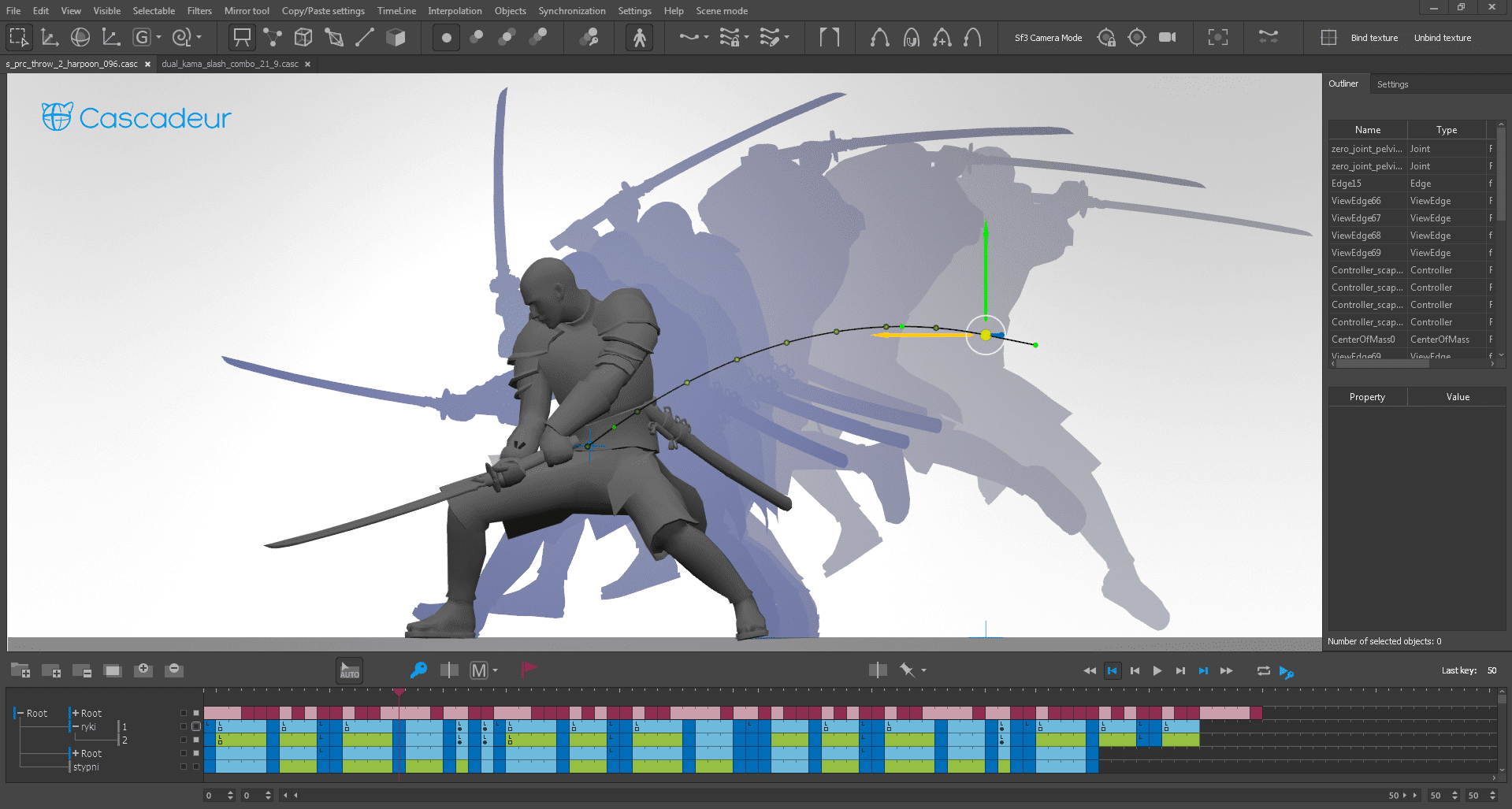

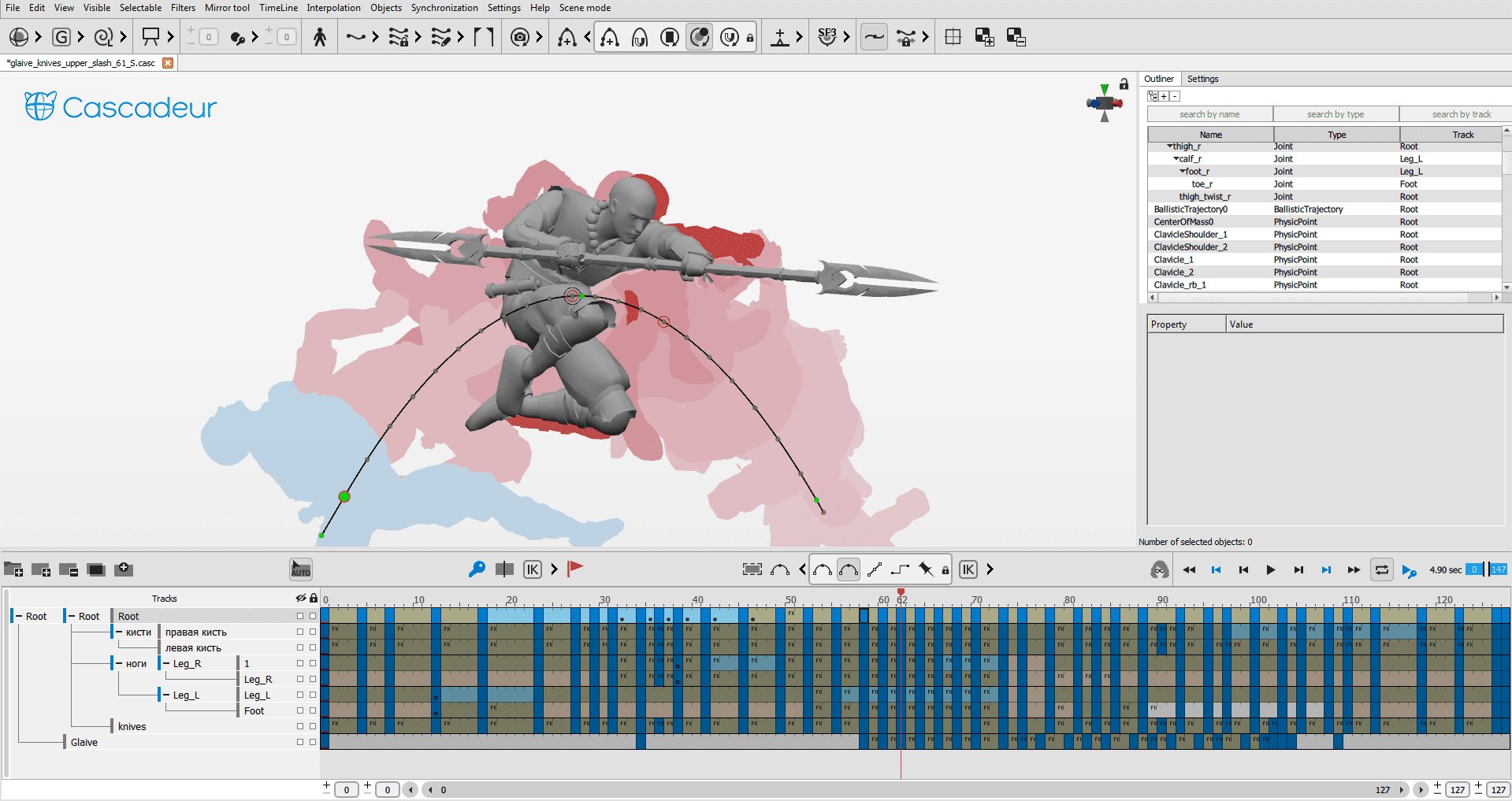

For those who don’t know the tool: Cascadeur by Nekki is a standalone 3D character animation DCC focused on physics-assisted keyframe animation, AutoPosing and AI Inbetweening. It supports FBX and USD pipelines into engines such as Unity and Unreal Engine, and began life as an internal game tool.

Alexander Grishanin is a software engineer and CTO of Cascadeur. With a background in applied mathematics and real-time systems, he focuses on combining classical physics simulation, machine learning, and intuitive animation workflows into a cohesive production tool.

He joined Nekki in 2014 as a game developer, contributing to character animation systems for titles such as the Shadow Fight series. Today, together with Cascadeur’s original creator, Eugene Dyabin, he shapes the software’s technical vision, focusing on lowering the barrier to entry for 3D animation while preserving precise artistic control.

Cascadeur Today

DP: What was the very first problem you wanted Cascadeur to solve?

Alexander Grishanin: To put this into context, it helps to know that Nekki originally started more than 20 years ago as a game development studio. Animation was always a core production topic for us, not a theoretical exercise. We were building real games under real-life conditions.

The original idea for Cascadeur came from one of Nekki’s co-founders, Eugene Dyabin. Eugene has always been interested in animation, but approached it from a very technical perspective. What bothered him early on was that established tools like Maya, while extremely sophisticated, were almost completely disconnected from physics. For someone with a technical background, that felt like a fundamental mismatch, because character animation, beyond its artistic dimension, is strongly influenced by physical principles such as balance, weight and momentum.

At that time, Nekki was producing fighting and parkour games such as Shadow Fight and Vector. To generate large volumes of believable motion for those games, Cascadeur began as an internal tool. Initially, it was a relatively simple animation editor that relied on physics-based calculations to help animators manage balance, weight, and momentum more intuitively, rather than constantly tweaking animation curves.

What began as a small internal project gradually evolved as we added the tools we needed in production. For a long time, the idea was simply to use Cascadeur inside our own studio. But as the tool matured and proved itself in real projects, it became clear that it could be useful far beyond Nekki, which eventually led to the decision to turn Cascadeur into a standalone product in 2019.

DP: And when we look at the current version of Cascadeur, has that problem been solved?

Alexander Grishanin: Largely, yes. But it was definitely not a single step; it was a long evolution. In its early days, Cascadeur was far from being a full-featured 3D animation tool. It used a very specific internal format and was tightly coupled to our own production setup.

Over time, especially with the rise of Unity and Unreal Engine, it became clear that Cascadeur had to integrate seamlessly into standard pipelines. A key part of “solving the problem” was turning it into a proper 3D editor: supporting skeletal animation as used in game engines and enabling reliable import and export via common formats such as FBX and USD. From a pipeline perspective, that part is largely solved today.

On the physics side, the journey was just as long. Initially, we worked with very explicit physics concepts: centre of mass, force vectors, and angular momentum visualisations. We even built detailed visual tools showing forces and reaction forces. Technically, that worked well, but we quickly realised that this approach had a very high entry barrier. While powerful, it required animators to think in highly abstract physical terms, which was intimidating for many artists.

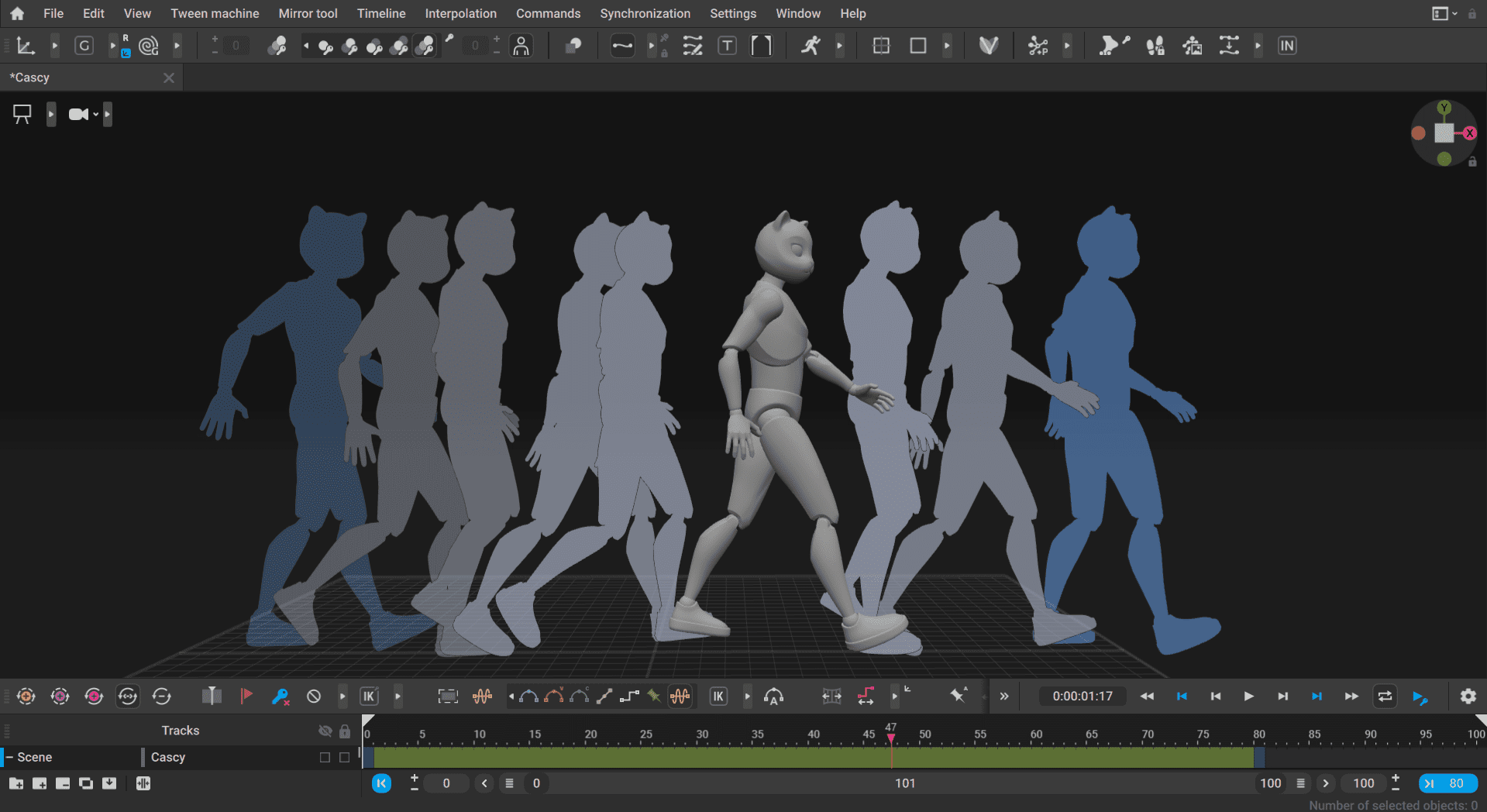

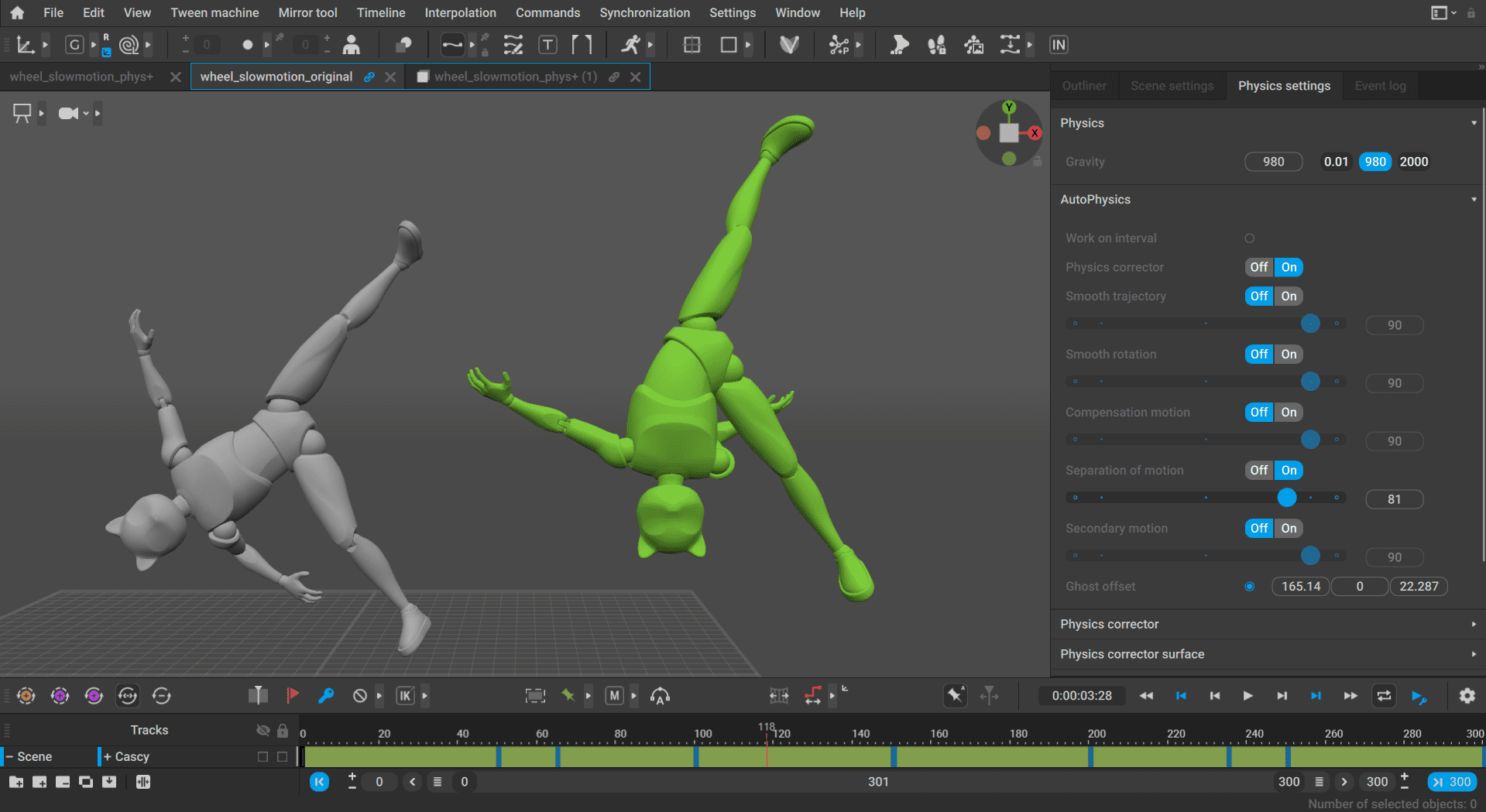

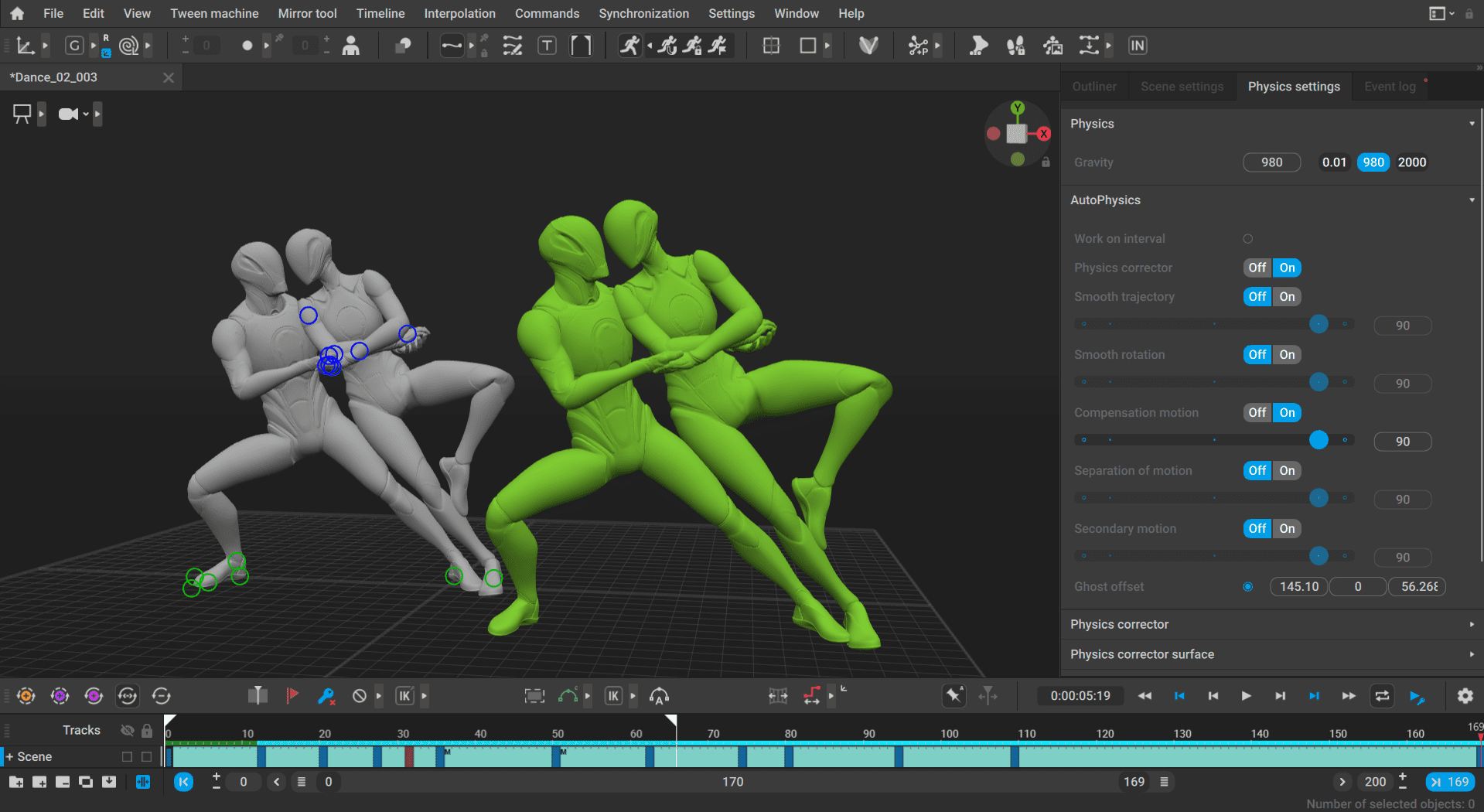

That insight led to a fundamental shift in approach. Instead of asking animators to understand physics, we focused on letting the software demonstrate it. This resulted in what we now call the Physical Assistant (aka “Green ghost”): essentially a physically accurate version of the character that moves alongside the animator’s animation and shows how the motion would look if it fully respected physical laws. It’s generated automatically and acts as a continuous reference rather than a diagnostic tool.

At this point, we’re quite happy with where we landed. There’s still room for refinement and more automation, but in terms of making physics-based reasoning practical and usable in everyday animation work, the original problem is largely solved.

DP: One of Cascadeur’s party tricks is simplifying any Biped Rig, and making it usable for animation – how does it decide what parts of an imported rig should be simplified?

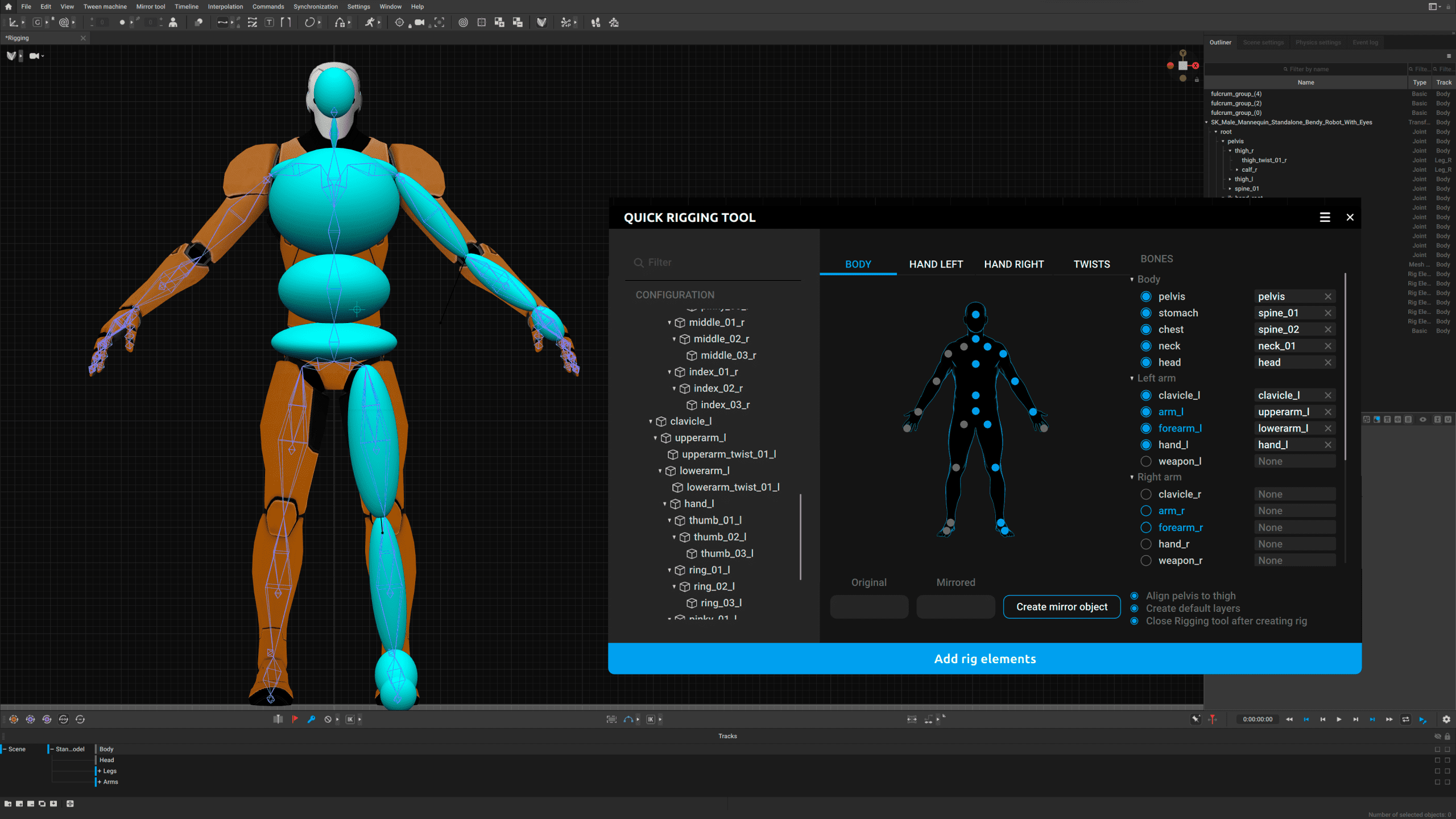

Alexander Grishanin: To be honest, there is no fully automatic “magic” that analyses an imported rig and decides which bones are essential and which are not. At least not at the moment. Our approach is actually quite pragmatic.

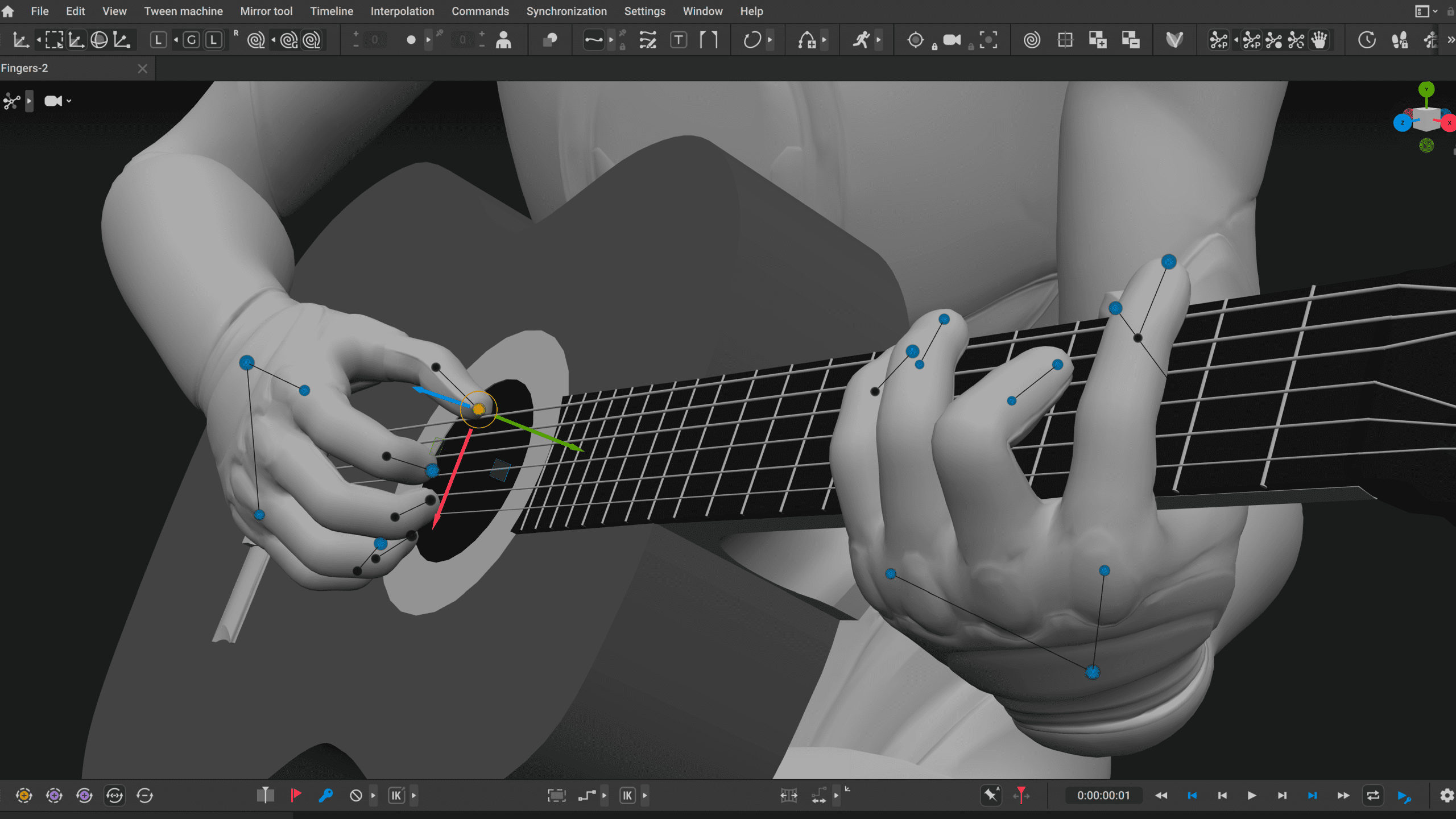

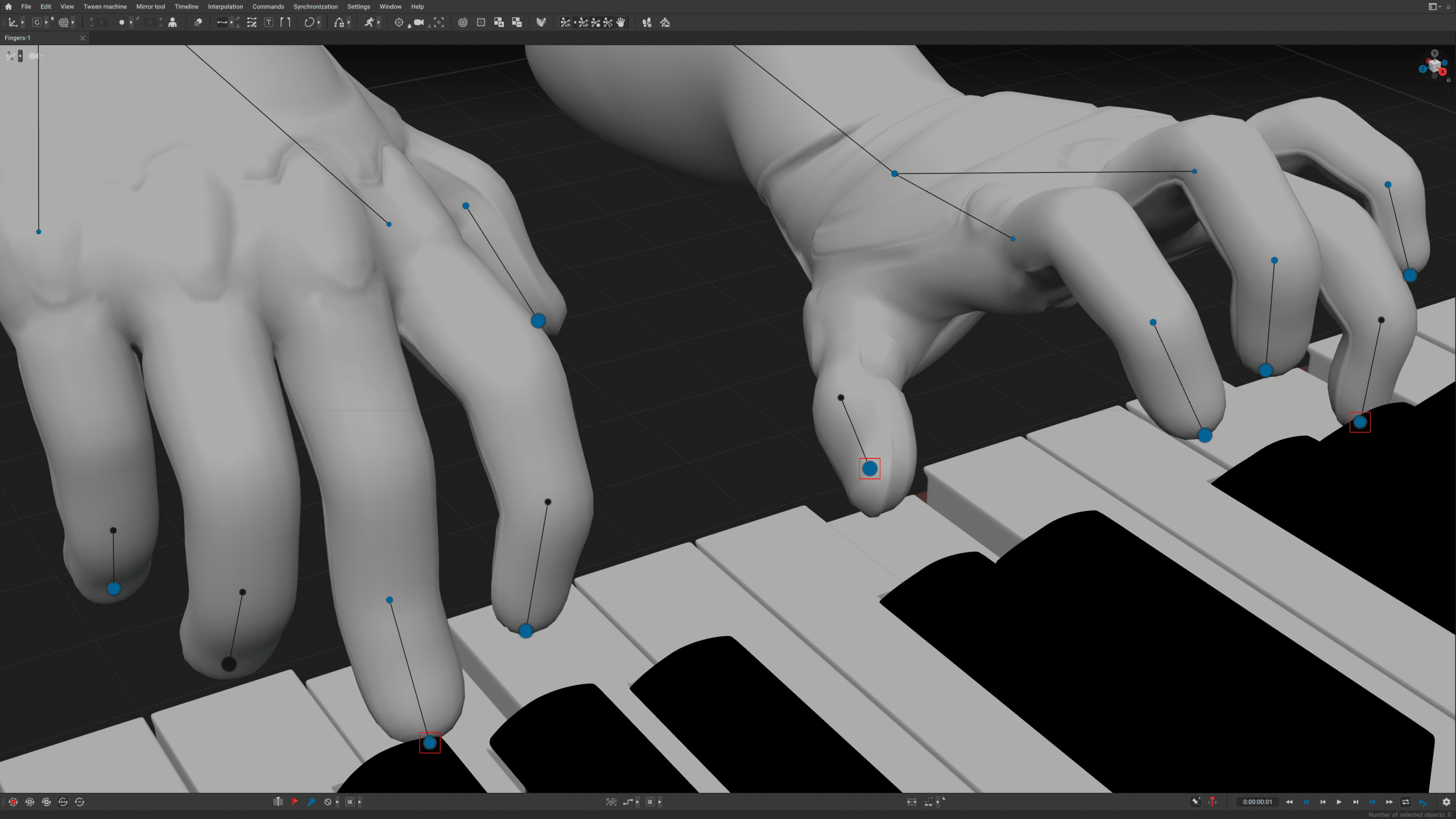

When you import a character into Cascadeur, it can indeed come with hundreds of bones: helper bones, controllers, deformation chains, design-driven additions – all the things riggers love. But for physically plausible biped animation, only a well-defined subset of those bones is actually relevant. Beyond areas like the spine, which often needs more detail, the structure required for body mechanics is fairly consistent.

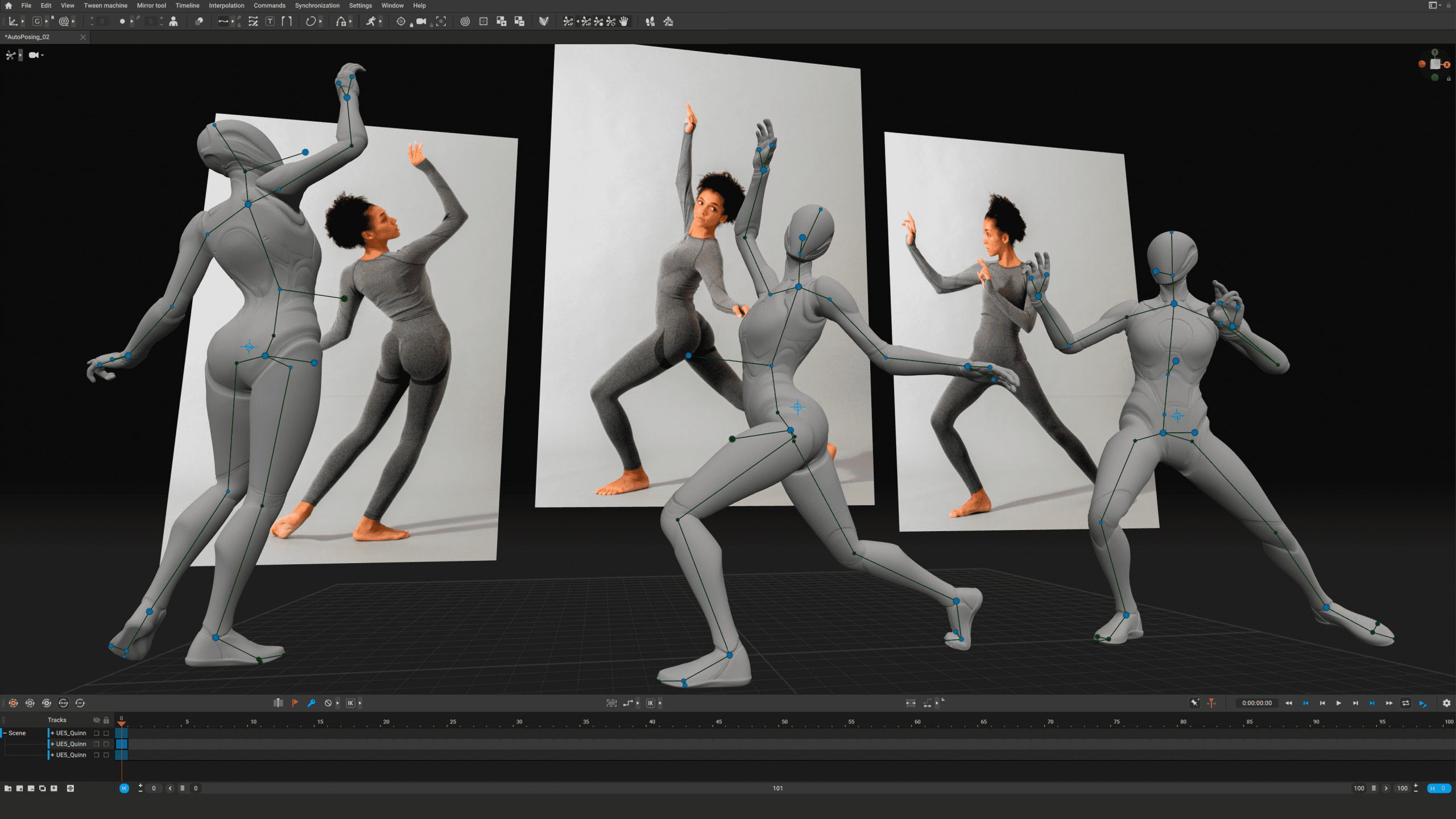

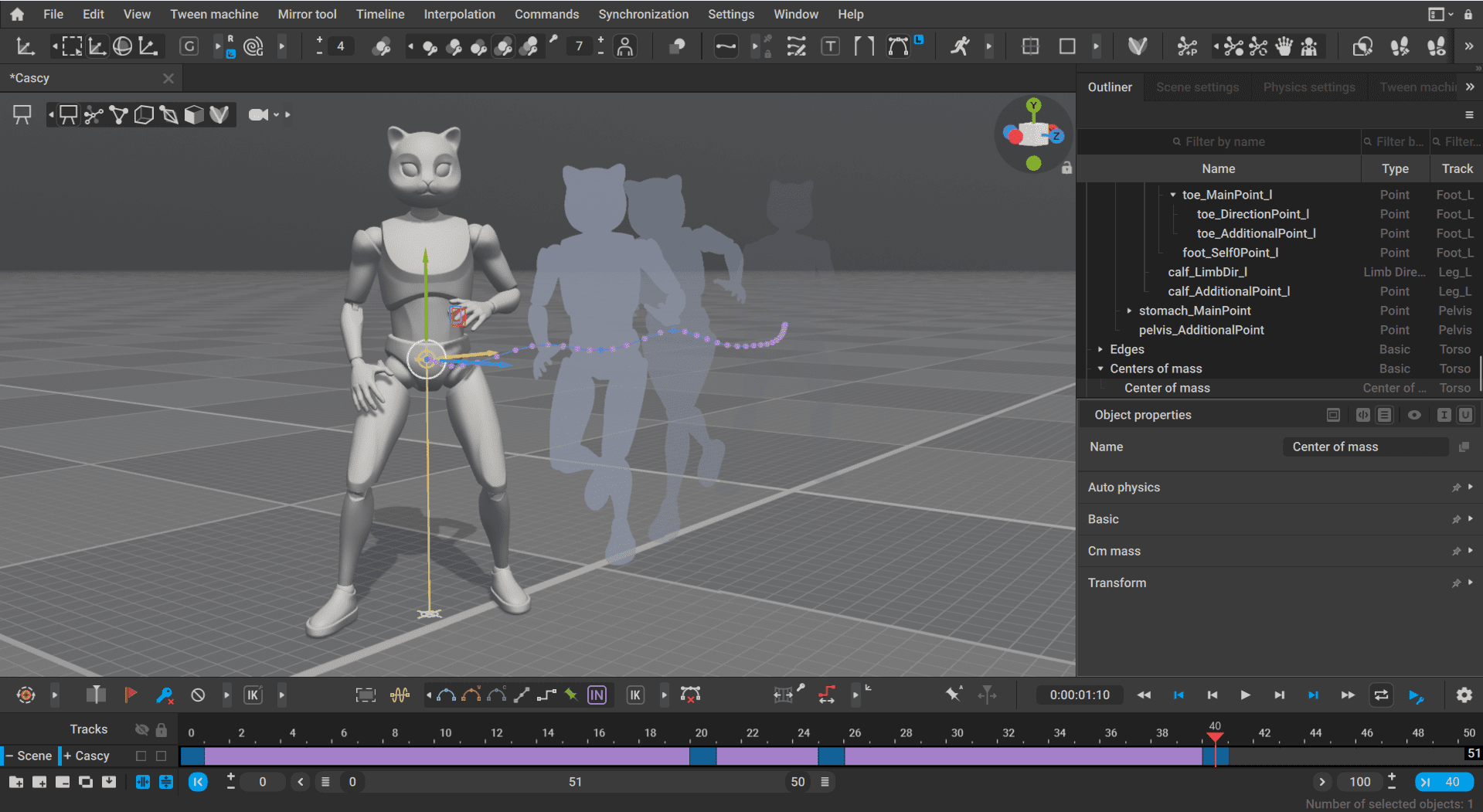

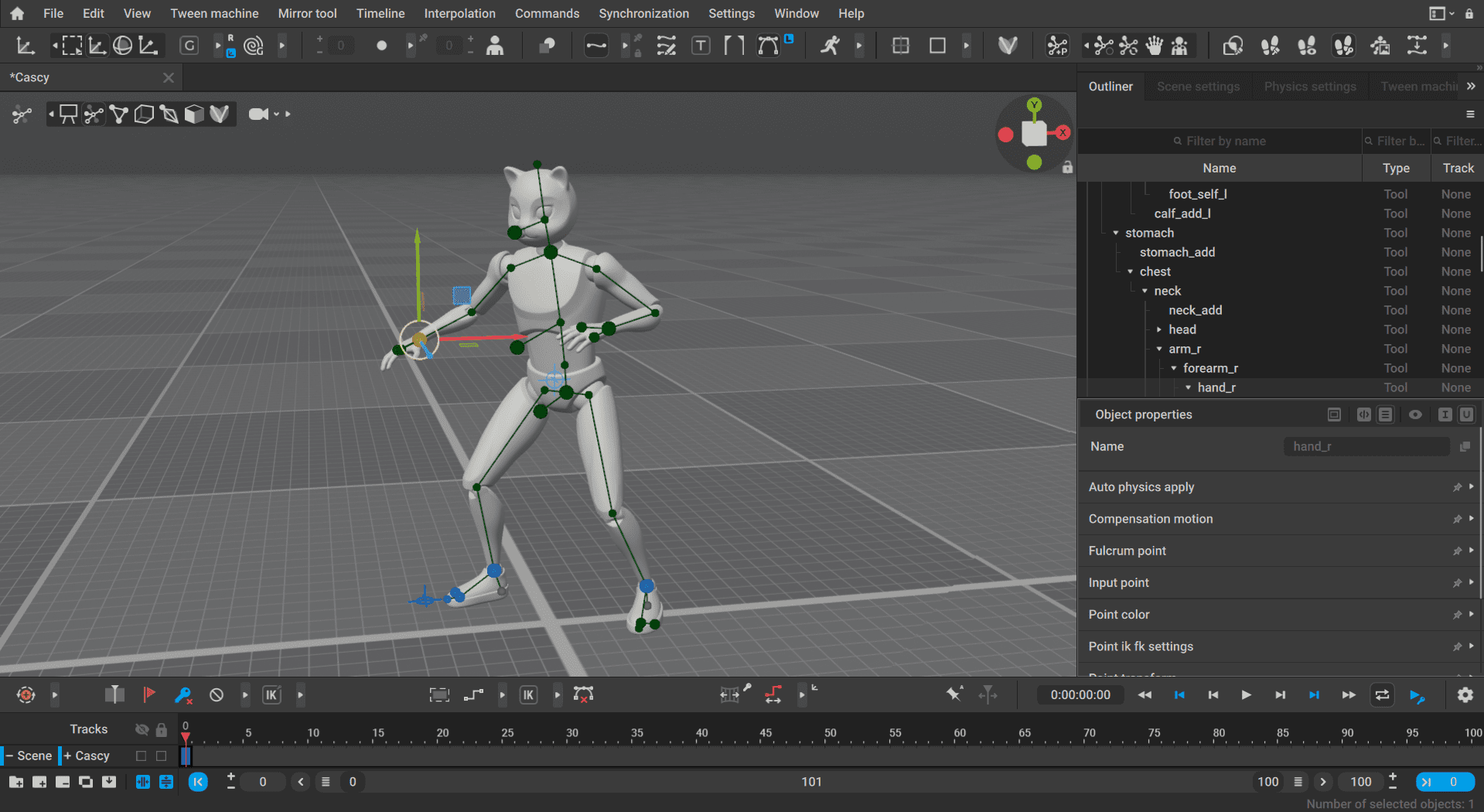

So what Cascadeur does is this: the user selects the bones that the internal Cascadeur rig should work with. This can be done either via presets, which we created for common rig types, or manually in the rigging tool. The generated Cascadeur rig then controls only this selected subset, while the rest of the bones follow through skinning or constraints.

This is very similar to how quick rigging or humanoid setup works in game engines. Once the system knows which bone represents the pelvis, the feet, the hands, and so on, a lot of things become much easier, including physics-based tools and retargeting between characters. So rather than automatically “simplifying” rigs in a black-box way, Cascadeur relies on a clear, explicit mapping. That gives us predictability and compatibility with existing production pipelines.
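As a rough illustration of the explicit mapping described above, the sketch below checks a preset against an imported skeleton and lists the bones left outside the animation rig. The role names, bone names and function names are hypothetical and do not reflect Cascadeur's actual preset format or API.

```python
# Hypothetical body-mechanics roles; a real preset would cover many more.
REQUIRED_ROLES = ("pelvis", "left_foot", "right_foot", "left_hand", "right_hand")


def build_mapping(skeleton_bones, preset):
    """Return a role -> bone name mapping, or raise if the preset does not fit."""
    bones = set(skeleton_bones)
    missing_roles = [role for role in REQUIRED_ROLES if role not in preset]
    if missing_roles:
        raise ValueError(f"preset lacks roles: {missing_roles}")
    missing_bones = [preset[role] for role in REQUIRED_ROLES if preset[role] not in bones]
    if missing_bones:
        raise ValueError(f"skeleton lacks bones: {missing_bones}")
    return {role: preset[role] for role in REQUIRED_ROLES}


def unmapped_bones(skeleton_bones, mapping):
    """Bones not driven by the mapped rig; they would follow via skinning or constraints."""
    used = set(mapping.values())
    return [bone for bone in skeleton_bones if bone not in used]


if __name__ == "__main__":
    bones = ["Hips", "LeftFoot", "RightFoot", "LeftHand", "RightHand", "Cape_01", "Twist_L"]
    preset = {
        "pelvis": "Hips",
        "left_foot": "LeftFoot",
        "right_foot": "RightFoot",
        "left_hand": "LeftHand",
        "right_hand": "RightHand",
    }
    mapping = build_mapping(bones, preset)
    print(mapping)
    print(unmapped_bones(bones, mapping))
```

The point of the sketch is that nothing is guessed: a rig either matches the preset or the user is told exactly which role or bone is missing.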

When is a joint really a joint?

DP: How does the system identify what counts as a joint?

Alexander Grishanin: This is handled in essentially the same way as rig simplification. If the system recognises a known rig, a preset can be loaded that defines the joints. Otherwise, joint identification is done manually during the rig setup.

Currently, Cascadeur does not automatically analyse an arbitrary hierarchy to determine what counts as a joint. We rely on explicit user input rather than automated heuristics. More automated approaches may be possible in the future, but currently the process is intentionally straightforward and transparent.

DP: How does Cascadeur separate intentional mocap motion from noise?

Alexander Grishanin: At the moment, Cascadeur does not have a specialized system that automatically distinguishes intentional mocap performance from noise. Mocap cleanup is not the core focus of the software. Cascadeur is primarily designed for creating animation from scratch, but we do provide tools that make working with mocap data practical.

Our main approach in this regard is the so-called Unbaking workflow. You can import a baked mocap animation and unbake it, which converts the motion into a reduced set of keyframes and interpolations while preserving the original movement as much as possible. This makes the animation much easier to edit and clean manually. Smaller jitters can be reduced through Unbaking precision settings, but larger artifacts will still be preserved and need manual correction.
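To make the idea of reducing a baked curve to fewer keys more concrete, here is a minimal sketch of tolerance-based key reduction on a single animation channel. It is a generic curve-simplification approach in the spirit of Ramer-Douglas-Peucker and assumes linear interpolation between kept keys; it is not Cascadeur's Unbaking algorithm, and `reduce_keys` and `tolerance` are hypothetical names.

```python
def reduce_keys(values, tolerance):
    """Return the sorted frame indices to keep as keys.

    `values` holds one sample per frame. A frame is kept only if linear
    interpolation between the surrounding kept keys would miss it by more
    than `tolerance`.
    """
    if len(values) <= 2:
        return list(range(len(values)))
    keep = {0, len(values) - 1}
    stack = [(0, len(values) - 1)]
    while stack:
        start, end = stack.pop()
        worst_frame, worst_err = None, tolerance
        for frame in range(start + 1, end):
            t = (frame - start) / (end - start)
            interpolated = values[start] + t * (values[end] - values[start])
            err = abs(values[frame] - interpolated)
            if err > worst_err:
                worst_frame, worst_err = frame, err
        if worst_frame is not None:
            # Split at the worst offender and check both halves again.
            keep.add(worst_frame)
            stack.append((start, worst_frame))
            stack.append((worst_frame, end))
    return sorted(keep)


if __name__ == "__main__":
    jittery = [0.0, 1.01, 2.0, 2.99, 4.0]   # small jitter around a straight line
    spiky = [0, 1, 2, 10, 4]                # one large artifact at frame 3
    print(reduce_keys(jittery, tolerance=0.05))
    print(reduce_keys(spiky, tolerance=0.05))
```

With a tolerance above the jitter amplitude, the jittery curve collapses to its two end keys, while the large spike survives as its own key. That mirrors the behaviour described above: small jitter disappears with the precision setting, and larger artifacts stay and need manual correction.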

In practice, this works well because most modern mocap systems already perform significant jitter reduction in their own software before the data reaches Cascadeur. On our side, we focus on making the resulting animation editable, readable, and controllable. Additional tools, such as automatic foot contact handling and upcoming collision-penetration fixes, help improve the overall cleanup process, but we don’t currently attempt to automatically distinguish artistic intent from noise.

Version 2025.3

DP: With that in mind, how challenging was the transition from bipeds to quadrupeds? And does AutoPosing behave differently for quadrupeds?

Alexander Grishanin: The challenging part was not so much the core technology, but standardization. The underlying rigging system in Cascadeur (the part that generates IK/FK rigs) is actually not tied to bipeds at all. You can rig pretty much anything with it, and our users have been doing that for years, including complex robots or non-humanoid characters.

What was new with quadrupeds in version 2025.3 was turning this flexibility into a standardized, production-ready workflow. That meant defining a clear IK/FK rig structure for quadrupeds and extending the rigging and “cooking” tools so users don’t have to build everything manually anymore.

AutoPosing was the second major piece, and here the behavior is indeed different. Quadrupeds have fundamentally different anatomy: more complex spines, different roles for front and hind legs, and different balance logic. So AutoPosing for quadrupeds is not a simple extension of the biped system – it’s a separate solution built on top of the same foundations, but with different assumptions.

From a system perspective, nothing really broke, but we had to add new logic and support layers to make quadrupeds feel natural to work with. We’ve already improved this significantly in recent updates, and there’s more coming in the next major release, 2026.1. Retargeting for quadrupeds is now supported as well.

One thing we don’t yet have for quadrupeds is in-between pose generation at the same level as for bipeds. That requires a much larger and more specific dataset, and we’re currently exploring ways to obtain that. The transition is well underway but not yet complete.

DP: How does AutoPosing decide which constraints to prioritise between keyframes?

Alexander Grishanin: There’s a small clarification needed here: AutoPosing itself does not operate between keyframes. It is a tool used on a keyframe to generate or adjust a pose.

Our Inbetweening system is a separate mechanism. It generates motion between keyframes based on the keyframe poses, their timing, and optionally the tangents defined at the start and end keys. At the moment, it does not evaluate or prioritise constraints in the traditional sense. The keyframes themselves, and their timing, are the constraints.

When connecting two animation segments, the system interpolates between the defined poses, respecting timing and tangents rather than resolving competing constraints. More advanced constraint-aware Inbetweening is something we may explore in the future, but it’s not how the system works today.

DP: How does retargeting interact with locked joints or pinned positions?

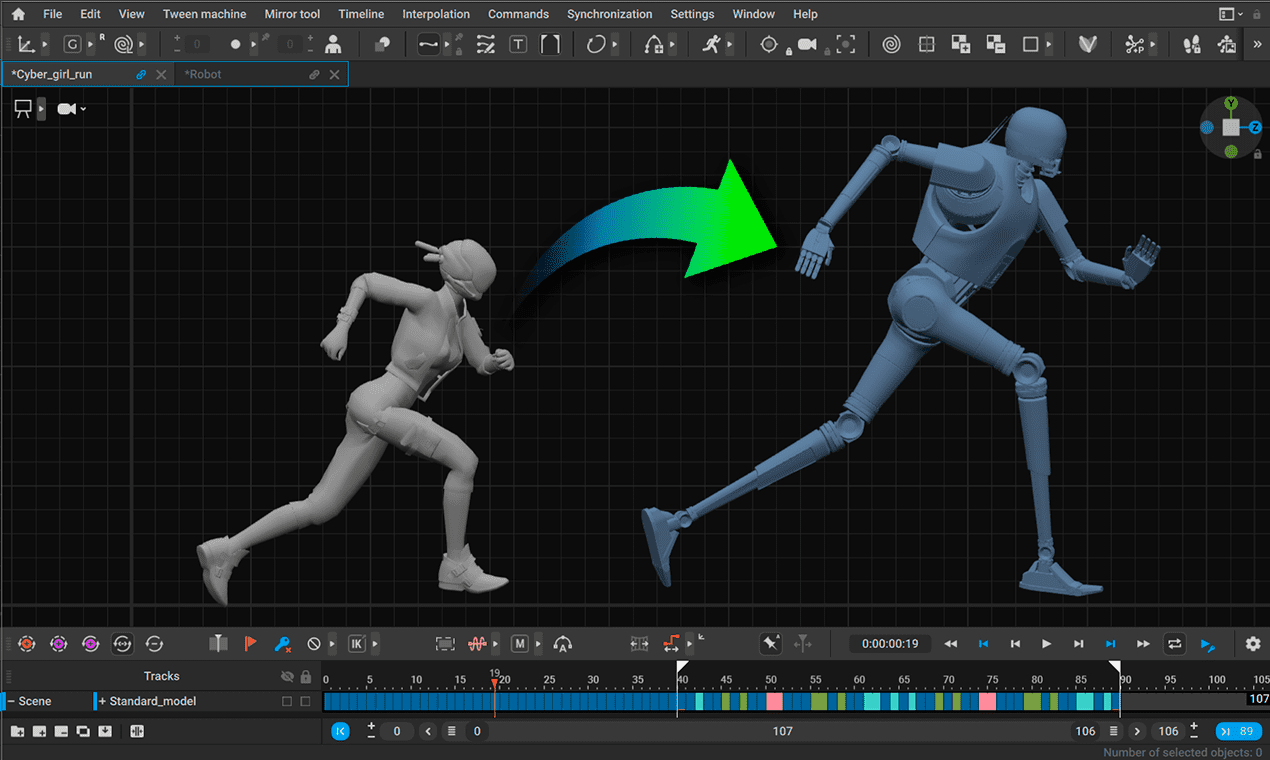

Alexander Grishanin: Retargeting in Cascadeur does not explicitly take joint locks or pinned positions into account. What we focus on instead are fulcrum points – points of contact that should retain their global position, such as feet on the ground. These are detected and preserved during retargeting.

Technically, retargeting operates at the joint or IK-rig level. Constraints and joint locks exist in Cascadeur, but the retargeting system itself does not account for them.

In practice, this keeps the behaviour predictable. For example, when weapons or props are involved, they are usually switched to FK and remain in place, rather than being dynamically resolved during retargeting. More advanced constraint-aware retargeting would require significantly more context and assumptions, and at the moment, we don’t try to make it work “magically.”
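The interview does not say how fulcrum points are detected, so the following is only a sketch of one common heuristic: a foot counts as planted when it is close to the ground and barely moving, and planted frames keep the global position where the contact began. The thresholds, the y-up convention, the metre units and both function names are assumptions.

```python
def detect_contacts(foot_positions, height_eps=0.02, move_eps=0.01):
    """Flag frames where the foot is near the ground (y up) and nearly still."""
    contacts = []
    for i, (x, y, z) in enumerate(foot_positions):
        px, py, pz = foot_positions[i - 1] if i > 0 else (x, y, z)
        moved = ((x - px) ** 2 + (y - py) ** 2 + (z - pz) ** 2) ** 0.5
        contacts.append(y <= height_eps and moved <= move_eps)
    return contacts


def pin_contacts(foot_positions, contacts):
    """Hold each contact run at the global position where it started."""
    pinned, anchor = [], None
    for position, in_contact in zip(foot_positions, contacts):
        if in_contact:
            if anchor is None:
                anchor = position
            pinned.append(anchor)
        else:
            anchor = None
            pinned.append(position)
    return pinned


if __name__ == "__main__":
    foot = [(0.0, 0.0, 0.0), (0.005, 0.0, 0.0), (0.3, 0.2, 0.0), (0.6, 0.0, 0.0)]
    contacts = detect_contacts(foot)
    print(contacts)
    print(pin_contacts(foot, contacts))
```

In a retargeting context, the pinned positions would serve as targets for the new character's feet, so a planted foot stays planted even when limb proportions change.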

DP: Cascadeur now understands complex collisions and “stuff in the scene”. How far can Cascadeur go with understanding objects and full environments?

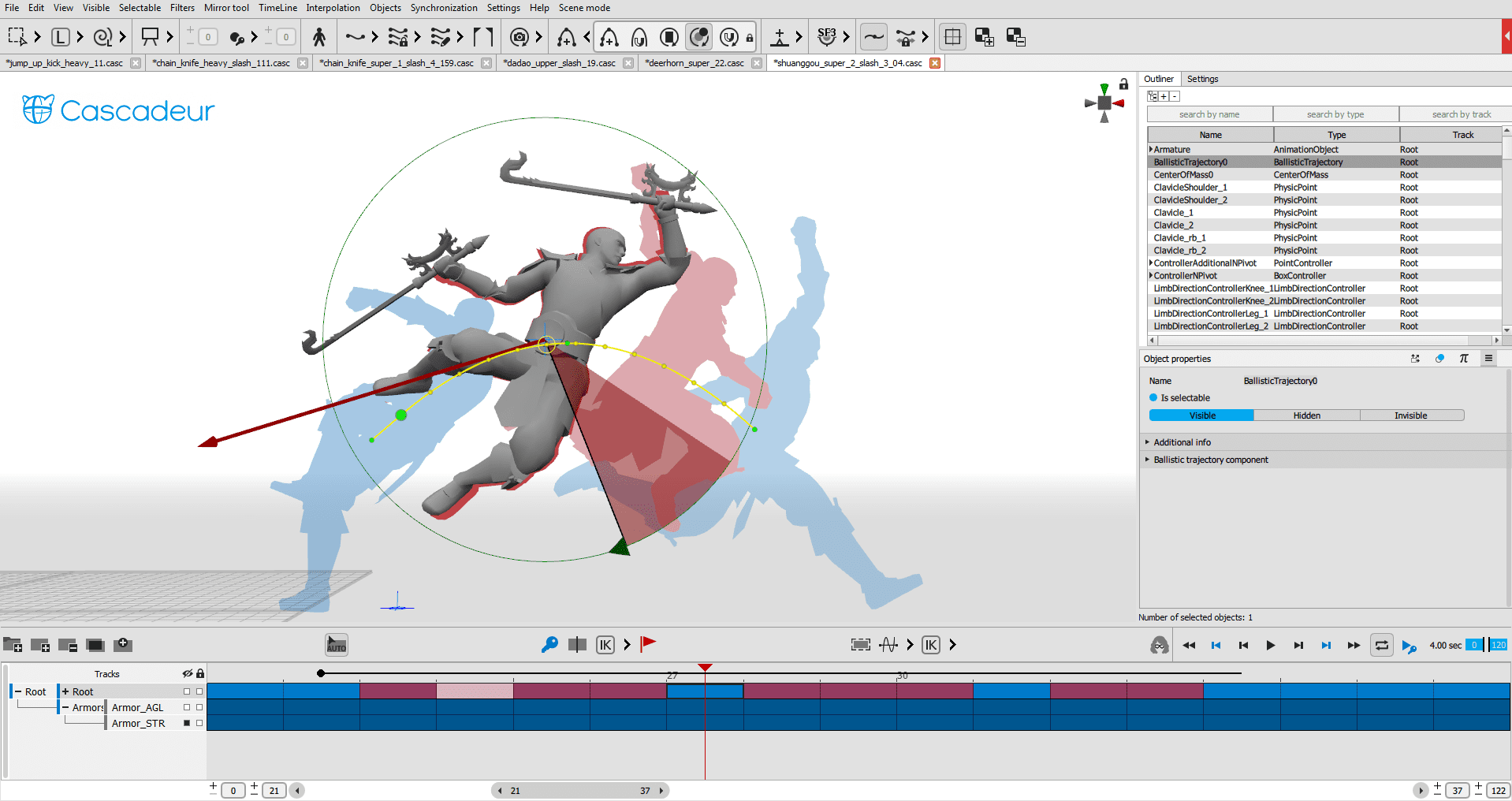

Alexander Grishanin: At the moment, Cascadeur handles environments in a fairly pragmatic way. The system automatically recognises the ground plane, and any additional objects in the scene can be treated as environment elements – as long as they have colliders assigned to them. Once that is set up, characters can interact with those objects, for example, by walking on them or making physical contact.

Most of our physics-based tools already account for these environment colliders. We support different types of collision behaviour, such as pinned or surface-based collisions, which allow for reasonably complex interactions with scene geometry.

The current limitations lie in higher-level automation. AutoPosing and Inbetweening do not yet consider environment colliders when generating poses or motion. We plan to improve this in the future, but it’s a complex problem, and we don’t want to overpromise on timelines.

DP: And within a scene, the movement is either interpolated from Keyframe to Keyframe, or inbetween-ed along a vector, curve or trajectory?

Alexander Grishanin: In Cascadeur, animation is fundamentally built around keyframes. Between those keyframes, we support several types of interpolation. Most of them are fairly standard, such as linear and Bézier-based interpolation, and quite similar to what you find in other 3D animation tools.

One notable difference is on the rig side: we rely heavily on spherical interpolation. This gives us greater control over rotation continuity and tangents while avoiding issues such as gimbal lock. Compared to quaternion interpolation, it offers more predictable behaviour when animators want to art-direct motion using curves and timing.
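The interview does not specify which formulation of spherical interpolation Cascadeur uses. As a generic illustration only, the sketch below interpolates between two unit direction vectors along the arc of the sphere and compares the result with a plain linear blend, which cuts through the sphere and shortens the vector. The function names are hypothetical.

```python
import math


def length(v):
    return math.sqrt(sum(x * x for x in v))


def lerp(a, b, t):
    """Component-wise linear blend; does not preserve unit length."""
    return tuple((1.0 - t) * x + t * y for x, y in zip(a, b))


def slerp(a, b, t):
    """Spherical linear interpolation between two unit vectors."""
    dot = max(-1.0, min(1.0, sum(x * y for x, y in zip(a, b))))
    angle = math.acos(dot)
    if angle < 1e-8:
        return tuple(a)  # directions coincide, nothing to interpolate
    if math.pi - angle < 1e-8:
        raise ValueError("antipodal inputs have no unique shortest arc")
    s = math.sin(angle)
    wa = math.sin((1.0 - t) * angle) / s
    wb = math.sin(t * angle) / s
    return tuple(wa * x + wb * y for x, y in zip(a, b))


if __name__ == "__main__":
    x_axis, y_axis = (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)
    print(length(slerp(x_axis, y_axis, 0.5)))  # stays on the unit sphere
    print(length(lerp(x_axis, y_axis, 0.5)))   # shorter than 1
```

The spherical version also moves at constant angular speed for evenly spaced `t`, which is one reason this family of methods is popular for rotations.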

Inbetweening is a separate mechanism. It uses AI to generate motion between keyframes, but it does not currently follow curves, trajectories, or environmental context. Its constraints are the poses, timing, and optionally the tangents defined at the surrounding keyframes. Artistic control still comes primarily from how those keyframes are set up.

We are exploring more advanced systems for the future, for example root-motion-based generation that could follow a trajectory or directional intent. But that would not be traditional interpolation. It would be more of a generation step, where you define intent and let the system propose motion.

So even as locomotion and motion flows become more complex, the core idea remains the same: keyframes define intent, interpolation and Inbetweening assist – but art direction always stays with the animator.

DP: And how does Cascadeur switch between interpolation and Inbetweening?

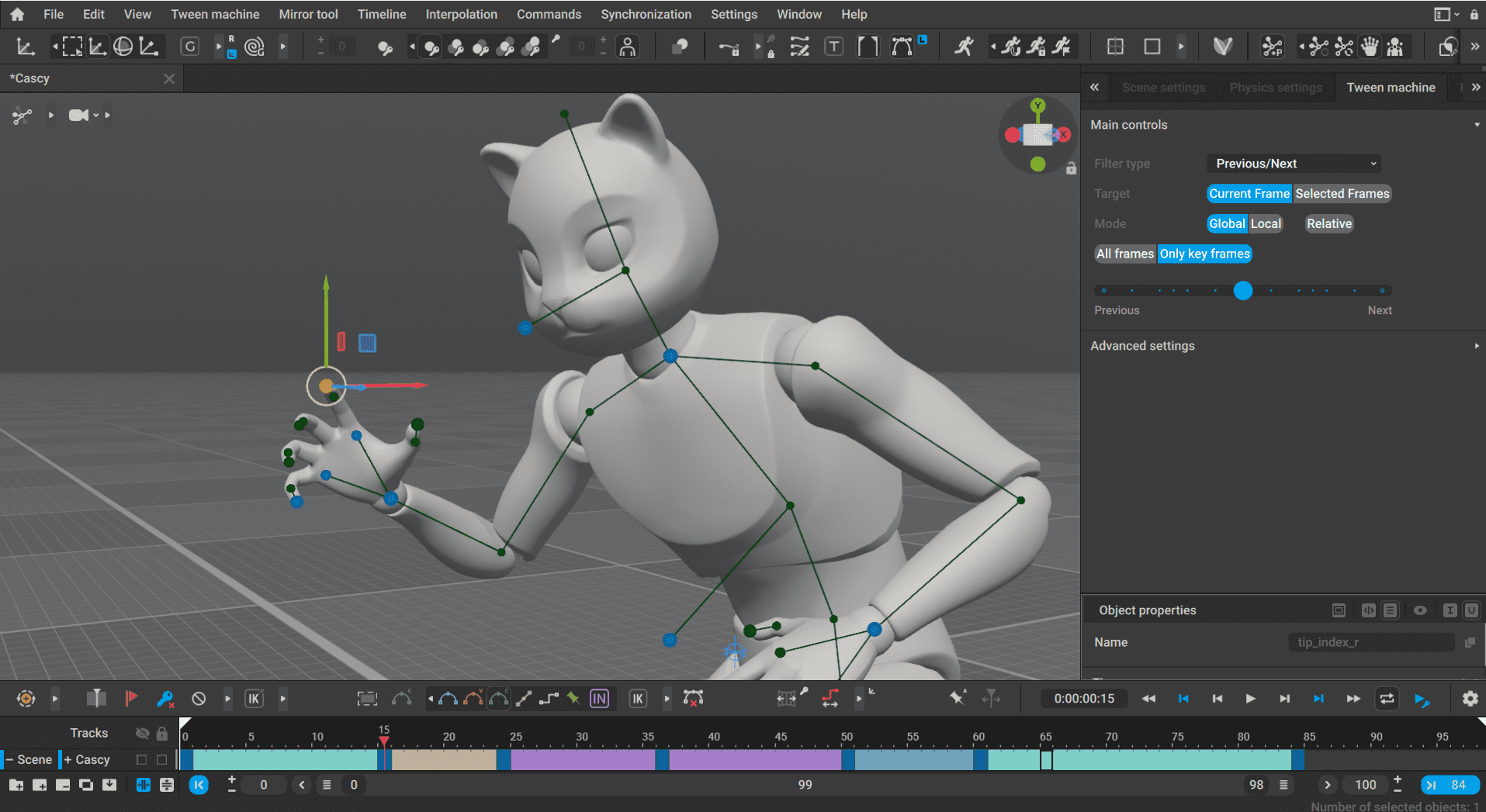

Alexander Grishanin: It’s entirely a choice made by the animator. There is no automatic switching. In earlier Cascadeur versions, Inbetweening was a separate operation: you selected a time interval, triggered the tool, and it generated a baked animation for that section.

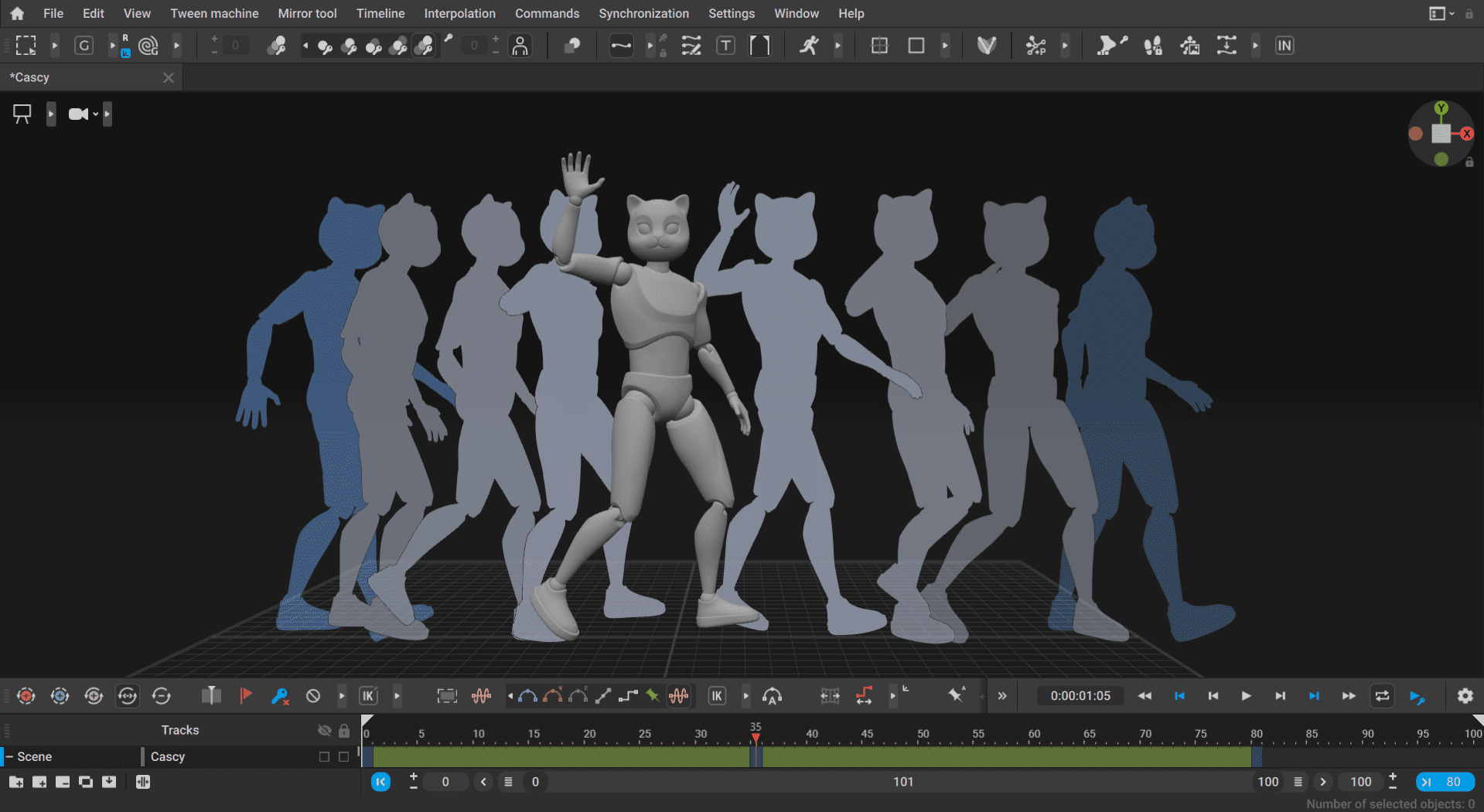

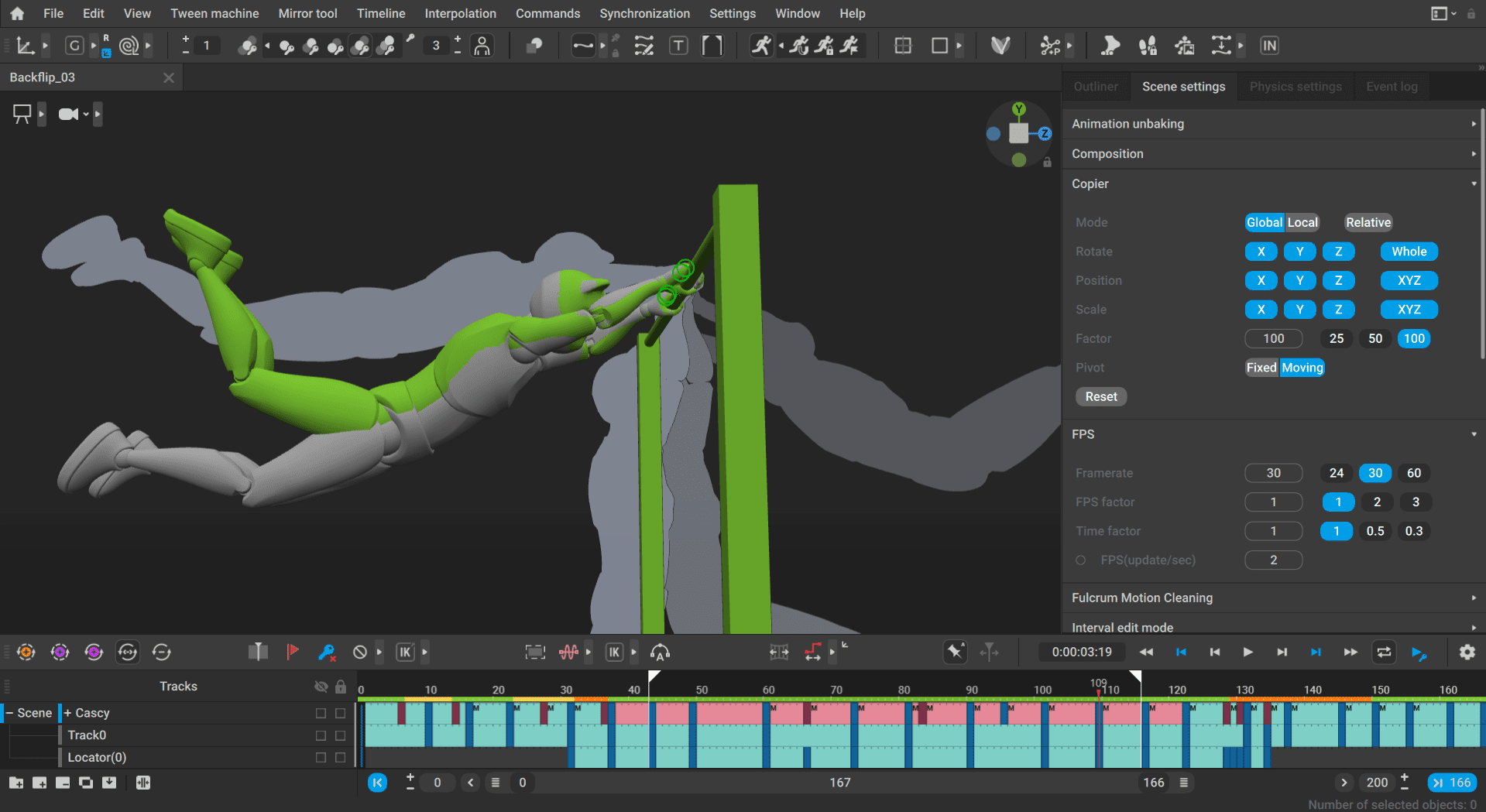

In version 2025.3, this changed. Inbetweening is now implemented as a type of interpolation. That means the animator explicitly chooses, per segment, whether motion between keyframes should use a traditional interpolation method or Inbetweening. From a workflow perspective, this makes the system much more coherent and predictable.

One of the main challenges with this change was ensuring smooth transitions between different interpolation types. We added mechanisms to blend and smooth those transitions, so switching between standard interpolation and Inbetweening doesn’t introduce visual discontinuities. So the logic is simple: the animator decides what works best for a given situation, and the system focuses on making that choice behave consistently and smoothly.

DP: How about Ragdolls?

Alexander Grishanin: Yes. But it’s important to clarify how physics and ragdoll simulation work in Cascadeur. Ragdoll is a type of physical simulation that operates on the resulting animation, not on the process that created it.

From the physics system’s point of view, it doesn’t matter whether an animation was created using standard interpolation, Inbetweening, imported mocap, or even a fully baked clip from a library. All interpolation and Inbetweening are evaluated first. Physics, including ragdoll, then uses the final animation frames as input and simulates from that point.

Once you activate ragdoll, the simulation will typically override the animation quite significantly, for example when a character starts to fall. There is no tight coupling among interpolation, Inbetweening, and ragdoll logic. Physics simply “consumes” the finished motion and reacts to it.

In that sense, both interpolation and Inbetweening are fully compatible with ragdoll features – because physics doesn’t care how the motion was authored, only what the motion is at the moment the simulation starts.
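The ordering described above, with animation evaluated first and physics consuming the finished frames, can be shown with a toy one-dimensional sketch. It takes a list of already evaluated heights, reads position and velocity at the frame where the simulation starts, and then integrates gravity with a ground clamp. This is a deliberately simplified stand-in for a ragdoll, not Cascadeur's solver; `ragdoll_from`, the frame rate and the units are assumptions.

```python
def ragdoll_from(heights, start, dt=1.0 / 30.0, gravity=-9.81):
    """Keep authored frames up to `start`, then simulate a free fall.

    `heights` is the final evaluated animation (metres, one value per frame).
    How those frames were authored is irrelevant; only the state at `start`
    matters.
    """
    if not 0 < start < len(heights):
        raise ValueError("start must be a frame index with a predecessor")
    y = heights[start]
    velocity = (heights[start] - heights[start - 1]) / dt  # finite difference
    result = list(heights[: start + 1])
    for _ in range(start + 1, len(heights)):
        velocity += gravity * dt
        y = max(0.0, y + velocity * dt)  # clamp at the ground plane
        if y == 0.0:
            velocity = 0.0
        result.append(y)
    return result


if __name__ == "__main__":
    authored = [1.0, 1.0, 1.0, 1.0, 1.0]  # could come from keys, mocap or a library clip
    print(ragdoll_from(authored, start=2))
```

Two clips that produce identical frames, regardless of whether they came from interpolation, Inbetweening or mocap, give identical simulation results in this sketch, which is the decoupling the answer describes.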

DP: When we look at “AI-assisted Inbetweening”, wouldn’t the next step be to get additional data from a scene?

Alexander Grishanin: That kind of scene-level, event-driven Inbetweening is far beyond what we are aiming for in the near future. Conceptually, it is interesting to use full-scene context, simulations, particles, or semantic events to influence animation behaviour, but this quickly becomes a very different class of system.

Right now, our focus is much more grounded. We aim to improve the quality, robustness, and controllability of AI-assisted Inbetweening, based on animation data and clearly defined inputs such as poses, timing, and physical plausibility. Adding higher-level scene understanding would significantly increase complexity and assumptions.

Ideas like text-driven reactions to explosions or environmental cues are intriguing, but they are not where Cascadeur is heading in the foreseeable future. Our priority is to make AI a reliable assistant within a traditional animation workflow, not a system that attempts to interpret entire scenes or narratives.

DP: With more interpolation tools added, how do you keep the timeline clean?

Alexander Grishanin: There isn’t a strict formal rule behind this. In practice, we test things ourselves and carefully determine what should appear directly on the timeline and what can be placed in menus or settings. The goal is to keep the timeline readable, even as more interpolation options are added.

Unbaking is a good example of where this balance is not perfect. It can introduce quite a lot of keys and visual noise on the timeline. We’re aware of this, though in practice it hasn’t become a major usability issue yet.

In the long term, we would like to hide more of this complexity. Ideally, animators wouldn’t have to think in terms of linear versus Bézier versus IK or Inbetweening at all. You would define intent, and the system would choose the appropriate interpolation under the hood. That said, this is more of a direction or a vision than a concrete feature on the roadmap. By the way, if you want to know what we are working on right now, our Roadmap is public, and you can have a look here: https://trello.com/b/oNlIizJh/cascadeur-roadmap

DP: How big can an unbaked animation get before performance drops?

Alexander Grishanin: In practice, extremely long unbaked animations are more of a theoretical stress test than a real production scenario. We have users working with animations of up to 10,000 frames, and that is definitely possible. In version 2025.3, we added another round of optimisations specifically to improve performance with long timelines.

That said, usability becomes the limiting factor much earlier than raw performance. Working meaningfully with 10,000 frames is already very difficult, simply because it becomes hard to understand what is happening where. Most animation work happens in much smaller ranges, typically a few hundred frames at a time.

Cascadeur is optimised around that typical use case. When you focus on a limited time interval, for example, 300 to 500 frames, the system only evaluates and updates what is needed for that interval. The total length of the animation outside that range has very little impact on interactivity. That’s exactly what we improved further in 2025.3.

So yes, unbaked animations with 10,000 frames are feasible today. Beyond that, you’re likely to hit practical and conceptual limits before you hit hard technical ones. A 200,000-frame animation, for example, is far outside what we would consider a realistic working scenario.

AI in Animation

DP: Of course, in 2026 Cascadeur has AI tools. What training data did the new machine learning tools rely on?

Alexander Grishanin: Our machine learning tools are primarily trained on data we own and understand very well. A large part of it comes from animation data created over many years for Nekki’s own games, especially the Shadow Fight action game series. That includes a substantial amount of hand-crafted animation and poses produced by Nekki’s animators.

In addition, we generate our own motion-capture data. We use two Xsens motion-capture suits internally and record motion data exclusively for training and validation. This allows us to create clean, well-defined datasets that align with the motion Cascadeur is designed to handle. So the foundation is a combination of long-term in-house animation data and dedicated mocap recordings, rather than external datasets.

DP: Do the new AI features change the minimum system requirements?

Alexander Grishanin: No, the new AI features do not change the minimum system requirements. At the moment, our AI-related computations run entirely on the CPU and are comparable in complexity to existing systems such as physics tools or interpolation.

We don’t rely on the GPU for AI processing, and all calculations are performed locally. Nothing is sent to the cloud. From the user’s perspective, AI in Cascadeur behaves like any other internal tool in terms of performance and system requirements.

DP: Wait, you said that all computation is done locally. None of it relies on the cloud?

Alexander Grishanin: Yes, Cascadeur runs completely on the client system. None of the AI features rely on the cloud. The neural networks we use are intentionally very lean. AutoPosing is quite small, and Inbetweening is a bit larger, but still nowhere near the scale of large models that require massive amounts of memory or external infrastructure. Because of that, all computation can happen locally without any special hardware or online connection.

DP: How do you balance classical physics with machine learning inside Cascadeur?

Alexander Grishanin: At the moment, classical physics and machine learning in Cascadeur are clearly separated and complement each other rather than competing. We don’t use AI inside the physics tools themselves. Physics is based on classical methods, mainly nonlinear equation optimisation, and operates on top of the animation that already exists in the scene.

Machine learning comes into play earlier. AI is used to help generate or refine animation, especially in cases where creating complex motion manually would be difficult or time-consuming. Physics tools then take that result and propose a physically correct version of the motion, for example, by improving balance or contact behaviour.

Over time, we realised that physics alone, while extremely valuable, cannot solve everything. An animation can be physically correct and still look wrong from an animation or artistic perspective. Contact situations are a good example: even with correct fulcrum points, physics does not necessarily enforce all the constraints needed to make motion look convincing.

This is where AI helps. It can generate complex, plausible motion that would be difficult to achieve solely through physics-based constraints. At the same time, AI is not particularly good at physics on its own. In practice, they work very well together: AI supports motion generation and plausibility, while physics supports correctness and grounding.

DP: How did you train the Inbetweening model, and what can artists tweak?

Alexander Grishanin: Our Inbetweening model is trained on our own animation data, basically to learn how it should behave in real production scenarios. We’re not trying to teach it some abstract idea of motion, but very concrete situations: how to move from one pose to another in a way that feels plausible for character animation.

For artists, the main controls are still very familiar ones. Poses and timing matter the most, by far. If you change the key poses or the spacing between them, the result changes immediately. That’s where most of the artistic control lies.

In Cascadeur there is also the option to add simple labels, for example saying “this should be a walk” rather than a run. That helps in cases where the same poses can be interpreted differently. Right now, those labels have less influence than poses and timing, but we’re actively looking at making them more meaningful.

Even though AI is involved, the idea is that animators remain in charge. You don’t tweak neural network parameters. You animate, and the AI adapts to what you’re telling it through poses and timing.

DP: Consider the plethora of “animation libraries”, which, like the stock photo libraries of ancient times (or 2019), offer a giant, very specific set of premade animations. Are they still necessary when Inbetweening is available in Cascadeur?

Alexander Grishanin: Animation libraries are still very relevant. Inbetweening and Unbaking solve different problems, and they don’t make libraries obsolete. At least not today.

Our Unbaking works very well with ready-made animations, including clips from animation libraries. You can import them, unbake them, and then edit or adapt them much more easily. Inbetweening, on the other hand, creates new motion between poses. It works best for relatively simple or well-defined movements. When animations become very complex, it’s often hard to describe them purely through poses, and in those cases libraries are still the more practical option.

Right now, Inbetweening doesn’t replace animation libraries; it complements them. That said, it does point in an interesting direction. In the future, if you combine Inbetweening with tools such as text-to-motion or more advanced motion generation, libraries could become less central. At that point, searching a library by keywords might be replaced by simply describing the motion you want and letting the system generate it.

But we’re not there yet. Today, animation libraries, Unbaking, and Inbetweening all have their place. Inbetweening is mainly about assembling and refining motion, not about replacing large collections of finished animations.

The future

DP: With the improved viewport, do you see a future where artists animate directly in final-quality scenes?

Alexander Grishanin: Yes, definitely. That’s very much the direction we’re moving in. The improved viewport is an important step toward allowing animators to work much closer to final-quality visuals while they animate.

That’s also why we invested in a more advanced renderer. With Cascadeur 2026.1 we will completely shift to Filament, a physically based rendering system originally developed by Google. The idea is not to replace final offline rendering, but to give animators a visual result that is much closer to what they’ll see at the end, while they’re still working on motion.

Looking further ahead, there are a couple of possible paths. One is tighter integration with high-end rendering systems or real-time engines. Another is using AI-based upscaling or enhancement, where you work with a simpler representation and let the system automatically improve visual quality. It’s hard to predict exactly which direction will dominate.

What we are already actively working on, though, is better live connectivity. For example, we’re improving live links between Cascadeur and other software, including Unreal Engine. That’s a concrete step toward workflows where animation happens in Cascadeur and artists immediately see the result in a final or near-final environment. So yes, animating directly in scenes that feel close to final quality is absolutely something we believe in – whether that happens inside Cascadeur itself or through tight integration with other tools.

DP: What does an ideal animation workflow look like to you?

Alexander Grishanin: Looking ahead, I think the biggest room for improvement comes from how input works. In an ideal world, an animator can use many different types of input: posing with controllers, of course, but also video references, maybe voice input, maybe text prompts. The key point is that all of this input produces a single, fully editable output. Nothing should become a black box.

In my “dream pipeline,” you can bring in any reference you want, create motion quickly with minimal input, and then dive into any part of the animation and refine it manually if needed. Whether that input comes from controllers, video, or text is secondary. What matters is that everything remains controllable.

DP: How do you imagine animation pipelines in 2030 or 2040? And what do you imagine Cascadeur will look like in the year 2050?

Alexander Grishanin: If we look ten, twenty or even twenty-five years ahead, I think the most honest answer is that a lot is still open. Not everything depends on animation tools like Cascadeur. We’re in a phase right now where content creation is changing very quickly, and it’s hard to draw clean lines that far into the future.

What’s very clear already is that 2D image and video generation is moving extremely fast with AI. One reason is that it’s much easier to collect data on it. 3D is lagging behind at the moment, but it’s definitely catching up. We’re seeing more tools that can automatically generate meshes, skeletons, and even basic animation. But as soon as you want to actually use those assets in a meaningful way, you still need a proper animation tool to refine and control motion. That’s where Cascadeur fits in.

On a more personal note, I believe 3D will become increasingly important over the long term. Even many 2D AI systems seem to rely on some internal 3D understanding of the world. Making that structure explicit, with meshes, joints and motion, is simply a more consistent way to build believable worlds. AI can help a lot here by lowering the barrier to entry.

If we push this idea further, I hope we end up in a world where creating 3D worlds and animated stories becomes as easy as writing text or drawing images today. Right now, far more people can paint, write or make music than create something in 3D, simply because 3D is still very complex. If that complexity goes away, content creation could change dramatically.

That would also affect who creates stories. Instead of a few large studios targeting large audiences, we might see many more creators building highly specific worlds for smaller communities. Animation and 3D would no longer be just about large productions, but also about personal expression, much like photography or music today.

At the same time, I can imagine tools for generating worlds, environments, or even basic scene setups becoming more integrated into animation workflows. Whether those systems live inside Cascadeur or connect to it seamlessly is an open question. What matters is that, once a world or scene is generated, you can step in and precisely shape how characters move and behave inside it.