For those who don’t know the format: glTF sits between DCC export and runtime playback, and these web tools help you sanity-check assets and rendering across engines before shots get spicy.

Two sites, one mission: fewer surprises

The glTF Sample Assets site and the glTF Render Fidelity Comparison site just got meaningful upgrades. They target the same everyday pain: you export a model, it looks right in one viewer, and then it looks slightly off in the one place where it matters. The refreshed Sample Assets experience focuses on browsing, filtering, and sharing reference models that exercise specific features of glTF. The updated Render Fidelity Comparison experience focuses on visual baselines, with side-by-side checks that make renderer differences harder to hand-wave and easier to track down. If you build tools, ship engines, validate exporters, or wrangle assets for realtime and VFX, these sites are designed to be the boring, reliable kind of helpful.

Sample Assets gets a real front door

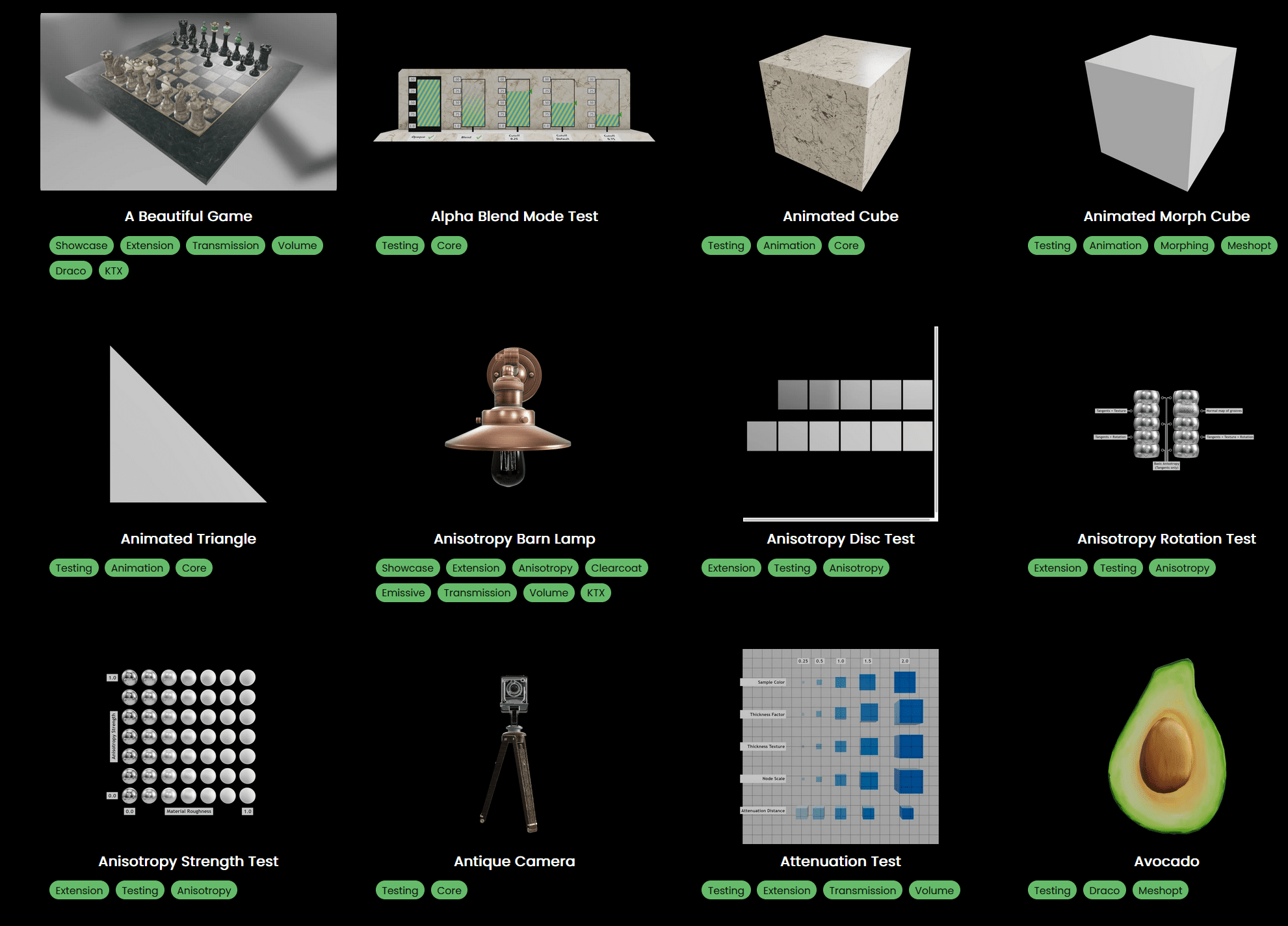

The new landing experience for glTF Sample Assets presents the repository as a proper website. The intent is simple: make it easier to browse and find models by what they demonstrate. Models appear with thumbnails, and animated assets include a small animation preview. The site supports light and dark themes and aims for fast loading and fluid navigation. Filtering and search are first class, with tags that map to features, functionality, and extension coverage.

When you open a model, you land on a dedicated model page with an interactive preview, metadata, and download links. The preview uses the glTF Sample Renderer, which is built on WebGL and targets correct support for official KHR extensions. A context menu in the preview provides presentation options: debug visualization modes for material properties, variant selection when multiple variants exist, and wireframe presentation when the WEBGL_polygon_mode extension is available.

The model page also links out to external tools and shows a recommendation-style list of related assets, which is a surprisingly effective way to bounce between test cases when you are chasing a specific shading edge case across multiple files.

One practical detail matters here: each model includes a screenshot, description, license information, and a live preview. That combination turns the site into a quick reference shelf rather than a scavenger hunt through folders.

Render Fidelity Comparison leans into side by side truth

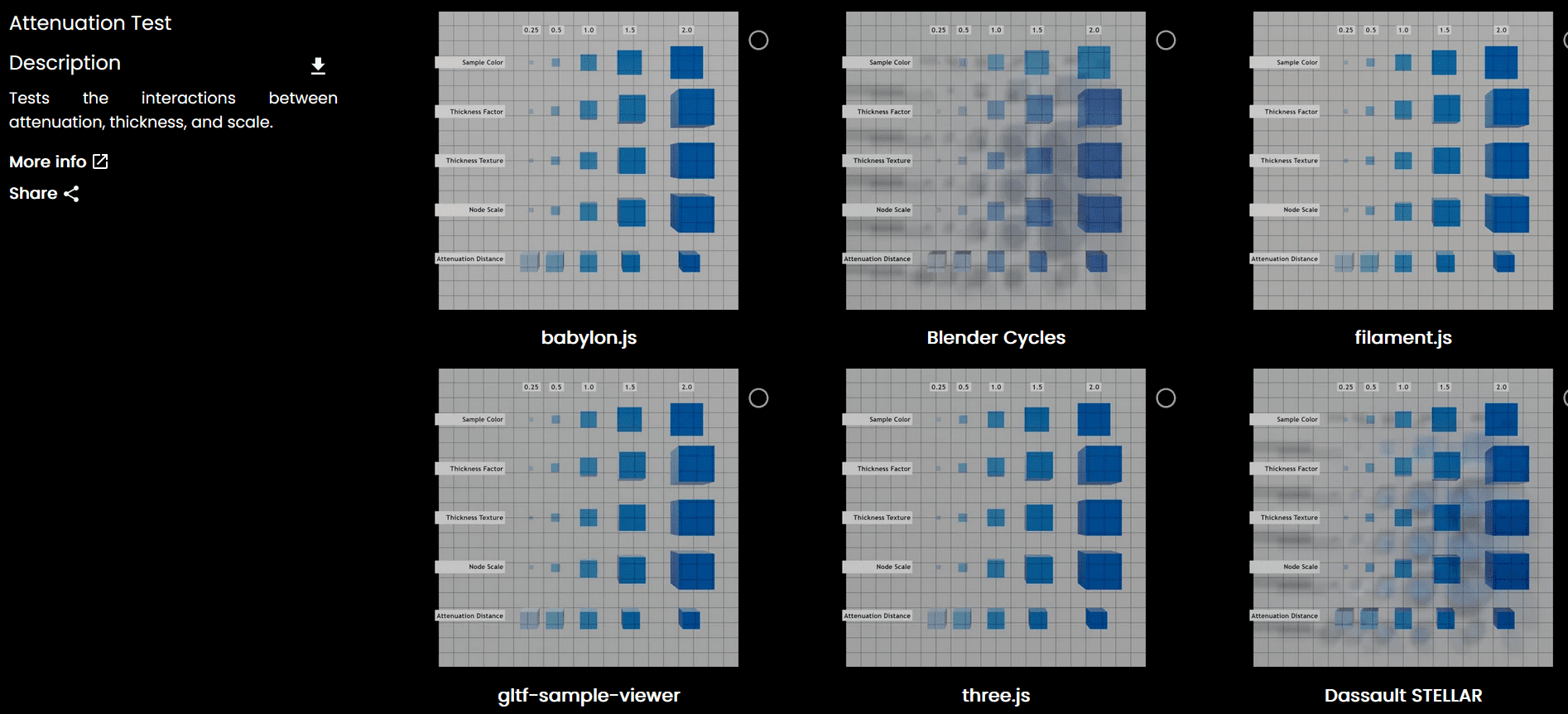

The redesigned glTF Render Fidelity Comparison site takes the same library mindset and aims it at output consistency. It frames the core goal as convergence around physically based rendering materials so you can expect a model to appear as intended across renderers and lighting environments. It also notes that this is ambitious, with realtime rendering quality still changing rapidly. *cough* understatement *cough*

The workflow is built for quick comparisons. You find a model by filtering on tags or searching, then pick two renders and select a view mode. The comparison modes include a side-by-side view, a slider view, and a difference view that shows the computed differences between the two images.
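To make the idea of a difference view concrete, here is a minimal sketch of one common way to compute it: a per-channel absolute difference between two same-sized renders, plus a single mean error number. This is an assumption for illustration only. The site does not document its exact metric here, and the names `difference_image` and `mean_abs_error` are hypothetical, not part of any glTF tooling.

```python
from typing import List, Tuple

# An image is modeled as rows of (R, G, B) pixels with 0-255 channels.
Pixel = Tuple[int, ...]
Image = List[List[Pixel]]


def difference_image(a: Image, b: Image) -> Image:
    """Return the per-channel absolute difference of two same-sized images.

    Hypothetical helper: one simple way to build a difference view,
    not necessarily the metric the comparison site uses.
    """
    same_rows = len(a) == len(b)
    if not same_rows or any(len(ra) != len(rb) for ra, rb in zip(a, b)):
        raise ValueError("images must have identical dimensions")
    return [
        [tuple(abs(ca - cb) for ca, cb in zip(pa, pb)) for pa, pb in zip(ra, rb)]
        for ra, rb in zip(a, b)
    ]


def mean_abs_error(a: Image, b: Image) -> float:
    """Collapse the difference image into one average per-channel error."""
    diff = difference_image(a, b)
    channels = [c for row in diff for px in row for c in px]
    return sum(channels) / len(channels) if channels else 0.0
```

Bright regions in the difference image mark where two renderers disagree most, and the mean error gives a rough single number to track over time. A perceptual metric would be a reasonable swap if raw channel differences prove too noisy.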

The site spans popular realtime web renderers and also includes path tracers. In practice, that means you can jump between quick interactive engines like Babylon.js and three.js, then also look at path-traced production renderings from Blender using Cycles and from V-Ray. It is not about crowning a winner. It is about seeing where two implementations diverge so you can reproduce, triage, and fix.

Comparison images are generated offline and submitted via pull requests to the site repository, which keeps the content auditable and repeatable. That approach also makes it easier for teams to add coverage for their own renderer outputs without inventing a new pipeline.

The biggest new trick is an experimental 3D comparison mode that enables a fully interactive comparison of two realtime renderers side by side. It currently supports three.js, Babylon.js, model-viewer, and three.js path tracer tooling. If you have ever tried to discuss a subtle lighting mismatch over screenshots, you know why interactive side-by-side matters. In one sentence, the updated site encourages you to compare outputs with fewer assumptions and more pixels on screen.

https://github.khronos.org/glTF-Render-Fidelity/model/AttenuationTest

Contributing is part of the design

Both sites are open to contributions. For sample assets, new models can be proposed via pull requests to the repository. For render fidelity, you can submit renderer outputs by following the repository guidelines for generating renders of a sample asset and placing the results in the appropriate location for comparison.

There is also a straightforward feedback loop via issues in the repositories, which keeps bug reports and feature requests attached to something actionable. Khronos is clearly aiming to make the glTF developer ecosystem easier to navigate, easier to test, and harder to break quietly.

https://github.khronos.org/glTF-Assets/

https://github.khronos.org/glTF-Render-Fidelity/